COMPANY DENIES ROBOTS FEED on the DEAD

http://wired.com/underwire/2009/07/military-researchers-develop-corpse-eating-robots/

“From the file marked “Evidently, many scientists have never seen even one scary sci-fi movie”: The Defense Department is funding research into battlefield robots that power themselves by eating human corpses. What could possibly go wrong? Since they apparently don’t own TVs or DVD players, researchers at Robotic Technology say the robots will collect organic matter, which “could” include human corpses, to use for fuel. But if you picked up anything on flesh-eating robots over the years, you know they’ll ignore that tasty soybean field and make a chow line right to the nearest dead body. And, if the machines can’t find enough dead people to eat, they can always make new ones. Researchers seem to get a kick out of ensuring the demise of the human species, so the project is called the Energetically Autonomous Tactical Robot, or EATR. Wired.com readers looking to save time and trouble are invited to begin marinating themselves in a mix of 10W30 and Heinz 57 Sauce immediately.”

PRESS RELEASE DENIAL

“[Please click here for an Important Message Concerning EATR]”

http://robotictechnologyinc.com/images/upload/file/Cyclone%20Power%20Press%20Release%20EATR%20Rumors%20Final%2016%20July%2009.pdf

http://wired.com/dangerroom/2009/07/company-denies-its-robots-feed-on-the-dead/

by Noah Shachtman / July 17, 2009

I never imagined I’d print a press release in full. Then, an hour ago, Howard Lovy e-mailed me the most incredible press release of all time…

Pompano Beach, Fla. – “In response to rumors circulating the internet on sites such as FoxNews.com, FastCompany.com and CNET News about a “flesh eating” robot project, Cyclone Power Technologies Inc. (Pink Sheets:CYPW) and Robotic Technology Inc. (RTI) would like to set the record straight: This robot is strictly vegetarian.

On July 7, Cyclone announced that it had completed the first stage of development for a beta biomass engine system used to power RTI’s Energetically Autonomous Tactical Robot (EATR™), a Phase II SBIR project sponsored by the Defense Advanced Research Projects Agency (DARPA), Defense Sciences Office. RTI’s EATR is an autonomous robotic platform able to perform long-range, long-endurance missions without the need for manual or conventional re-fueling. RTI’s patent pending robotic system will be able to find, ingest and extract energy from biomass in the environment. Despite the far-reaching reports that this includes “human bodies,” the public can be assured that the engine Cyclone has developed to power the EATR runs on fuel no scarier than twigs, grass clippings and wood chips – small, plant-based items for which RTI’s robotic technology is designed to forage. Desecration of the dead is a war crime under Article 15 of the Geneva Conventions, and is certainly not something sanctioned by DARPA, Cyclone or RTI.

“We completely understand the public’s concern about futuristic robots feeding on the human population, but that is not our mission,” stated Harry Schoell, Cyclone’s CEO. “We are focused on demonstrating that our engines can create usable, green power from plentiful, renewable plant matter. The commercial applications alone for this earth-friendly energy solution are enormous.”

Corporate Profiles

Cyclone Power Technologies is the developer of the award-winning Cyclone Engine – an eco-friendly external combustion engine with the power and versatility to run everything from portable electric generators and garden equipment to cars, trucks and locomotives. Invented by company founder and CEO Harry Schoell, the patented Cyclone Engine is a modern day steam engine, ingeniously designed to achieve high thermal efficiencies through a compact heat-regenerative process, and to run on virtually any fuel – including bio-diesels, syngas or solar – while emitting fewer greenhouse gases and irritating pollutants into the air. Currently in its late stages of development, the Cyclone Engine was recognized by Popular Science Magazine as the Invention of the Year for 2008, and was presented with the Society of Automotive Engineers’ AEI Tech Award in 2006 and 2008. Additionally, Cyclone was recently named Environmental Business of the Year by the Broward County Environmental Protection Department. For more information, visit www.cyclonepower.com.

Robotic Technology Incorporated (RTI), a Maryland, U.S.A. corporation chartered in 1985, provides systems and services in the fields of intelligent systems, robotic vehicles (including unmanned ground, air, and sea vehicles), robotics and automation, weapons systems, intelligent control systems, intelligent transportation systems, intelligent manufacturing, and other advanced technology for government, industry, and not-for-profit clients. Please visit www.robotictechnologyinc.com for more information.

Safe Harbor Statement

Certain statements in this news release may contain forward-looking information within the meaning of Rule 175 under the Securities Act of 1933 and Rule 3b-6 under the Securities Exchange Act of 1934, and are subject to the safe harbor created by those rules. All statements, other than statements of fact, included in this release, including, without limitation, statements regarding potential future plans and objectives of the company, are forward-looking statements that involve risks and uncertainties. There can be no assurance that such statements will prove to be accurate and actual results and future events could differ materially from those anticipated in such statements. The company cautions that these forward-looking statements are further qualified by other factors. The company undertakes no obligation to publicly update or revise any statements in this release, whether as a result of new information, future events or otherwise.”

ARTICLE 15, GENEVA CONVENTION

http://www.hrweb.org/legal/geneva1.html#Article%2015

“Article 15. At all times, and particularly after an engagement, Parties to the conflict shall, without delay, take all possible measures to search for and collect the wounded and sick, to protect them against pillage and ill-treatment, to ensure their adequate care, and to search for the dead and prevent their being despoiled. Whenever circumstances permit, an armistice or a suspension of fire shall be arranged, or local arrangements made, to permit the removal, exchange and transport of the wounded left on the battlefield. Likewise, local arrangements may be concluded between Parties to the conflict for the removal or exchange of wounded and sick from a besieged or encircled area, and for the passage of medical and religious personnel and equipment on their way to that area.”

DARPA (cont.)

http://www.theregister.co.uk/2009/06/02/darpa_self_industry_day/

http://www.arl.army.mil/www/default.cfm?Action=29&Page=29

CYCLONE ALL-FUEL MOTORS

http://www.cyclonepower.com/

http://www.cyclonepower.com/technical_information.html

http://www.cyclonepower.com/whe.html

http://www.cyclonepower.com/better.html

“A traditional gas or diesel powered internal combustion engine ignites fuel under high pressure inside its cylinders – an explosive process that requires precise fuel to air ratios. The Cyclone Engine is dramatically different. It burns its fuel in an external combustion chamber. Heat from this process is used to turn water into steam, which is what powers the engine. Because of the way we burn fuel – in an external combustion chamber under atmospheric pressure – we have incredible flexibility as to the fuel we use. In combustion tests we have used fuels derived from orange peels, palm oil, cottonseed oil, algae, used motor oil and fryer grease, as well as traditional fossil fuels … none of which required any modification to our engine. We have also burned propane, butane, natural gas and even powdered coal. What does this mean? Well, imagine having the choice to run your car on gasoline one day and 100% pure biodiesel the next, or even a mixture of the two. The Cyclone Engine can provide consumers with the power to use fuels that are less expensive, more plentiful and locally produced. This is better for our economy, national security and global environment. Additionally, we have built engines that don’t burn any fuel at all. Instead, we can recycle the heat from other sources such as ovens, furnaces, exhaust pipes or even solar collectors – thermal energy that would otherwise be wasted into the environment. Our Waste Heat Engine harvests this external heat to produce mechanical energy which, in turn, can run an electric generator.”

EATR

http://www.robotictechnologyinc.com/index.php/EATR

http://www.robotictechnologyinc.com/images/upload/file/Presentation%20EATR%20Brief%20Overview%206%20April%2009.pdf

‘EQUIVALENT’ to EATING

http://www.alternet.org/blogs/peek/141329/flesh-eating_robots_developed_for_pentagon/

Flesh-Eating Robots Developed for Pentagon

By Tana Ganeva / July 15, 2009

Thanks to the Pentagon and a Maryland robotics company, the robots who inherit the Earth when humanity is wiped out will be able to survive by feasting on the flesh of human corpses! The Energetically Autonomous Tactical Robot (EATR – yes, EATR) is described thusly on the company’s website: “The purpose of the Energetically Autonomous Tactical Robot (EATR)™ (patent pending) project is to develop and demonstrate an autonomous robotic platform able to perform long-range, long-endurance missions without the need for manual or conventional re-fueling, which would otherwise preclude the ability of the robot to perform such missions. The system obtains its energy by foraging – engaging in biologically-inspired, organism-like, energy-harvesting behavior which is the equivalent of eating. It can find, ingest, and extract energy from biomass in the environment (and other organically-based energy sources), as well as use conventional and alternative fuels (such as gasoline, heavy fuel, kerosene, diesel, propane, coal, cooking oil, and solar) when suitable.”

Doesn’t sound that sinister, since dead bodies are artfully skirted around in the description. Then again, a presentation on the technology is accompanied by the picture above, which appears to show a giant robot calmly shooting the last rebel human aircraft out of the sky with its eyes … As Peter Singer, a defense analyst at the Brookings Institution and author of Wired for War, said after seeing a presentation about the technology: “I really hope Skynet doesn’t learn about that kind of system.”

IF MACHINES COULD BALK / Taking Man Out Of The Loop

http://www.popsci.com/military-aviation-amp-space/article/2009-04/robots-war

http://wiredforwar.pwsinger.com/index.php?option=com_content&view=article&id=61&Itemid=54

http://wiredforwar.pwsinger.com/index.php?option=com_content&view=article&id=69&Itemid=71

http://bigthink.com/ideas/pw-singer-on-the-future-of-robotics-in-warfare

CONTACT

P.W. Singer

http://www.pwsinger.com/

http://wiredforwar.pwsinger.com/

http://www.pwsinger.com/articles.html

http://www.brookings.edu/experts/singerp.aspx

email : author [at] pwsinger [dot] com

CARNIVOROUS DOMESTIC ENTERTAINMENT ROBOTS

SPECULATIVE DESIGN

http://www.auger-loizeau.com/

http://www.newscientist.com/gallery/dn17367-carnivorous-domestic-entertainment-robots/

http://brightcove.newscientist.com/services/player/bcpid1873822884?bctid=27945753001

http://www.materialbeliefs.com/prototypes/cder.php

designed by James Auger, Jimmy Loizeau and Aleksandar Zivanovic

1. Lampshade robot : Flies and moths are naturally attracted to light. This lamp shade has holes based on the form of the pitcher plant enabling access for the insects but no escape. Eventually they expire and fall into the microbial fuel cell underneath. This generates the electricity to power a series of LEDs located at the bottom of the shade. These are activated when the house lights are turned off.

2. Mousetrap coffee table robot : A mechanised iris is built into the top of a coffee table. This is attached to an infrared motion sensor. Crumbs and food debris left on the table attract mice, who gain access to the table top via a hole built into one oversize leg. Their motion activates the iris and the mouse falls into the microbial fuel cell housed under the table. This generates the energy to power the iris motor and sensor.

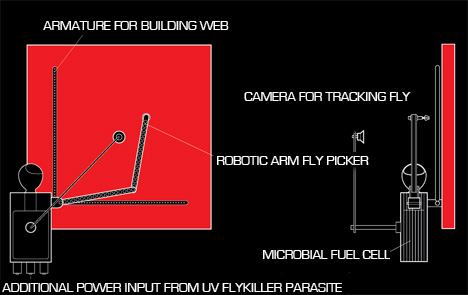

3. Fly stealing robot : This robot encourages spiders to build their webs within its armature. Flies that become trapped in the web are tracked by a camera. The robotic arm then moves over the dead fly, picks it up and drops it into the microbial fuel cell. This generates electricity to partially power the camera and robotic arm. This robot also relies on the UV fly killer parasite robot to supplement its energy needs.

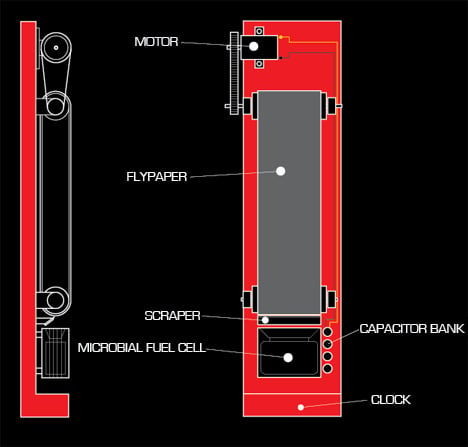

4. UV fly killer parasite : A microbial fuel cell is housed underneath an ultraviolet fly killer. As the flies expire they fall into the fuel cell, generating electricity that is stored in the capacitor bank. This energy is available for the fly stealing robot.

5. Fly-paper robotic clock : This robot uses flypaper on a roller mechanism to entrap insects. As the flypaper passes over a blade, captured insects are scraped into a microbial fuel cell. Electricity is generated to turn the rollers and power a small LCD clock.
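The UV fly killer parasite banks its output in a capacitor for the fly stealing robot to draw on. The designers give no component values, so what follows is a minimal sketch assuming a hypothetical capacitor bank discharged from a full-charge voltage down to a cutoff below which the load stops working. Since stored energy is ½CV², the usable share is ½C(V_full² − V_cutoff²).

```python
def usable_energy_joules(capacitance_f: float, v_full: float, v_cutoff: float) -> float:
    """Energy released when a capacitor discharges from v_full down to v_cutoff.

    Stored energy is 0.5 * C * V**2, so the usable share is the difference
    between the stored energy at the two voltages.
    """
    if v_cutoff > v_full:
        raise ValueError("cutoff voltage must not exceed the full-charge voltage")
    return 0.5 * capacitance_f * (v_full ** 2 - v_cutoff ** 2)


# Hypothetical values, not taken from the Auger-Loizeau-Zivanovic prototypes:
# a 1 F bank charged to 2.5 V feeding a load that browns out below 1.0 V.
print(usable_energy_joules(1.0, 2.5, 1.0))  # 2.625
```

Because energy grows with the square of voltage, even a fairly high cutoff leaves most of the stored charge usable: in this example, 2.625 J of the 3.125 J held at 2.5 V.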

MICROBIAL FUEL CELLS (MFC)

http://www.microbialfuelcell.org/www/

http://microbialfuelcell.wordpress.com/

http://en.wikipedia.org/wiki/Microbial_fuel_cell

http://www.brl.ac.uk/projects/ecobot/PublicationsAbstractFolder/EnzMicrobTech(2005).pdf

http://www.brl.ac.uk/projects/ecobot/PublicationsAbstractFolder/3rdBiolFuelCellsSymp(2008).pdf

Small scale MFCs – Large scale power / By I. Ieropoulos, J. Greenman and C. Melhuish

Abstract : “This study reports on the findings from the investigation into small scale (6.25 mL) Microbial Fuel Cells (MFC), connected together as a network of multiple units. The MFCs contained unmodified (no catalyst) carbon fibre electrodes and a standard ion-exchange membrane for the proton transfer from the anode to the cathode. A stack of four (4) of these units connected together was – in terms of volume – the equivalent of a single analytical size (25 mL) MFC with the same unmodified electrode material and PEM, but produced a peak power density of 60 mW/m². This was 2 orders of magnitude higher than the power density produced from a single analytical size MFC. The anode microbial culture was of the type commonly found in domestic wastewater fed with 5 mM acetate as the carbon-energy (C/E) source. The cultures had matured in the MFC environment for approximately 2 months before being re-inoculated in the experimental MFC units. The cathode was of the O2 diffusion open-to-air type, but for the purposes of the polarization experimental runs, the cathodic electrodes were moistened with ferricyanide solution, which performs more efficiently during the initial experimental stages. It is furthermore shown that polarity reversal is a function of MFC internal impedance. This occurs in series-connected networks in which one or more MFCs have developed an internal resistance different from that of the rest of the stack. To the best of the authors’ knowledge, this is the first report on small scale MFCs producing such high power density figures.”
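The abstract's point that polarity reversal depends on internal impedance can be illustrated with the simplest electrical model of a cell: an ideal voltage source (its EMF) in series with an internal resistance. In a series stack, the same current flows through every cell. A cell whose internal resistance has grown well beyond its neighbours' can therefore be pushed to a negative terminal voltage. What follows is a minimal sketch under that linear assumption. The EMF, resistance and load values are hypothetical, and real MFCs are non-linear, so this is only a first approximation.

```python
from typing import List


def stack_terminal_voltages(emfs_v: List[float], internal_ohms: List[float],
                            load_ohms: float) -> List[float]:
    """Terminal voltage of each cell in a series stack driving a resistive load.

    Each cell is modelled as an EMF in series with an internal resistance.
    One current I flows through every cell, and cell k's terminal voltage is
    emf_k - I * r_k, which goes negative (reverses) when I * r_k > emf_k.
    """
    if len(emfs_v) != len(internal_ohms):
        raise ValueError("need one internal resistance per cell")
    current_a = sum(emfs_v) / (sum(internal_ohms) + load_ohms)
    return [emf - current_a * r for emf, r in zip(emfs_v, internal_ohms)]


# Hypothetical four-cell stack: three matched cells and one whose internal
# resistance has drifted ten times higher than the rest.
voltages = stack_terminal_voltages([0.6] * 4, [100.0, 100.0, 100.0, 1000.0], 500.0)
reversed_cells = [i for i, v in enumerate(voltages) if v < 0]
print([round(v, 3) for v in voltages])  # [0.467, 0.467, 0.467, -0.733]
print("reversed:", reversed_cells)      # reversed: [3]
```

The reversed cell does more than stop contributing. It subtracts from the stack voltage and dissipates power, which is why matching internal resistances matters in series networks.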

AVAILABLE NOW : £53.00 (GBP)

http://www.ncbe.reading.ac.uk/NCBE/MATERIALS/MICROBIOLOGY/fuelcellassem.html

http://www.ncbe.reading.ac.uk/NCBE/MATERIALS/PDF/Price08.pdf

http://www.ncbe.reading.ac.uk/NCBE/MATERIALS/MICROBIOLOGY/fuelcell.html

“With readily-available chemicals (such as methylene blue), the fuel cell can be used to generate a small electrical current from the metabolic activities of ordinary yeast! Fuel cells like this are now used by a leading UK brewery to test the activity of the yeast used for their ales. The NCBE’s improved high-quality cell is supplied with neoprene gaskets, carbon fibre electrode material, cation-exchange membrane and an illustrated instruction booklet. The microbial fuel cell is ideal for investigations of respiration and students have even won prizes with it at international science fairs.”

FLY-POWERED FLYTRAP

http://www.newscientist.com/article/dn6366-selfsustaining-killer-robot-creates-a-stink.html

Self-sustaining killer robot creates a stink

By Duncan Graham-Rowe / 09 September 2004

It may eat flies and stink to high heaven, but if this robot works, it will be an important step towards making robots fully autonomous. To survive without human help, a robot needs to be able to generate its own energy. So Chris Melhuish and his team of robotics experts at the University of the West of England in Bristol are developing a robot that catches flies and digests them in a special reactor cell that generates electricity. So what is the downside? The robot will most likely have to attract the hapless flies by using a stinking lure concocted from human excrement. Called EcoBot II, the robot is part of a drive to make “release and forget” robots that can be sent into dangerous or inhospitable areas to carry out remote industrial or military monitoring of, say, temperature or toxic gas concentrations. Sensors on the robot feed a data logger that periodically radios the results back to a base station.

Exoskeleton electricity

The robot’s energy source is the sugar in the polysaccharide called chitin that makes up a fly’s exoskeleton. EcoBot II digests the flies in an array of eight microbial fuel cells (MFCs), which use bacteria from sewage to break down the sugars, releasing electrons that drive an electric current. In its present form, EcoBot II still has to be manually fed fistfuls of dead bluebottles, but the ultimate aim of the UWE robotics team is to make the droid predatory, using sewage as a bait to catch the flies. “One of the great things about flies is that you can get them to come to you,” says Melhuish. The team has yet to tackle this, but speculates that it would involve using a bottleneck-style flytrap with some form of pump to suck the flies into the digestion chambers. With a top speed of 10 centimetres per hour, EcoBot II’s roving prowess is still modest to say the least. “Every 12 minutes it gets enough energy to take a step forwards two centimetres and send a transmission back,” says Melhuish. But it does not need to catch too many flies to do so, says team member Ioannis Ieropoulos. In tests, EcoBot II travelled for five days on just eight fat flies – one in each MFC.
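Melhuish's figures are self-consistent. A robot that banks energy until it can afford one 2 cm step plus a transmission every 12 minutes makes five steps an hour, which matches the quoted top speed of 10 cm per hour. Below is a minimal sketch of this charge-then-act arithmetic. The harvest power and per-action energy in the second call are hypothetical values, chosen only to reproduce a 12-minute cycle. They are not measurements from the EcoBot II papers.

```python
def cycle_seconds(action_energy_j: float, harvest_power_w: float) -> float:
    """Time needed to bank enough energy for one action at a constant harvest rate."""
    return action_energy_j / harvest_power_w


def average_speed_cm_per_hour(cycle_s: float, step_cm: float) -> float:
    """Average speed of a robot that makes one fixed-length step per charge cycle."""
    steps_per_hour = 3600.0 / cycle_s
    return steps_per_hour * step_cm


# Figures quoted by Melhuish: one 2 cm step every 12 minutes.
print(average_speed_cm_per_hour(12 * 60, 2.0))  # 10.0

# Hypothetical energy budget: 0.1 mW harvested, 72 mJ per step-plus-transmission.
print(round(cycle_seconds(0.072, 0.0001) / 60, 2))  # 12.0 (minutes per cycle)
```

The same relation shows the two levers available to the designers. Speed rises either by harvesting more power or by spending less energy per step.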

Donated sewage

So how do flies get turned into electricity? Each MFC comprises an anaerobic chamber filled with raw sewage slurry – donated by UWE’s local utility, Wessex Water. The flies become food for the bacteria that thrive in the slurry. Enzymes produced by the bacteria break down the chitin to release sugar molecules. These are then absorbed and metabolised by the bacteria. In the process, the bacteria release electrons that are harnessed to create an electric current. Previous efforts to use carnivorous MFCs to drive a robot included an abortive UWE effort: the Slugbot. This was designed to hunt slugs on farms by using imaging systems to spot and grab the pests, and then deliver them to a digester that produces methane to power a fuel cell. The electricity generated would have been used to charge the Slugbot when it arrived at a docking station. But the methane-based system took too long to produce power, and the team realised that MFCs offered far more promise. Elsewhere, researchers in Florida created a train-like robot dubbed Chew Chew (New Scientist print edition, 22 July 2000) that used MFCs to charge a battery, but the bacteria had to be fed on sugar cubes. For an autonomous robot to survive in the wild, relying on such refined foodstuffs is not an option, says Melhuish. EcoBot II, on the other hand, is the first robot to use unrefined fuel. Just do not stand downwind.

DEAD FLIES

http://www.brl.ac.uk/projects/ecobot/PublicationsAbstractFolder/AutonRob(2006).pdf

http://www.brl.ac.uk/projects/ecobot/PublicationsAbstractFolder/AdvRobSys(2005).pdf

http://www.brl.ac.uk/projects/ecobot/PublicationsAbstractFolder/TAROS(2005).pdf

Artificial symbiosis: Towards a robot-microbe partnership

By Ioannis Ieropoulos, Chris Melhuish, John Greenman, Ian Horsfield

Abstract : “The development of the robot EcoBot II, which exhibits some partial form of energetic autonomy, is reported. Microbial Fuel Cells were used as the onboard self-sustaining power supply, which incorporated bacterial cultures from sewage sludge and employed oxygen from free air for oxidation in the cathode. This robot was able to perform phototaxis, temperature sensing and wireless transmission of sensed data when fed (amongst other substrates) with flies. This is the first robot in the world to utilise unrefined substrate, oxygen from free air and perform (three) different token tasks. The work presented in this paper focuses on the combination of flies (substrate) and oxygen (cathode) to power the EcoBot II.”

CONTACT

http://westengland.academia.edu/IoannisYannisIeropoulos

http://people.brl.ac.uk/people/template.jsp?username=i-ieropo

email : Ioannis.Ieropoulos [at] brl.ac [dot] uk

ECOBOT PAPERS

http://en.scientificcommons.org/43546485

http://en.scientificcommons.org/42318299

ECOBOT III : ENERGY AUTONOMY

http://www.brl.ac.uk/projects/ecobot/ecobot%20III/index.html

http://www.brl.ac.uk/projects/ecobot/artificial%20gill/artificial%20gill.html

http://www.brl.ac.uk/projects/ecobot/index.html

http://www.brl.ac.uk/research.html

http://www.brl.ac.uk/gallery.html

http://www.brl.ac.uk/projects/ecobot/PublicationsAbstractFolder/EnzMicrobTech(2009).pdf

‘Electricity from landfill leachate using microbial fuel cells’ / By J. Greenman, A. Gálvez, L. Giusti, I. Ieropoulos

CARNIVOROUS ROBOTS DO EXIST

http://news.nationalgeographic.com/news/2006/03/0331_060331_robot_flesh.html

Bug-Eating Robots Use Flies for Fuel

BY Sean Markey / March 31, 2006

At the Bristol Robotics Laboratory in England, researchers are designing their newest bug-eating robot, Ecobot III. The device is the latest in a series of small robots to emerge from the lab that are powered by a diet of insects and other biomass. “We all talk about robots being able to do stuff on their own, being intelligent and autonomous,” said lab director Chris Melhuish. “But the truth of the fact is that without energy, they can’t do anything at all.” Most robots today draw that energy from electrical cords, solar panels, or batteries. But in the future some robots will need to operate beyond the reach of power grids, sunlight, or helping human hands. Melhuish and his colleagues think such release-and-forget robots can satisfy their energy needs the same way wild animals do, by foraging for food. “Animals are the proof that this is possible,” he said.

Slugbot

Over the last decade, Melhuish’s team has produced a string of bots powered by sugar, rotten apples, or dead flies. The biomass is converted into electricity through a series of stomachlike microbial fuel cells, or MFCs. Living batteries, MFCs generate power from colonies of bacteria that release electrons as the microorganisms digest plant and animal matter. (Electricity is simply a flow of electrons.) The lab’s first device, named Slugbot, was an artificial predator that hunted for common garden slugs. While Slugbot never digested its prey, it laid the groundwork for future bots powered by biomass. In 2004 researchers unveiled Ecobot II. About the size of a dessert plate, the device could operate for 12 days on a diet of eight flies. “The flies [were] given as a whole insect to each of the fuel cells on board the robot,” said Ioannis Ieropoulos, who co-developed Ecobot II as part of his Ph.D. research. With its capacitors charged, the bot could roll 3 to 6 inches (8 to 16 centimeters) an hour, moving toward light while recording temperature. It sent data via a radio transmitter. While hardly a speedster, Ecobot II was the first robot powered by biomass that could sense its world, process it, act in it, and communicate, Melhuish says. The scientist sees analogs in the autonomously powered robots of the future. “If you really do want robots that are going to … monitor fences, [oceans], pollution levels, or carbon dioxide – all of those things – what you need are very, very cheap little robots,” he said. “Now our robots are quite big. But in 20 to 30 years’ time, they could be quite minuscule.”

More Power

Whether microbial fuel-cell technology can advance enough to power those robots, however, is unclear. Stuart Wilkinson, a mechanical engineer at the University of South Florida in Tampa, developed the world’s first biomass-powered robot, a toy-train-like bot nicknamed Chew-Chew that ran on sugar cubes. He says the major drawback of MFCs is that it takes a big fuel cell to produce a small amount of power. Most AA batteries, for example, produce far more power than a single MFC. “MFCs are capable of running low-power electronics, but are not well suited to power-hungry motors needed for machine motion,” Wilkinson said in an email interview. He added that scientists “need to develop MFC technology further before it can be of much practical use for robot propulsion.” Ieropoulos, Ecobot II’s co-developer, agrees that MFCs need a power boost. He and his colleagues are exploring ways to improve the materials used in MFCs and to maintain resident microbes at their peak.
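Wilkinson's point can be put into numbers. He says it takes a big fuel cell to produce a small amount of power, and the Ieropoulos, Greenman and Melhuish abstract above gives a peak power density of 60 mW/m². The sketch below makes two assumptions, neither of which comes from the article. First, it assumes power scales linearly with electrode area. That is optimistic, since the same abstract reports that small cells outperform larger ones per unit area. Second, it uses a hypothetical 0.5 W motor load.

```python
PEAK_DENSITY_W_PER_M2 = 0.060  # 60 mW/m^2, the peak figure from the small-scale MFC abstract


def power_from_area_w(area_m2: float, density_w_per_m2: float = PEAK_DENSITY_W_PER_M2) -> float:
    """Power from an electrode of the given area, assuming linear scaling (an assumption)."""
    return area_m2 * density_w_per_m2


def area_for_power_m2(target_w: float, density_w_per_m2: float = PEAK_DENSITY_W_PER_M2) -> float:
    """Electrode area needed to reach target_w, assuming linear scaling (an assumption)."""
    return target_w / density_w_per_m2


# A 10 cm x 10 cm electrode (0.01 m^2) yields well under a milliwatt...
print(f"{power_from_area_w(0.01) * 1000:.2f} mW")  # 0.60 mW
# ...while a hypothetical 0.5 W motor load would need several square metres.
print(f"{area_for_power_m2(0.5):.1f} m^2")  # 8.3 m^2
```

Even at the best density reported, a motor of modest size would need an electrode area closer to a room's floor than a robot's chassis. That is consistent with Wilkinson's view that MFCs currently suit low-power electronics rather than propulsion.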

Bot Weaning

To date, the Bristol team has hand-fed its bots. But if the researchers are going to realize their vision of autonomously powered robots, then the machines will need to start gathering their own food. When Ecobot II debuted in 2004, Melhuish suggested one way that it might lure and capture its fly food-source: a combination fly-trap/suction pump baited with pheromones. Whether the accessory will appear in Ecobot III is anyone’s guess. The BRL team remains tight-lipped about their current project, preferring to finish their work in secret before discussing it publicly. Melhuish will say this, however: “What we’ve got to do is develop a better digestion system … . There are many, many problems that have to be overcome, and waste removal is one of them.”

YEAST-POWERED FUEL CELLS

http://www.newscientist.com/article/dn16882-yeastpowered-fuel-cell-feeds-on-human-blood.html

SEWAGE SLURRY AS FUEL

http://www.newscientist.com/article/dn4761-plugging-into-the-power-of-sewage.html

PLANKTON-EATING SUBMARINES

http://www.newscientist.com/article/mg19125715.900-plankton-could-power-robotic-submarines.html

MACHINES BREAK

http://www.wired.com/dangerroom/2007/10/video-robo-weap/

By Noah Shachtman / October 18, 2007

“The tragedy in South Africa that killed nine soldiers isn’t the first time a robotic weapon has spun out of control. Here’s a video I obtained a few years back, showing an XM-101 Common Remotely Operated Weapons Station connected to an Apache chaingun, emptying its magazine of 30 mm high explosive rounds – and then turning towards the camera, looking for new targets to nail. I’m told – but cannot confirm – that this footage was shot during a demonstration for VIPs, and that several members of Congress would’ve been in serious jeopardy, had the weapon not run out of ammo. {Thanks to info from KS, who “was there when it happened, and I was lying flat on the ground together with US Army officers.”}”

https://www.youtube.com/watch?v=u7poF0M7H5M

BAD CODE

http://www.itweb.co.za/sections/business/2007/0710161034.asp?S=IT%20in%20Defence&A=DFN&O=FPTOP

Did software kill soldiers?

BY Leon Engelbrecht / 16 October 2007

The National Defence Force is probing whether a software glitch led to an antiaircraft cannon malfunction that killed nine soldiers and seriously injured 14 others during a shooting exercise on Friday. SA National Defence Force spokesman brigadier general Kwena Mangope says the cause of the malfunction is not yet known and will be determined by a Board of Inquiry. The police are conducting a separate investigation into the incident. Media reports say the shooting exercise, using live ammunition, took place at the SA Army’s Combat Training Centre, at Lohatlha, in the Northern Cape, as part of an annual force preparation endeavour. Mangope told The Star that it “is assumed that there was a mechanical problem, which led to the accident. The gun, which was fully loaded, did not fire as it normally should have,” he said. “It appears as though the gun, which is computerised, jammed before there was some sort of explosion, and then it opened fire uncontrollably, killing and injuring the soldiers.” Other reports have suggested a computer error might have been to blame. Defence pundit Helmoed-Römer Heitman told the Weekend Argus that if “the cause lay in computer error, the reason for the tragedy might never be found”. Electronics engineer and defence company CEO Richard Young says he can’t believe the incident was purely a mechanical fault. He says his company, C2I2, in the mid-1990s, was involved in two air defence artillery upgrade programmes, dubbed Projects Catchy and Dart.

Software details

During the shooting trials at Armscor’s Alkantpan shooting range, “I personally saw a gun go out of control several times,” Young says. “They made a temporary rig consisting of two steel poles on each side of the weapon, with a rope in between to keep the weapon from swinging. The weapon eventually knocked the poles down.” Young says he was also told at the time that the gun’s original equipment manufacturer, Oerlikon, had warned that the GDF Mk V twin 35mm cannon system was not designed for fully automatic control. Yet the guns were automated. At the time, SA was still subject to an arms embargo and Oerlikon played no role in the upgrade. “If I was an engineer on the Board of Inquiry, I would ask for all details about the software for the fire control system and gun drives,” Young says. “If it was not a mechanical or operating system error, you must find out which company developed the software and did the upgrade.”

Young says in the 1990s the defence force’s acquisitions agency, Armscor, allocated project money on a year-by-year basis, meaning programmes were often rushed. “It would not surprise me if major shortcuts were taken in the qualification of the upgrades. A system like that should never fail to the dangerous mode [rather to the safe mode], except if it was a shoddy design or a shoddy modification. I think there have been multiple failures here: in software and the absence of interlocking safeguards.” He asks if the guns were given arcs of fire and whether these were enforced with electromechanical end stops. “On a firing range you don’t want guns to fire through 360 degrees.” Oerlikon’s local agent, Intertechnic, did not respond to requests for comment. The SANDF said investigations were still under way. The air defence artillery will, in the next two years, receive new missiles, radar and computer-based fire control equipment worth R3 billion as part of projects Guardian and Protector.
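Young makes two recommendations: a system that fails to the safe mode, and arcs of fire that are actually enforced. Both translate naturally into a permissive (default-deny) interlock. Firing is allowed only when every condition is positively confirmed, and any missing or invalid input inhibits it. The sketch below is hypothetical and says nothing about the actual GDF Mk V fire-control software. The names `FiringArc` and `fire_permitted` are invented for illustration. A software check like this complements the electromechanical end stops Young describes rather than replacing them.

```python
import math
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class FiringArc:
    """Permitted azimuth sector, measured clockwise from start_deg to end_deg."""
    start_deg: float
    end_deg: float

    def contains(self, azimuth_deg: float) -> bool:
        start, end, az = self.start_deg % 360, self.end_deg % 360, azimuth_deg % 360
        if start <= end:
            return start <= az <= end
        return az >= start or az <= end  # sector wraps through north (0 deg)


def fire_permitted(azimuth_deg: Optional[float], arc: FiringArc,
                   sensors_healthy: bool, operator_enable: bool) -> bool:
    """Default-deny interlock: any doubt about the inputs inhibits firing."""
    if not (sensors_healthy and operator_enable):
        return False
    if azimuth_deg is None or not math.isfinite(azimuth_deg):
        return False  # a lost or corrupt position reading fails safe
    return arc.contains(azimuth_deg)


range_arc = FiringArc(300.0, 60.0)  # hypothetical 120-degree sector over the range
print(fire_permitted(350.0, range_arc, True, True))  # True
print(fire_permitted(180.0, range_arc, True, True))  # False: outside the arc
print(fire_permitted(None, range_arc, True, True))   # False: no position data
print(fire_permitted(10.0, range_arc, False, True))  # False: sensor fault
```

The design choice is that the function never has to detect a dangerous state in order to stop. It only returns `True` when the safe conditions are all affirmatively present, which is the "fail to the safe mode" behaviour Young describes.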

ASIMOV’S THREE LAWS of ROBOTICS, AMENDED

http://www.wired.com/dangerroom/2007/10/robot-cannon-ki/

http://en.wikipedia.org/wiki/Three_Laws_of_Robotics

http://www.asimovonline.com/oldsite/essay_guide.html#robotics

http://robots.net/article/2885.html

http://www.physorg.com/news164887377.html

https://www.youtube.com/watch?v=51H8rZ7TJeg

PSYCHOPATHIC ROBOTS, BY DEFINITION

http://works.bepress.com/cgi/viewcontent.cgi?article=1000&context=weng_yueh_hsuan

http://www.wired.com/gadgetlab/2009/07/robo-ethics/

Two years ago, a military robot used in the South African army killed nine soldiers after a malfunction. Earlier this year, a Swedish factory was fined after a robot machine injured one of the workers (though part of the blame was assigned to the worker). Robots have been found guilty of other smaller offenses such as incorrectly responding to a request. So how do you prevent problems like this from happening? Stop making psychopathic robots, say robot experts. “If you build artificial intelligence but don’t think about its moral sense or create a conscious sense that feels regret for doing something wrong, then technically it is a psychopath,” says Josh Hall, a scientist who wrote the book Beyond AI: Creating the Conscience of the Machine. For years, science fiction author Isaac Asimov’s Three Laws of Robotics were regarded as sufficient for robotics enthusiasts. The laws, as first laid out in the short story “Runaround,” were simple: A robot may not injure a human being or allow one to come to harm; a robot must obey orders given by human beings; and a robot must protect its own existence. Each of the laws takes precedence over the ones following it, so that under Asimov’s rules, a robot cannot be ordered to kill a human, and it must obey orders even if that would result in its own destruction. But as robots have become more sophisticated and more integrated into human lives, Asimov’s laws are just too simplistic, says Chien Hsun Chen, coauthor of a paper published in the International Journal of Social Robotics last month. The paper has sparked off a discussion among robot experts who say it is time for humans to get to work on these ethical dilemmas.
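The precedence rule described here, with each law overriding the ones after it, amounts to a lexicographic ordering. Candidate actions are compared on the First Law first, and the Second and Third Laws are consulted only to break ties. The toy sketch below assumes each action has already been labelled with the laws it would violate. That labelling is the hard part, and it is exactly where experts say the laws are too simplistic. The `Action` type and `choose` function are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, Tuple


@dataclass(frozen=True)
class Action:
    name: str
    harms_human: bool     # First Law: injures a human, or lets one come to harm
    disobeys_order: bool  # Second Law: ignores an order given by a human
    endangers_self: bool  # Third Law: fails to protect the robot's own existence


def violations(action: Action) -> Tuple[bool, bool, bool]:
    # Tuples compare element by element and False < True, so a First Law
    # violation outweighs any combination of lower-law violations.
    return (action.harms_human, action.disobeys_order, action.endangers_self)


def choose(actions: Iterable[Action]) -> Action:
    """Pick the action whose violations are least severe in precedence order."""
    return min(actions, key=violations)


# Ordered to kill: refusing breaks only the Second Law, so the robot refuses.
print(choose([Action("comply", True, False, False),
              Action("refuse", False, True, False)]).name)  # refuse
# Ordered into danger: obeying breaks only the Third Law, so the robot obeys.
print(choose([Action("comply", False, False, True),
              Action("refuse", False, True, False)]).name)  # comply
```

Both outcomes match the article's summary of Asimov's rules. A robot cannot be ordered to kill a human, yet it must obey even at the cost of its own destruction.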

Accordingly, robo-ethicists want to develop a set of guidelines that could outline how to punish a robot, decide who regulates them and even create a “legal machine language” that could help police the next generation of intelligent automated devices. Even if robots are not entirely autonomous, there needs to be a clear path of responsibility laid out for their actions, says Leila Takayama, research scientist at open-source robotics developer Willow Garage. “We have to know who takes credit when the system does well and when it doesn’t,” she says. “That needs to be very transparent.” A human-robot co-existence society could emerge by 2030, says Chen in his paper. Already, iRobot’s Roomba robotic vacuum cleaner and Scooba floor cleaner are part of more than 3 million American households. The next generation of robots will be more sophisticated and is expected to provide services such as nursing, security, housework and education. These machines will be able to make independent decisions and work largely unsupervised. That’s why, says Chen, it may be time to decide who regulates robots.

The rules for this new world will have to cover how humans should interact with robots and how robots should behave. Responsibility for a robot’s actions is a one-way street today, says Hall. “So far, it’s always a case that if you build a machine that does something wrong it is your fault because you built the machine,” he says. “But there’s a clear day in the future that we will build machines that are complex enough to make decisions and we need to be ready for that.” Assigning blame in case of a robot-related accident isn’t always straightforward. Earlier this year, a Swedish factory was fined after a malfunctioning robot almost killed a factory worker who was attempting to repair the machine, which was generally used to lift heavy rocks. Thinking he had cut off the power supply, the worker approached the robot without hesitation, but the robot came to life and grabbed the victim’s head. In that case, the prosecutor held the factory liable for poor safety conditions but also laid part of the blame on the worker. “Machines will evolve to a point where we will have to increasingly decide whether the fault for doing something wrong lies with someone who designed the machine or the machine itself,” says Hall. Rules also need to govern social interaction between robots and humans, says Henrik Christensen, head of robotics at Georgia Institute of Technology’s College of Computing. For instance, robotics expert Hiroshi Ishiguro has created a bot based on his own likeness. “There we are getting into the issue of how you want to interact with these robots,” says Christensen. “Should you be nice to a person and rude to their likeness? Is it okay to kick a robot dog but tell your kids to not do that with a normal dog? How do you tell your children about the difference?”

Christensen says ethics around robot behavior and human interaction are not so much about protecting either party as about ensuring that the kind of interaction we have with robots is the “right thing.” Some of these guidelines will be hard-coded into the machines, others will become part of the software and a few will require independent monitoring agencies, say experts. That will also require creating a “legal machine language,” says Chen: a set of non-verbal rules, parts or all of which can be encoded in the robots. These rules would cover areas such as usability, dictating, for instance, how close a robot can come to a human under various conditions, and safety guidelines that would conform to our current expectations of what is lawful. Still, efforts to create a robot that can successfully interact with humans over time will likely be incomplete, say experts. “People have been trying to sum up what we mean by moral behavior in humans for thousands of years,” says Hall. “Even if we get guidelines on robo-ethics the size of the federal code it would still fall short. Morality is impossible to write in formal terms.”
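To make the idea of an encodable usability rule concrete, here is a small sketch of Chen's example, "how close a robot can come to a human under various conditions," written as a table lookup. The conditions and the distances are placeholders invented for this illustration. Neither the article nor Chen's paper, as quoted, gives any actual values.

```python
from enum import Enum


class Condition(Enum):
    """Hypothetical operating conditions a proximity rule might distinguish."""
    NORMAL = "normal"
    HUMAN_ASLEEP = "human_asleep"
    CARRYING_LOAD = "carrying_load"


# Placeholder minimum distances in metres; illustrative only, not from any standard.
MIN_DISTANCE_M = {
    Condition.NORMAL: 0.5,
    Condition.HUMAN_ASLEEP: 1.0,
    Condition.CARRYING_LOAD: 1.5,
}


def may_approach(distance_m: float, condition: Condition) -> bool:
    """Return True if stopping `distance_m` metres from a human respects the rule."""
    return distance_m >= MIN_DISTANCE_M[condition]


print(may_approach(0.6, Condition.NORMAL))        # allowed under the placeholder table
print(may_approach(0.6, Condition.HUMAN_ASLEEP))  # refused under the placeholder table
```

The rule lives in data rather than in branching code, so a regulator or monitoring agency could in principle inspect or revise the table without touching the control logic. That is one reading of what a machine-readable "legal" rule might look like.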

CONTACT

Yueh-Hsuan Weng

http://www.yhweng.tw/

http://works.bepress.com/weng_yueh_hsuan/

email : yhweng.cs94g@nctu.edu.tw

CODING an ETHICAL PATCH / Embedding Ethics Into Military Robots

http://www.cc.gatech.edu/ai/robot-lab/online-publications/formalizationv35.pdf

http://www.cc.gatech.edu/ai/robot-lab/online-publications/ArkinUlamTechReport2009.pdf

http://www.cc.gatech.edu/ai/robot-lab/online-publications/techinwar-arkin-final.pdf

CONTACT

Ronald Arkin

http://www.cc.gatech.edu/ai/robot-lab/

http://www.cc.gatech.edu/aimosaic/faculty/arkin/

email : arkin [at] gatech [dot] edu

ARTIFICIAL CONSCIENCE

http://www.cc.gatech.edu/ai/robot-lab/ethics/

http://www.cc.gatech.edu/ai/robot-lab/ethics/#pub

http://www.cc.gatech.edu/aimosaic/robot-lab/research/MissionLab/

http://www.cc.gatech.edu/ai/robot-lab/ethics/#multi

PROGRAMMING GUILT

http://hplusmagazine.com/articles/robotics/teaching-robots-rules-war

http://news.cnet.com/8301-11424_3-10278435-90.html

http://news.cnet.com/8301-11386_3-10281328-76.html

Q&A: Robotics engineer aims to give robots a humane touch

by Dara Kerr / July 8, 2009

“Right now, we are looking at designing systems that can comply with internationally prescribed laws of war and our own codes of conduct and rules of engagement. We’ve decided it is important to embed in these systems the moral emotion of guilt.” –Ronald Arkin

Can robots be more humane than humans in fighting wars? Robotics engineer Ronald Arkin of the Georgia Institute of Technology believes this is a not-too-distant possibility. He has just finished a three-year contract with the U.S. Army designing software to create ethical robots.

As robots are increasingly being used by the U.S. military, Arkin has devoted his lifework to configuring robots with a built-in “guilt system” that eventually could make them better at avoiding civilian casualties than human soldiers. These military robots would be embedded with internationally prescribed laws of war and rules of engagement, such as those in the Geneva Conventions.

Arkin talked with CNET News about how robots can be ethically programmed and some of the philosophical questions that come up when using machines in warfare. Below is an edited excerpt of our conversation.

Q. What made you first begin thinking about designing software to create ethical robots?

Arkin: I’d been working in robotics for almost 25 years and I noticed the successes that had been happening in the field. Progress had been steady and sure and it started to dawn on me that these systems are ready, willing, and able to begin going out into the battlefield on behalf of our soldiers. Then the question came up–what is the right ethical basis for these systems? How are we going to ensure that they could behave appropriately to the standards we set for our human war fighters?

In 2004, at the first international symposium on roboethics in Sanremo, Italy, we had speakers from the Vatican, the Geneva Conventions, the Pugwash Institute, and it became clear that this was a pressing problem. Trying to view myself as a responsible scientist, I felt it was important to do something about it and that got me embarked on this quest.

Q. What do you mean by an ethical robot? How would a robot feel empathy?

Arkin: I didn’t say it would feel empathy, I said ethical. Empathy is another issue and that is for a different domain. We are talking about battlefield robots in the work I am currently doing. That is not to say I’m not interested in those other questions and I hope to move my research, in the future, in that particular direction. Right now, we are looking at designing systems that can comply with internationally prescribed laws of war and our own codes of conduct and rules of engagement. We’ve decided it is important to embed in these systems the moral emotion of guilt. We use this as a means of downgrading the robot’s ability to engage targets if it is acting in ways which exceed the predicted battle damage in certain circumstances.

Q. You’ve written about a built-in “guilt system.” Is this what you’re talking about?

Arkin: We have incorporated a component called an “ethical adaptor” by studying the models of guilt that human beings have and embedding those within a robotic system. The whole purpose of this is very focused and what makes it tractable is that we’re dealing with something called “bounded morality,” which is understanding the particular limit of the situation that the robot is to operate in. We have thresholds established for analogs of guilt that cause the robot to eventually refuse to use certain classes of weapons systems (or refuse to use weapons entirely) if it gets to a point where the predictions it’s making are unacceptable by its own standards.
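The threshold mechanism Arkin describes can be sketched in a few lines. What follows is a deliberately simplified toy and not the formalism in his published reports. It assumes that guilt grows only when observed damage exceeds the damage predicted before an engagement. It also assumes that each weapon class has its own guilt threshold, and that a class is withdrawn once that threshold is reached. The class name, the weapon labels and the numbers are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class EthicalAdaptorSketch:
    """Toy guilt accumulator (hypothetical; not Arkin's published model).

    Guilt rises by however much observed damage exceeds the pre-engagement
    prediction. Each weapon class has a guilt threshold; once accumulated
    guilt reaches it, that class stays disabled for the rest of the mission.
    """
    thresholds: dict[str, float]  # hypothetical weapon class -> guilt limit
    guilt: float = 0.0
    disabled: set[str] = field(default_factory=set)

    def record_engagement(self, predicted_damage: float, observed_damage: float) -> None:
        # Only damage beyond the prediction counts; meeting or beating it adds nothing.
        excess = max(0.0, observed_damage - predicted_damage)
        self.guilt += excess
        for weapon, limit in self.thresholds.items():
            if self.guilt >= limit:
                self.disabled.add(weapon)

    def permitted(self, weapon: str) -> bool:
        return weapon not in self.disabled


adaptor = EthicalAdaptorSketch(thresholds={"heavy": 1.0, "light": 3.0})
adaptor.record_engagement(predicted_damage=1.0, observed_damage=2.5)
print(adaptor.permitted("heavy"), adaptor.permitted("light"))
```

In this sketch the more destructive weapon classes get lower thresholds, so they are refused first. Sufficient accumulated guilt eventually disables every class, which mirrors "refuse to use certain classes of weapons systems (or refuse to use weapons entirely)."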

Q. You, the engineer, decide the ethics, right?

Arkin: We don’t engineer the ethics; the ethics come from treaties that have been designed by lawyers and philosophers. These have been codified over thousands of years and now exist as international protocol. What we engineer is translating those laws and rules of engagement into actionable items that the robot can understand and work with.

Q. So, right now, you’re working on software for the ethical robot and you have a contract with the U.S. Army, right?

Arkin: We actually just finished, as of (July 1), the three-year project we had for the U.S. Army, which was designing prototype software for the U.S. Army Research Office. This isn’t software that is intended to go into the battlefield anytime soon–it is all proof of concept–we are striving to show that the systems can potentially function with an ethical basis. I believe our prototype design has demonstrated that.

Q. Robot drones like land mine detectors are already used by the military, but are controlled by humans. How would an autonomous robot be different in the battlefield?

Arkin: Drones usually refer to unmanned aero vehicles. Let me make sure we’re talking about the same sort of thing–you’re talking about ground vehicles for detecting improvised explosive devices?

Q. Either one, either air or land.

Arkin: Well, they’d be used in different ways. There are already existing autonomous systems that are either in development or have been deployed by the military. It’s all a question of how you define autonomy. The trip-wire for how we talk about autonomy, in this context, is whether an autonomous system (after detecting a target) can engage that particular target without asking for any further human intervention at that particular point. There is still a human in the loop when we tell a squad of soldiers to go into a building and take it using whatever force is necessary. That is still a high-level command structure, but the soldiers have the ability to engage targets on their own. With the increased battlefield tempo, things are moving much faster than they did 40 or 100 years ago, and it becomes harder for humans to make intelligent, rational decisions. As such, it is my contention that these systems, ultimately, can lead to a reduction in non-combatant fatalities over human level performance. That’s not to say that I don’t have the utmost respect for our war fighters in the battlefield, I most certainly do and I’m committed to providing them with the best technical equipment in support of their efforts as well.

Q. In your writing you say robots can be more humane than humans in the battlefield, can you elaborate on this?

Arkin: Well, I say that’s my thesis, it’s not a conclusion at this point. I don’t believe unmanned systems will be able to be perfectly ethical in the battlefield, but I am convinced (at this point in time) that they can potentially perform more ethically than human soldiers are capable of. I’m talking about wars 10 to 20 years down the field. Much more research and technology has to be developed for this vision to become a reality. But, I believe it’s an important avenue of pursuit for military research. So, if warfare is going to continue and if autonomous systems are ultimately going to be deployed, I believe it is crucial that we have ethical systems in the battlefield. I believe that we can engineer systems that perform better than humans–we already have robots that are stronger than people, faster than people, and if you look at computers like Deep Blue we have robots that can be smarter than people. I’m not talking about replacing a human soldier in all aspects; I’m talking about using these systems in limited circumstances such as counter sniper operations or taking buildings. Under those circumstances we can engineer enough morality into them that they may indeed do better than human beings can–that’s the benchmark I’m working towards.

Q. Ok, what kind of errors could a military robot make in the battlefield in regards to ethical dilemmas?

Arkin: Well, a lot of this has been sharpened by debates with my colleagues in philosophy, computer science, and Computer Professionals for Social Responsibility. There are a lot of things that could potentially go wrong. One of the big questions (much of this is derived from what’s called “just war theory”) is responsibility–if there is a war crime, someone must be to blame. We have worked hard within our system to make sure that responsibility attribution is as clear as possible using a component called the “responsibility advisor.” To me, you can’t say the robot did it; maybe it was the soldier who deployed it, the commanding officer, the manufacturer, the designer, the scientist (such as myself) who conceived of it, or the politicians that allowed this to be used. Somehow, responsibility must be attributed. Another aspect is that technological advancement in the battlefield may make us more likely to enter into war. To me it is not unique to robotics–whenever you create something that gives you any kind of advantage, whether it is gun powder or a bow and arrow, the temptation to go off to war is more likely. Hopefully our nation has the wherewithal to be able to resist such temptation. Some argue it can’t be done right, period–it’s just too hard for machines to discriminate. I would agree it’s too hard to do it now. But with the advent of new sensors and network centric warfare where all this stuff is wired together along with the global information grid, I believe these systems will have more information available to them than any human soldier could possibly process and manage at a given point in time. Thus, they will be able to make better informed decisions. The military is concerned with squad cohesion. What happens to the “band of brothers” effect if you have a robot working alongside a squad of human soldiers, especially if it’s one that might report back on moral infractions it observes with other soldiers in the squad?

My contention is that if a robot can take a bullet for me, stick its head around a corner for me and cover my back better than Joe can, then maybe that is a small risk to take. Secondarily, it can reduce the risk of human infractions in the battlefield by its mere presence. The military may not be happy with a robot with the capability of refusing an order. So we have to design a system that can explain itself. With some reluctance, I have designed an override capability for the system. But the robot will still inform the user on all the potential ethical infractions that it believes it would be making, and thus force the responsibility on the human. Also, when that override is taken, the aberrant action could be sent immediately to command for after-action review.

Q. There is a congressional mandate requiring that by 2010, one third of all operational deep-strike aircraft be unmanned and, by 2015, one third of all ground combat vehicles be unmanned. How soon could we see this autonomous robot software being used in the field?

Arkin: There is a distinction between unmanned systems and autonomous unmanned systems. That’s the interesting thing about autonomy–it’s kind of a slippery slope, decision making can be shared.

First, it can be under pure remote control by a human being. Next, there’s mixed initiative where some of the decision making rests in the robot and some of it rests in the human being. Then there’s semi-autonomy where the robot has certain functions and the human deals with it in a slightly different way. Finally, you can get more autonomous systems where the robot is tasked, it goes out and does its mission, and then returns (not unlike what you would expect from a pilot or a soldier).

The congressional mandate was marching orders for the Pentagon and the Pentagon took it very seriously. It’s not an absolute requirement but significant progress is being made. Some of the systems are far more autonomous than others–for example the PackBot in Iraq is not very autonomous at all. It is used for finding improvised explosive devices by the roadside; many of these robots have saved the lives of our soldiers by taking the explosion on their behalf.

Q. You just came out with a book called “Governing Lethal Behavior in Autonomous Robots.” Do you want to explain in a bit more detail what it’s about?

Arkin: Basically the book covers the space of this three-year project that I just finished. It deals with the basis, motivation, underlying philosophy, and opinions people have based on a survey we did for the Army on the use of lethal autonomous robots in the battlefield. It provides the mathematical formalisms underlying the approach we take and deals with how it is to be represented internally within the robotic system. And, most significantly, it describes several scenarios in which I believe these systems can be used effectively with some preliminary prototype results showing the progress we made in this period.

FLESH-EATING ROBOT STORY COMMENT ANALYSIS

http://www.cracked.com/blog/which-site-has-the-stupidest-commenters-on-the-internet/

“To see how commenter intelligence varies across different sites, I’ve created a scientifical method of analysis. By choosing a single story that multiple sites have reported on–Flesh Eating Robots–I’d be able to observe how different communities respond to the same stimulus.”

from reddit

Pfmohr2: “Once again, this is being taken completely too far, without proper context. Technically the title is not incorrect, but pretty damn close. This thing runs off of a biomass boiler. Now, I’m sure you all understand how a boiler works; heat+water=steam. The steam would be used to power the machine. Now, how does one create heat? Burning things. What is burned? Combustibles aka biomass. Namely wood. If you look at the designs on the claw, there is an attached chainsaw. That is because the main source of fuel for this bad boy will be wood. Technically bodies could be used, but quite frankly they are not going to burn with the consistency and intensity needed to keep that boiler going. I have literally not seen a single link to this on reddit which does not claim this thing will be fueled by bodies; technically possible, but far from the ideal fuel.”

hideogumpa: “As long as the ambulance version puts patient life above hunger, I’m cool with it.”

from the guardian

MartynInEurope: “Trust the yanks to reinvent the goat.”

FOX COVERAGE BEFORE

http://www.foxnews.com/story/0,2933,532492,00.html

“It could be a combination of 19th-century mechanics, 21st-century technology — and a 20th-century horror movie. A Maryland company under contract to the Pentagon is working on a steam-powered robot that would fuel itself by gobbling up whatever organic material it can find — grass, wood, old furniture, even dead bodies. Robotic Technology Inc.’s Energetically Autonomous Tactical Robot — that’s right, “EATR” — “can find, ingest, and extract energy from biomass in the environment (and other organically-based energy sources), as well as use conventional and alternative fuels (such as gasoline, heavy fuel, kerosene, diesel, propane, coal, cooking oil, and solar) when suitable,” reads the company’s Web site. That “biomass” and “other organically-based energy sources” wouldn’t necessarily be limited to plant material — animal and human corpses contain plenty of energy, and they’d be plentiful in a war zone.”

FOX COVERAGE AFTER

http://www.foxnews.com/story/0,2933,533382,00.html

Biomass-Eating Military Robot Is a Vegetarian, Company Says / 7.16.09

“A steam-powered, biomass-eating military robot being designed for the Pentagon is a vegetarian, its maker says. Robotic Technology Inc.’s Energetically Autonomous Tactical Robot — that’s right, “EATR” — “can find, ingest, and extract energy from biomass in the environment (and other organically-based energy sources), as well as use conventional and alternative fuels (such as gasoline, heavy fuel, kerosene, diesel, propane, coal, cooking oil, and solar) when suitable,” reads the company’s Web site. But, contrary to reports, including one that appeared on FOXNews.com, the EATR will not eat animal or human remains. Dr. Bob Finkelstein, president of RTI and a cybernetics expert, said the EATR would be programmed to recognize specific fuel sources and avoid others. “If it’s not on the menu, it’s not going to eat it,” Finkelstein said. “There are certain signatures from different kinds of materials” that would distinguish vegetative biomass from other material.”

RTI said Thursday in a press release: “Despite the far-reaching reports that this includes “human bodies,” the public can be assured that the engine Cyclone (Cyclone Power Technologies Inc.) has developed to power the EATR runs on fuel no scarier than twigs, grass clippings and wood chips — small, plant-based items for which RTI’s robotic technology is designed to forage. Desecration of the dead is a war crime under Article 15 of the Geneva Conventions, and is certainly not something sanctioned by DARPA, Cyclone or RTI.” EATR will be powered by the Waste Heat Engine developed by Cyclone, of Pompano Beach, Fla., which uses an “external combustion chamber” burning up fuel to heat up water in a closed loop, generating electricity. The advantages to the military are that the robot would be extremely flexible in fuel sources and could roam on its own for months, even years, without having to be refueled or serviced. Upon the EATR platform, the Pentagon could build all sorts of things — a transport, an ambulance, a communications center, even a mobile gunship. In press materials, Robotic Technology presents EATR as an essentially benign artificial creature that fills its belly through “foraging,” despite the obvious military purpose.
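Finkelstein's "if it's not on the menu, it's not going to eat it" describes an allow-list, as opposed to a block-list of forbidden materials. The sketch below shows that design choice under stated assumptions. It supposes that some upstream sensor classifier reduces each candidate item to a signature label, or to `None` when it cannot classify the item. RTI has not published its categories, so the labels in `MENU` are hypothetical.

```python
from typing import Optional

# Hypothetical signature labels; RTI's actual material categories are not public.
MENU = frozenset({"wood", "grass", "leaf_litter", "wood_chips"})


def should_ingest(signature: Optional[str]) -> bool:
    """Default-deny: ingest only items whose signature is explicitly on the menu.

    Anything unrecognised, including None for an unclassifiable reading,
    is refused rather than guessed at.
    """
    return signature in MENU


for item in ("wood", "animal_tissue", None):
    print(item, should_ingest(item))
```

An allow-list fails safe. A material the designers never anticipated is refused by default, whereas a block-list would accept it unless someone had thought to forbid it in advance.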

FORMAL DENIAL, UNRELATED

http://news.bbc.co.uk/2/hi/middle_east/6295138.stm

British blamed for Basra badgers

British forces have denied rumours that they released a plague of ferocious badgers into the Iraqi city of Basra. Word spread among the populace that UK troops had introduced strange man-eating, bear-like beasts into the area to sow panic. But several of the creatures, caught and killed by local farmers, have been identified by experts as honey badgers. The rumours spread because the animals had appeared near the British base at Basra airport. UK military spokesman Major Mike Shearer said: “We can categorically state that we have not released man-eating badgers into the area. We have been told these are indigenous nocturnal carnivores that don’t attack humans unless cornered.”

The director of Basra’s veterinary hospital, Mushtaq Abdul-Mahdi, has inspected several of the animals’ corpses. He told the AFP news agency: “These appeared before the fall of the regime in 1986. They are known locally as Al-Girta. Talk that this animal was brought by the British forces is incorrect and unscientific.” But the assurances did little to convince some members of the public. One housewife, Suad Hassan, 30, claimed she had been attacked by one of the badgers as she slept. “My husband hurried to shoot it but it was as swift as a deer,” she said. “It is the size of a dog but his head is like a monkey,” she told AFP.