3D DRIFT + SHIFT

https://arxiv.org/abs/1903.07603

https://arxiv.org/abs/2206.01156

https://lamiyamowla.com/3d-dash/explorer

https://yahoo.com/trick-allows-hubble-infrared-image-of-sky

by Phil Plait / June 16, 2022

“Astronomers employed a relatively new technique to create a highly detailed infrared mosaic of a patch of sky an impressive six times larger than the full Moon. This is the largest infrared field ever observed by Hubble, and likely won’t even be surpassed by JWST. The survey is called 3D-DASH, which stands for Three Dimensional Drift And Shift.

The “3D” part comes from the survey using the Wide Field Camera 3 on Hubble, which gives a two-dimensional map of a galaxy, and also using a grism, an optical element that spreads the galaxy’s light into a spectrum that yields its distance via redshift, giving the third dimension. The drift-and-shift part is very clever.

From this moment on you will always be able to tell the difference between a Hubble image and a JWST image:

Hubble stars have four spikes in a cross. JWST stars have six in a snowflake. Thank you for your time. pic.twitter.com/BWsv2WqCqD

— Hank Green (@hankgreen) July 12, 2022

One of Hubble’s superpowers is its high resolution, its ability to see very small details in an object. To do this, it uses its Fine Guidance Sensors to lock onto a guide star for every observation. These generate extremely precise pointing measurements, and if the telescope drifts, the sensors see that and tell the three gyroscopes to compensate for it. That’s time-consuming, though; it can take up to 10 minutes to lock onto a star, and that has to be done every time you move the telescope to a new spot on the sky. Worse, the rules for using Hubble allow only two such guide star searches per 90-minute orbit, so constantly finding guide stars would eat up the precious time you need for actual observations.

3D-Drift And SHift (3D-DASH), the widest Near-Infrared Hubble Space Telescope survey: https://t.co/pOAdZ4FR9u -> https://t.co/HtpYO16sHx and https://t.co/1YbC86reS8

— Daniel Fischer @cosmos4u@scicomm.xyz (@cosmos4u) June 7, 2022

DASH throws all that out. This technique basically shrugs its shoulders and says, ‘Hey, our resolution is so incredibly good already that a little drift won’t kill us,’ and uses just one guide star acquisition per orbit. After that it simply lets the ’scope drift. Because it takes lots of short exposures, the drift doesn’t hurt much, and afterwards the observations can be combined in a way that recovers a lot of the resolution. It sacrifices a little resolution to save a lot of time and cover a lot more sky. Like a lot a lot.
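To make the drift-and-combine idea concrete, here is a toy shift-and-add in Python with NumPy. It is a minimal sketch under stated assumptions, not the 3D-DASH pipeline: the synthetic star field, the drift values and the function names are all made up, the drift between short exposures is assumed to be a whole number of pixels, and each frame's offset is estimated from the peak of an FFT cross-correlation with the first frame before the frames are averaged. A real reduction would also need sub-pixel registration and resampling onto a common grid.

```python
import numpy as np


def star_field(shape=(64, 64), stars=((20, 30, 100.0), (45, 12, 60.0)), sigma=1.5):
    """Synthetic sky: Gaussian 'stars' given as (row, col, peak brightness)."""
    yy, xx = np.indices(shape)
    img = np.zeros(shape)
    for r, c, peak in stars:
        img += peak * np.exp(-((yy - r) ** 2 + (xx - c) ** 2) / (2 * sigma ** 2))
    return img


def estimate_shift(ref, img):
    """Whole-pixel (dy, dx) such that np.roll(img, (dy, dx), axis=(0, 1)) lines up with ref."""
    xcorr = np.fft.ifft2(np.fft.fft2(ref) * np.conj(np.fft.fft2(img))).real
    dy, dx = np.unravel_index(np.argmax(xcorr), xcorr.shape)
    ny, nx = ref.shape
    # Indices past the midpoint wrap around to negative shifts.
    dy = dy - ny if dy > ny // 2 else dy
    dx = dx - nx if dx > nx // 2 else dx
    return int(dy), int(dx)


def shift_and_add(frames):
    """Align every short exposure to the first one, then average the stack."""
    ref = frames[0]
    shifts = [estimate_shift(ref, f) for f in frames]
    aligned = [np.roll(f, s, axis=(0, 1)) for f, s in zip(frames, shifts)]
    return np.mean(aligned, axis=0), shifts


# Simulate a telescope drifting a few pixels between noisy short exposures.
rng = np.random.default_rng(0)
truth = star_field()
drift = [(0, 0), (1, 0), (2, 1), (3, 1), (4, 2)]
frames = [np.roll(truth, d, axis=(0, 1)) + rng.normal(0, 1.0, truth.shape) for d in drift]

stacked, shifts = shift_and_add(frames)
naive = np.mean(frames, axis=0)  # co-adding without alignment smears the stars
print(shifts)
print(round(naive.max(), 1), round(stacked.max(), 1))
```

Averaging the unaligned frames spreads each star along the drift path, so its peak comes out lower than in the aligned stack; the re-alignment step is what recovers it.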

An approximate side-by-side comparison 3D-DASH+CANDELS versus DESI Legacy Imaging Surveys.

The strongly lensed system only appears in the infrared! https://t.co/eVO35AAI56 pic.twitter.com/ZMb1Fny62E

— John F Wu (@jwuphysics) June 3, 2022

The biggest infrared survey Hubble had previously done was CANDELS, which covered 0.2 square degrees, roughly the size of the full Moon on the sky, and took 900 Hubble orbits to complete. 3D-DASH is six times bigger, and took only about 200 orbits. They got about 1,200 Wide Field Camera 3 pointings in that time, all in the near-infrared at a wavelength of about 1.6 microns, well outside what the human eye can see.
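The time savings can be checked with the round numbers in the paragraph above. This sketch compares sky area per orbit only and ignores depth, so it is not a like-for-like comparison of survey quality.

```python
# Round figures from the text; "six times bigger" is taken literally.
candels_area_deg2 = 0.2
candels_orbits = 900
dash_area_deg2 = 6 * candels_area_deg2
dash_orbits = 200

candels_rate = candels_area_deg2 / candels_orbits  # square degrees per orbit
dash_rate = dash_area_deg2 / dash_orbits
speedup = dash_rate / candels_rate

print(f"CANDELS: {candels_rate:.5f} deg^2 per orbit")
print(f"3D-DASH: {dash_rate:.5f} deg^2 per orbit")
print(f"Area covered per orbit: about {speedup:.0f}x more")
```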

I made a tool to compare Webb's new images to Hubble! https://t.co/1JqTxabgGC#JamesWebbSpaceTelescope pic.twitter.com/2wRO8t0LXD

— John Christensen (@JohnnyC1423) July 12, 2022

There’s an online interactive explorer you can use to peruse the observations if you want to see what they look like. Pretty cool. Other surveys go deeper (meaning they used longer exposures to see fainter objects) but are smaller, what we sometimes call “pencil beam” surveys. So 3D-DASH trades going deep for going big, but even so it gets down to objects so faint that the dimmest star you can see by eye is still about 100 million times brighter. I’ll note that the grism observations that complement the imaging are being executed now, and will take some time to process.
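Astronomers usually express brightness ratios in magnitudes, where every factor of 100 in brightness is 5 magnitudes. As a quick sketch, the “100 million times brighter” figure converts like this:

```python
import math


def magnitude_difference(flux_ratio):
    """Magnitude gap for a brightness ratio: 2.5 * log10(ratio), so 100x is 5 magnitudes."""
    return 2.5 * math.log10(flux_ratio)


print(magnitude_difference(100))          # 5.0
print(magnitude_difference(100_000_000))  # 20.0
```

So, on the article's figure, 3D-DASH reaches objects roughly 20 magnitudes fainter than the naked-eye limit.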

So why do this? This particular survey was done to look for galaxies more than roughly 10 billion light years away. Due to the expansion of the Universe, a lot of the interesting stuff those galaxies do has its light redshifted into the infrared, so if you want to see those things you need to see faint objects at those wavelengths.
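The redshift argument comes down to one relation: cosmological redshift z stretches every wavelength by a factor of (1 + z). The sketch below uses the 4000-angstrom break, a spectral feature of galaxies with older stars, as an example of our own choosing (the article does not name a feature), and asks what redshift carries it into the 1.6-micron band that 3D-DASH observes.

```python
def observed_wavelength(rest_um, z):
    """Wavelength we receive, in microns, for light emitted at rest_um by a source at redshift z."""
    return rest_um * (1 + z)


def redshift_needed(rest_um, observed_um):
    """Redshift that moves a rest-frame wavelength to the observed one."""
    return observed_um / rest_um - 1


BREAK_UM = 0.4  # the 4000-angstrom break, expressed in microns (illustrative choice)
for z in (1, 2, 3):
    print(f"z = {z}: break observed at {observed_wavelength(BREAK_UM, z):.2f} microns")
print(f"Redshift that puts it at 1.6 microns: {redshift_needed(BREAK_UM, 1.6):.1f}")
```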

Also, the 3D-DASH data can be added to observations taken earlier in the near-infrared, roughly 0.8 micron wavelengths, which can add more information about what kinds of stars are in these galaxies. Surveys are important because the bigger they are, the more likely they are to capture rare things.

New HLSP: 3D-DASH (Mowla et al. 2022, incl. @iva_momcheva, @astrowhit, @gbrammer, @DokkumPieter). The widest HST/WFC3 imaging survey in the F160W filter ever, covering 1.43 square degrees and 1256 individual WFC3 pointings in the COSMOS field.https://t.co/Jn9OhKrv6p pic.twitter.com/VzxJH2F8ct

— MAST (@MAST_News) June 6, 2022

Pointing Hubble, or any ’scope, at one galaxy or a hundred will tell you a lot, but those specific pointings are unlikely to catch anything rare just by chance. One purpose of 3D-DASH was to hopefully find extremely massive galaxies which existed when the Universe was young. Those are extremely rare, and if you want to discover them your best bet is to cover as much sky as possible as quickly as possible.
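The point about rare objects can be made quantitative with Poisson statistics: if objects are scattered at random with an average density n per square degree, a survey of area A contains at least one with probability 1 − exp(−nA). In this sketch the density is purely hypothetical; the two areas are the CANDELS figure and six times that, from the text.

```python
import math


def chance_of_at_least_one(density_per_deg2, area_deg2):
    """Poisson probability that a survey of the given area contains at least one
    object from a population scattered at random with the given average density."""
    expected_count = density_per_deg2 * area_deg2
    return 1 - math.exp(-expected_count)


DENSITY = 1.0  # hypothetical rare population: 1 object per square degree on average
for area in (0.2, 1.2):  # a CANDELS-sized area and one six times larger
    p = chance_of_at_least_one(DENSITY, area)
    print(f"{area:.1f} deg^2: {p:.0%} chance of at least one")
```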

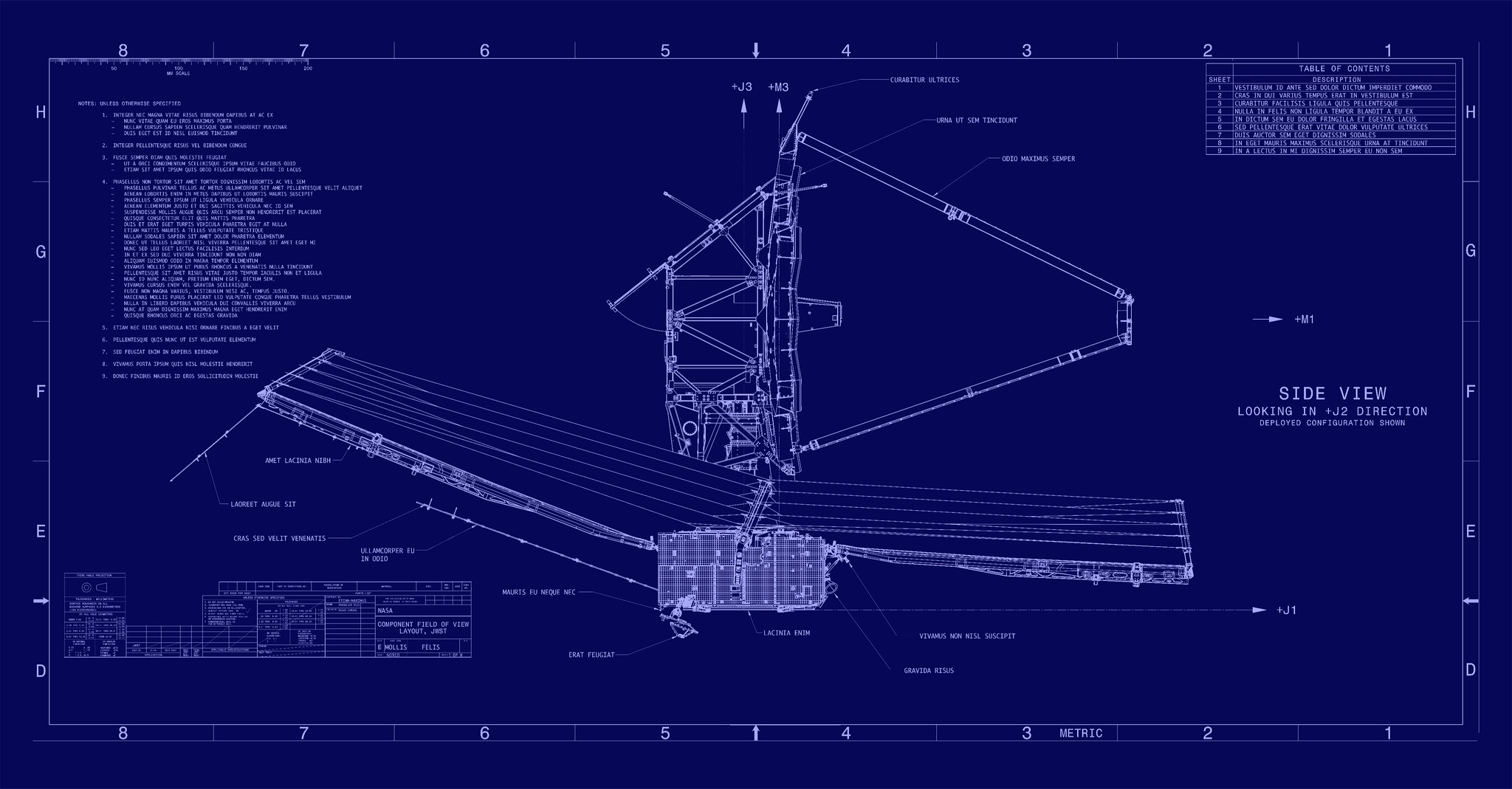

Then, if and when you spot one, you can point the telescope at it specifically to learn more. We know these galaxies grow by eating other, smaller galaxies (we see this happening today, and even our Milky Way is currently cannibalistically consuming a number of small galaxies), but the details of how this worked billions of years ago aren’t well known.

When the James Webb Space Telescope (JWST) reveals its first images on 12 July, they will be the by-product of carefully crafted mirrors and scientific instruments. But all of its data-collecting prowess would be moot without the spacecraft’s communications subsystem.

The Webb’s comms aren’t flashy. Rather, the data and communication systems are designed to be incredibly, unquestionably dependable and reliable. And while some aspects of them are relatively new—it’s the first mission to use Ka-band frequencies for such high data rates so far from Earth, for example—above all else, JWST’s comms provide the foundation upon which JWST’s scientific endeavors sit.

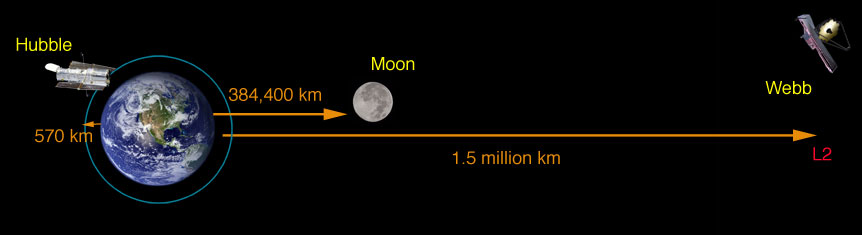

As previous articles in this series have noted, JWST is parked at Lagrange point L2, a point of gravitational equilibrium about 1.5 million kilometers from Earth, on the side facing away from the sun along the line that runs through the sun and Earth. It’s an ideal location for JWST to observe the universe without obstruction and with minimal orbital adjustments. Being so far away from Earth, however, means that data has farther to travel to make it back in one piece. It also means the communications subsystem needs to be reliable, because the prospect of a repair mission being sent to address a problem is, for the near term at least, highly unlikely.
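For a small planet orbiting a much more massive star, the L2 distance has a handy first-order approximation, r ≈ a(m/3M)^(1/3), where a is the planet's orbital radius and m/M the planet-to-star mass ratio. The sketch below plugs in approximate values for the Sun and the Earth-Moon system and also computes the one-way light delay. JWST actually loops around L2 on a large orbit rather than sitting exactly at the point, so its real distance varies.

```python
AU_KM = 149_597_870.7               # mean Sun-Earth distance, km
EARTH_MOON_OVER_SUN_MASS = 3.04e-6  # (Earth + Moon) / Sun mass ratio, approximate
C_KM_S = 299_792.458                # speed of light, km/s


def l2_distance_km(a_km=AU_KM, mass_ratio=EARTH_MOON_OVER_SUN_MASS):
    """First-order estimate of the Earth-to-L2 distance: r ~ a * (m / (3 M))**(1/3).
    Only valid when the planet is much less massive than the star."""
    return a_km * (mass_ratio / 3) ** (1 / 3)


r_km = l2_distance_km()
light_time_s = r_km / C_KM_S
print(f"Earth to L2: about {r_km / 1e6:.2f} million km")
print(f"One-way light time: about {light_time_s:.1f} s")
```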

Given the cost and time involved, says Michael Menzel, the mission systems engineer for JWST, “I would not encourage a rendezvous and servicing mission unless something went wildly wrong.” According to Menzel, who has worked on JWST in some capacity for over 20 years, the plan has always been to use well-understood Ka-band frequencies for the bulky transmissions of scientific data. Specifically, JWST is transmitting data back to Earth on a 25.9-gigahertz channel at up to 28 megabits per second. The Ka-band is a portion of the broader K-band (another portion, the Ku-band, was also considered).

CARINA NEBULA

Hubble / James Webb pic.twitter.com/KT2aN2Imyz

— Giovanna Liberato (@liberato_gio) July 12, 2022

3D-DASH has a good chance of helping astronomers understand this better, at a small cost in time to Hubble. And the James Webb Space Telescope may not be able to match the size of 3D-DASH. It’s designed to look at specific things, not take surveys. The fields of view of its cameras are relatively small, so sweeping the ‘scope around to mosaic big fields is not likely to happen. It would take too much time, when there are thousands of other things it could be doing. So this is all pretty wonderful! I think it’s great that we can still find new ways to use Hubble, which has been observing the heavens for over 32 years now. Turns out you can teach an old dog new tricks, as long as you don’t mind it drifting off a bit.”

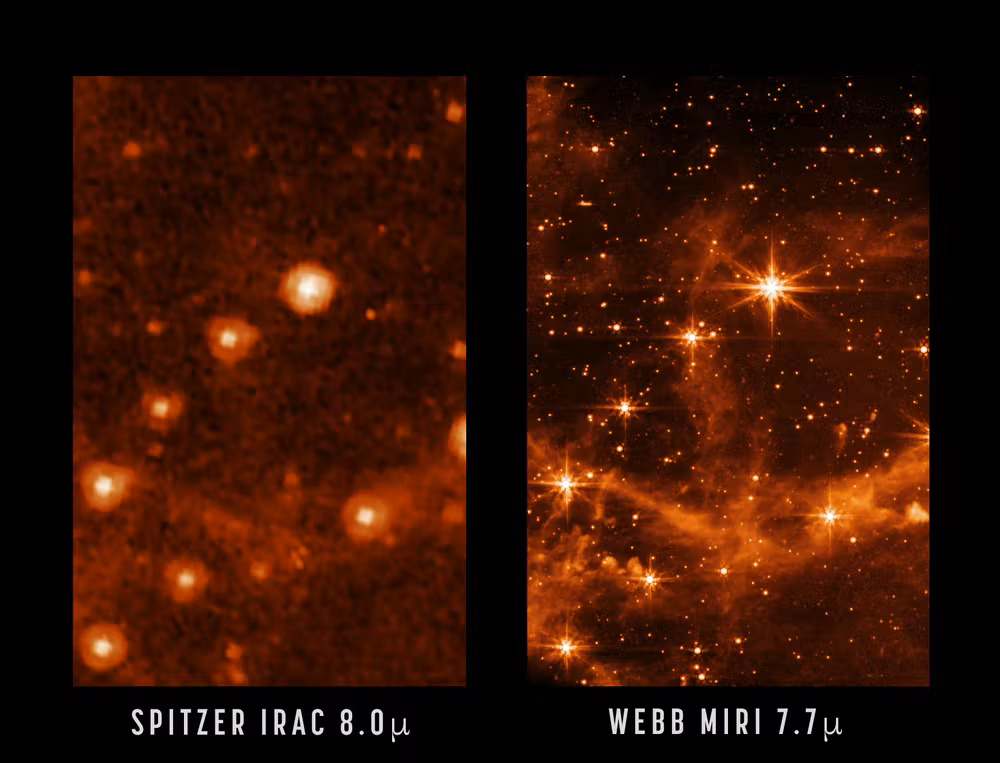

“The MIRI camera, image on the right, allows astronomers to see through dust clouds with incredible sharpness compared with previous telescopes like the Spitzer Space Telescope, which produced the image on the left.”

COSMIC DISTANCE

https://web.wwtassets.org/specials/2022/jwst-release

https://indd.adobe.com/view/19a757cb-14fe-49f2-b2f9

https://ui.adsabs.harvard.edu/abs/2006hst..prop10802R/abstract

https://phys.org/news/2019-04-hubble-universe-faster.html

Mystery of universe’s expansion rate widens with new Hubble data

by Rob Garner, Goddard Space Flight Center / April 25, 2019

“Astronomers using NASA’s Hubble Space Telescope say they have crossed an important threshold in revealing a discrepancy between the two key techniques for measuring the universe’s expansion rate. The recent study strengthens the case that new theories may be needed to explain the forces that have shaped the cosmos. A brief recap: The universe is getting bigger every second. The space between galaxies is stretching, like dough rising in the oven. But how fast is the universe expanding? As Hubble and other telescopes seek to answer this question, they have run into an intriguing difference between what scientists predict and what they observe.

Welcome to a star-formation laboratory! ⭐

In this #HubbleClassic view, galaxy NGC 4214 is ablaze with young stars and gas clouds.

The galaxy contains stars in many evolutionary stages, making it an ideal lab to research star formation & evolution: https://t.co/OLWKzel68F pic.twitter.com/3vk8g95gw2

— Hubble (@NASAHubble) July 11, 2022

Hubble measurements suggest a faster expansion rate in the modern universe than expected, based on how the universe appeared more than 13 billion years ago. These measurements of the early universe come from the European Space Agency’s Planck satellite. This discrepancy has been identified in scientific papers over the last several years, but it has been unclear whether differences in measurement techniques are to blame, or whether the difference could result from unlucky measurements. The latest Hubble data lower the possibility that the discrepancy is only a fluke to 1 in 100,000. This is a significant gain from an earlier estimate, less than a year ago, of a chance of 1 in 3,000.
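Odds like these are often restated as a significance in standard deviations (“sigma”). Here is a sketch of the conversion using Python's standard library, assuming a two-sided Gaussian test; the article does not say which convention the team used, and a one-sided test maps the same odds to somewhat smaller values.

```python
from statistics import NormalDist


def odds_to_sigma(one_in):
    """Convert 'a 1-in-N chance of a fluke' to a Gaussian significance,
    assuming a two-sided test (a fluctuation in either direction counts)."""
    p = 1 / one_in
    return NormalDist().inv_cdf(1 - p / 2)


for n in (3_000, 100_000):
    print(f"1 in {n:,}: about {odds_to_sigma(n):.1f} sigma")
```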

Webb's mosaic is its largest image to date, covering an area of the sky 1/5 of the Moon’s diameter (as seen from Earth). It contains more than 150 million pixels and is constructed from about 1,000 image files. Compare the new image to @NASAHubble’s 2009 view, shown here! pic.twitter.com/SbulK1GIjN

— NASA Webb Telescope (@NASAWebb) July 12, 2022

These most precise Hubble measurements to date bolster the idea that new physics may be needed to explain the mismatch. “The Hubble tension between the early and late universe may be the most exciting development in cosmology in decades,” said lead researcher and Nobel laureate Adam Riess of the Space Telescope Science Institute (STScI) and Johns Hopkins University, in Baltimore, Maryland. “This mismatch has been growing and has now reached a point that is really impossible to dismiss as a fluke. This disparity could not plausibly occur just by chance.”

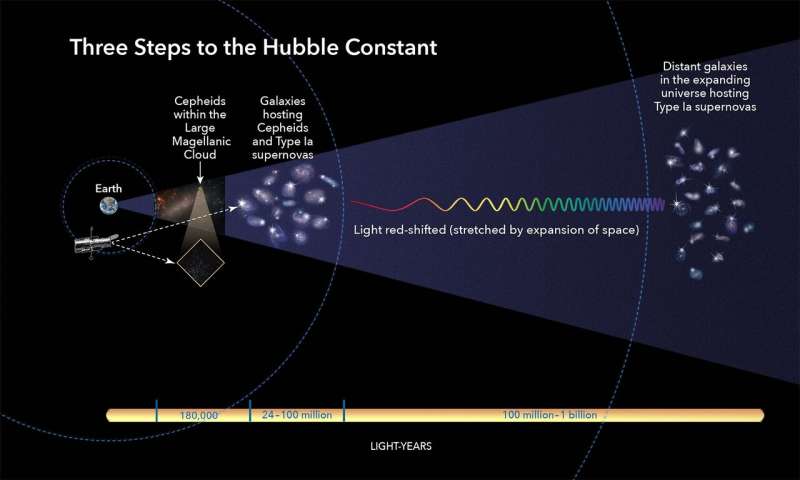

“This illustration shows the three basic steps astronomers use to calculate how fast the universe expands over time, a value called the Hubble constant.”


Scientists use a “cosmic distance ladder” to determine how far away things are in the universe. This method depends on making accurate measurements of distances to nearby galaxies and then moving to galaxies farther and farther away, using their stars as milepost markers. Astronomers use these values, along with other measurements of the galaxies’ light that reddens as it passes through a stretching universe, to calculate how fast the cosmos expands with time, a value known as the Hubble constant.
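The ladder logic can be sketched with the distance modulus, m − M = 5 log10(d / 10 pc), where m is apparent and M absolute magnitude. A nearby calibrator with a geometric distance fixes M; the same kind of object farther away then gets a distance from its apparent brightness; and dividing its recession velocity by that distance gives the Hubble constant. All numbers below are hypothetical, chosen only so the chain lands near the article's value, and the real SH0ES analysis (Cepheids calibrating Type Ia supernovae, plus corrections for dust and more) is far more involved.

```python
import math


def absolute_magnitude(apparent_mag, distance_pc):
    """Rung 1: calibrate a standard candle whose distance is known geometrically."""
    return apparent_mag - 5 * math.log10(distance_pc / 10)


def distance_from_magnitudes(apparent_mag, absolute_mag):
    """Rung 2: invert the distance modulus m - M = 5 log10(d / 10 pc); returns parsecs."""
    return 10 ** ((apparent_mag - absolute_mag + 5) / 5)


def hubble_constant(velocity_km_s, dist_pc):
    """Rung 3: H0 = v / d, with d converted to megaparsecs."""
    return velocity_km_s / (dist_pc / 1e6)


# Hypothetical inputs, for illustration only.
M = absolute_magnitude(apparent_mag=13.5, distance_pc=50_000)       # nearby calibrator
d = distance_from_magnitudes(apparent_mag=28.0, absolute_mag=M)      # same kind of object, far away
h0 = hubble_constant(velocity_km_s=2_939, dist_pc=d)
print(f"M = {M:.2f}, d = {d / 1e6:.1f} Mpc, H0 = {h0:.1f} km/s/Mpc")
```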

Riess and his SH0ES (Supernovae H0 for the Equation of State) team have been on a quest since 2005 to refine those distance measurements with Hubble and fine-tune the Hubble constant. In this new study, astronomers used Hubble to observe 70 pulsating stars called Cepheid variables in the Large Magellanic Cloud. The observations helped the astronomers “rebuild” the distance ladder by improving the comparison between those Cepheids and their more distant cousins in the galactic hosts of supernovas.

We now know what a galaxy THIRTEEN BILLION LIGHTYEARS AWAY is made of#JWST #UnfoldTheUniverse pic.twitter.com/mTdL5lEAgg

— Paul Byrne (@ThePlanetaryGuy) July 12, 2022

Riess’s team reduced the uncertainty in their Hubble constant value to 1.9% from an earlier estimate of 2.2%. As the team’s measurements have become more precise, their calculation of the Hubble constant has remained at odds with the expected value derived from observations of the early universe’s expansion. Those measurements were made by Planck, which maps the cosmic microwave background, a relic afterglow from 380,000 years after the big bang.

Why do some of the galaxies in this image appear bent? The combined mass of this galaxy cluster acts as a “gravitational lens,” bending light rays from more distant galaxies behind it, magnifying them. The light from the farthest galaxy here traveled 13.1 billion years to us. pic.twitter.com/XaZkngQqvg

— NASA Webb Telescope (@NASAWebb) July 12, 2022

The measurements have been thoroughly vetted, so astronomers cannot currently dismiss the gap between the two results as due to an error in any single measurement or method. Both values have been tested multiple ways. “This is not just two experiments disagreeing,” Riess explained. “We are measuring something fundamentally different. One is a measurement of how fast the universe is expanding today, as we see it. The other is a prediction based on the physics of the early universe and on measurements of how fast it ought to be expanding. If these values don’t agree, there becomes a very strong likelihood that we’re missing something in the cosmological model that connects the two eras.”

Are you going to give a presentation about #JWST?

In addition to the pretty pictures, today we released a beautiful slide deck about JWST science and the first images. Each slide also has extensive presenter notes and resources. https://t.co/OAg7LjWGLD pic.twitter.com/XwnJwAKSf5

— Kelly Lepo – @kellylepo@astrodon.social (@KellyLepo) July 12, 2022

Astronomers have been using Cepheid variables as cosmic yardsticks to gauge nearby intergalactic distances for more than a century. But trying to harvest a bunch of these stars was so time-consuming as to be nearly unachievable. So, the team employed a clever new method, called DASH (Drift And Shift), using Hubble as a “point-and-shoot” camera to snap quick images of the extremely bright pulsating stars, which eliminates the time-consuming need for precise pointing. “When Hubble uses precise pointing by locking onto guide stars, it can only observe one Cepheid during each 90-minute Hubble orbit around Earth. So, it would be very costly for the telescope to observe each Cepheid,” explained team member Stefano Casertano, also of STScI and Johns Hopkins. “Instead, we searched for groups of Cepheids close enough to each other that we could move between them without recalibrating the telescope pointing. These Cepheids are so bright, we only need to observe them for two seconds. This technique is allowing us to observe a dozen Cepheids for the duration of one orbit. So, we stay on gyroscope control and keep ‘DASHing’ around very fast.”

From the sliver of a shadow of a planet passing in front of its star 1,150 lightyears away, JWST was able to find signs of water vapor and other gases.

This telescope is putting us on a fast track to finding a habitable exoplanet in our stellar neighborhood. How exciting! pic.twitter.com/ai8WAxUzVQ

— Kurzgesagt (@Kurz_Gesagt) July 12, 2022
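Casertano's description suggests a grouping problem: cluster targets so that each group can be visited without re-acquiring guide stars. The sketch below uses a simple greedy rule; it is our own illustration, not the team's planning software, and the coordinates, the flat-sky approximation and the max_sep threshold are all hypothetical.

```python
import math


def group_targets(positions, max_sep):
    """Greedy grouping: seed a group with the first unassigned target, then add every
    unassigned target within max_sep of that seed. Members of a group are therefore
    at most 2 * max_sep apart from each other."""
    unassigned = list(range(len(positions)))
    groups = []
    while unassigned:
        seed = unassigned.pop(0)
        group = [seed]
        for idx in list(unassigned):  # iterate over a copy so we can remove safely
            if math.dist(positions[seed], positions[idx]) <= max_sep:
                group.append(idx)
                unassigned.remove(idx)
        groups.append(group)
    return groups


# Hypothetical targets: (x, y) offsets on a small, flat patch of sky, in arcminutes.
cepheids = [(0.0, 0.0), (0.5, 0.0), (10.0, 10.0), (10.2, 10.1), (0.0, 0.9)]
groups = group_targets(cepheids, max_sep=1.0)
print(groups)
print(f"{len(cepheids)} targets in {len(groups)} visits")
```

A greedy rule like this is fast but not optimal; a real scheduler would also weigh slew time, exposure order and orbit visibility.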

The Hubble astronomers then combined their result with another set of observations, made by the Araucaria Project, a collaboration between astronomers from institutions in Chile, the U.S., and Europe. This group made distance measurements to the Large Magellanic Cloud by observing the dimming of light as one star passes in front of its partner in eclipsing binary-star systems. The combined measurements helped the SH0ES Team refine the Cepheids’ true brightness. With this more accurate result, the team could then “tighten the bolts” of the rest of the distance ladder that extends deeper into space.

The new estimate of the Hubble constant is 74 kilometers (46 miles) per second per megaparsec. This means that for every 3.3 million light-years farther away a galaxy is from us, it appears to be moving 74 kilometers (46 miles) per second faster, as a result of the expansion of the universe. The number indicates that the universe is expanding at a 9% faster rate than the prediction of 67 kilometers (41.6 miles) per second per megaparsec, which comes from Planck’s observations of the early universe, coupled with our present understanding of the universe.
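The unit conversions in the paragraph above are easy to check. A megaparsec is about 3.26 million light-years (rounded to 3.3 in the text), and Hubble's law gives recession speed as v = H0 × d. A sketch:

```python
LY_PER_MPC = 3.2616e6   # light-years in one megaparsec, approximate
KM_PER_MILE = 1.609344


def recession_speed_km_s(distance_mpc, h0=74.0):
    """Hubble's law: v = H0 * d, with H0 in km/s/Mpc and d in Mpc."""
    return h0 * distance_mpc


print(f"1 Mpc is about {LY_PER_MPC / 1e6:.1f} million light-years")
for h0 in (74.0, 67.0):
    print(f"H0 = {h0:.0f} km/s/Mpc is {h0 / KM_PER_MILE:.1f} mi/s/Mpc")
# A galaxy 100 Mpc away under each value of H0:
print(recession_speed_km_s(100), recession_speed_km_s(100, h0=67.0))
```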

The Sombrero Galaxy

credit: Hubble

More: https://t.co/GvCgpvdpix pic.twitter.com/P3UKZQNpjw

— Black Hole (@konstructivizm) July 11, 2022

One explanation for the mismatch involves an unexpected appearance, in the young universe, of dark energy, which is thought to now make up about 70% of the universe’s contents. Proposed by astronomers at Johns Hopkins, the theory is dubbed “early dark energy,” and suggests that the universe evolved like a three-act play. Astronomers have already hypothesized that dark energy existed during the first seconds after the big bang and pushed matter throughout space, starting the initial expansion. Dark energy may also be the reason for the universe’s accelerated expansion today. The new theory suggests that there was a third dark-energy episode not long after the big bang, which expanded the universe faster than astronomers had predicted. The existence of this “early dark energy” could account for the tension between the two Hubble constant values, Riess said.

Saturn in UV

Source: Hubble/ESA pic.twitter.com/llPSzkMJzX

— Black Hole (@konstructivizm) July 3, 2022

Another idea is that the universe contains a new subatomic particle that travels close to the speed of light. Such speedy particles are collectively called “dark radiation” and include previously known particles like neutrinos, which are created in nuclear reactions and radioactive decays. Yet another attractive possibility is that dark matter (an invisible form of matter not made up of protons, neutrons, and electrons) interacts more strongly with normal matter or radiation than previously assumed.

Hubble finds Hourglass Nebula looking back

Credit: NASA/ESA pic.twitter.com/b2psutwLSq

— Black Hole (@konstructivizm) July 10, 2022

But the true explanation is still a mystery. Riess doesn’t have an answer to this vexing problem, but his team will continue to use Hubble to reduce the uncertainties in the Hubble constant. Their goal is to decrease the uncertainty to 1%, which should help astronomers identify the cause of the discrepancy. The team’s results have been accepted for publication in The Astrophysical Journal.”

“An STS-125 crew member onboard the space shuttle Atlantis snaps a still photo of the Hubble Space Telescope following grapple of the giant observatory by the shuttle.”

RIGHT to REPAIR

https://bintel.com.au/narrowband-preview-tool/

https://space.com/james-webb-space-telescope-science-ready

https://iopscience.iop.org/article/10.1088/1538-3873/129/971/015004/meta

https://npr.org/hubble-telescope-still-works-great-except-when-it-doesnt

Hubble Space Telescope Still Works Great, Except When It Doesn’t

by Nell Greenfieldboyce / September 7, 2020

“Mike Brown has been using the Hubble Space Telescope pretty consistently for most of the past three decades since it launched in 1990. But recently he had an experience with Hubble that he never had before. Brown, an astronomer at the California Institute of Technology, got permission to use Hubble to do a detailed study of Jupiter’s four largest moons. These moons are called the Galilean moons after Galileo Galilei, who spotted them in 1610. They include: Ganymede, which is bigger than the planet Mercury and has a mysterious magnetic field; Io, which is the most volcanically active place in the solar system; Europa, which has more liquid water than Earth; and Callisto, which is kind of a simple ol’ cratered moon.

I just tried to observed Ganymede with the Hubble Space Telescope and the data look like empty sky. Did someone kill Ganymede before I could get to it? I am going to be very angry. I guess it also could be an HST pointing failure. But, given 2020, I expect it was destruction.

— Mike Brown does not X (@plutokiller) August 27, 2020

After Hubble was supposed to have checked out Ganymede, the data got beamed down, processed and sent to Brown by email. He eagerly opened it up. There was nothing there. He immediately thought to himself, “What did I screw up this time?” which is, as he puts it, “Pretty much what you always do as a scientist, when you see something that didn’t work.” He checked and rechecked the instructions he sent to Hubble. They were fine. Nonetheless, it turns out that Hubble had been pointing at the wrong patch of sky. Brown says this kind of error quickly happened two more times as he tried to survey Jupiter’s moons. “I don’t know if three times in a week is unusual or not, but it seems pretty unusual to me,” Brown says.

“The Hubble Space Telescope floats in space after its release from the space shuttle’s robotic arm following a servicing mission in March 2002.”

Tom Brown, head of the Hubble mission office at the Space Telescope Science Institute in Baltimore — and no relation to Mike Brown — says that Hubble does indeed just sometimes aim in the wrong direction. “It used to happen on the order of about 1% of the time,” he says. “These days, it happens more like 5% of the time.” This is an aging telescope after all. Back in 2018, when a gyroscope on Hubble failed, researchers activated one of its on-board spares — the so-called gyroscope 3. It’s been glitchy from the get-go. “It tells you the telescope is moving around even when it’s not,” Brown says.

Hubble Space Telescope video footage of Jupiter's largest moon Ganymede passing behind Jupiter! Surprisingly not CGI: it's made from 540 images taken over 2hours during April 2007. Credit: NASA, ESA, E. Karkoschka (U. Arizona), G. Bacon (STScI) pic.twitter.com/AIulIgvWJk

— Dr James O'Donoghue (@physicsJ) August 23, 2020

Telescope operators compensate for this error, but sometimes it gets out of whack before they’re able to adjust things. Disappointed researchers can submit a request for a do-over, and they’ll generally get their data eventually, assuming they weren’t trying to see some brief, once-in-a-million-years cosmic event. No one really knows why gyroscope 3 is such a pain, Brown says, and conceivably, it could get so bad that they might have to turn that one off. “The biggest downside then is, instead of having the entire sky available at any one time, we would have half the sky available at any one time,” he says. Still, Hubble remains enormously popular. Hundreds of teams get to use the telescope every year.

They are the lucky ones, because there is so much demand that the majority of proposed observations have to be rejected. Hubble is being used for fields that didn’t even exist when it was launched, such as studying planets that orbit distant stars. “Hubble really is a very unique resource for humanity. And once it’s gone, I mean, a lot of people are already dreading that day, but I think when it’s gone, it’s going to hit people … hard,” Brown says. Engineers estimate that Hubble could keep going for at least another five years and probably longer. NASA has another big space telescope called the James Webb in the works, but it’s not exactly like Hubble, and it has experienced multiple delays and huge cost overruns. It won’t launch before late next year.”

FAR OUT

https://spectrum.ieee.org/james-webb-telescope-communications

https://blogs.nasa.gov/modes-to-discovery-webbs-commissioning-activities

https://scientificamerican.com/how-taking-pictures-of-nothing-changed-astronomy

https://techcrunch.com/how-jwst-sends-images-to-earth

How Webb sends hundred-megapixel images a million miles back to Earth

by Devin Coldewey / July 12, 2022

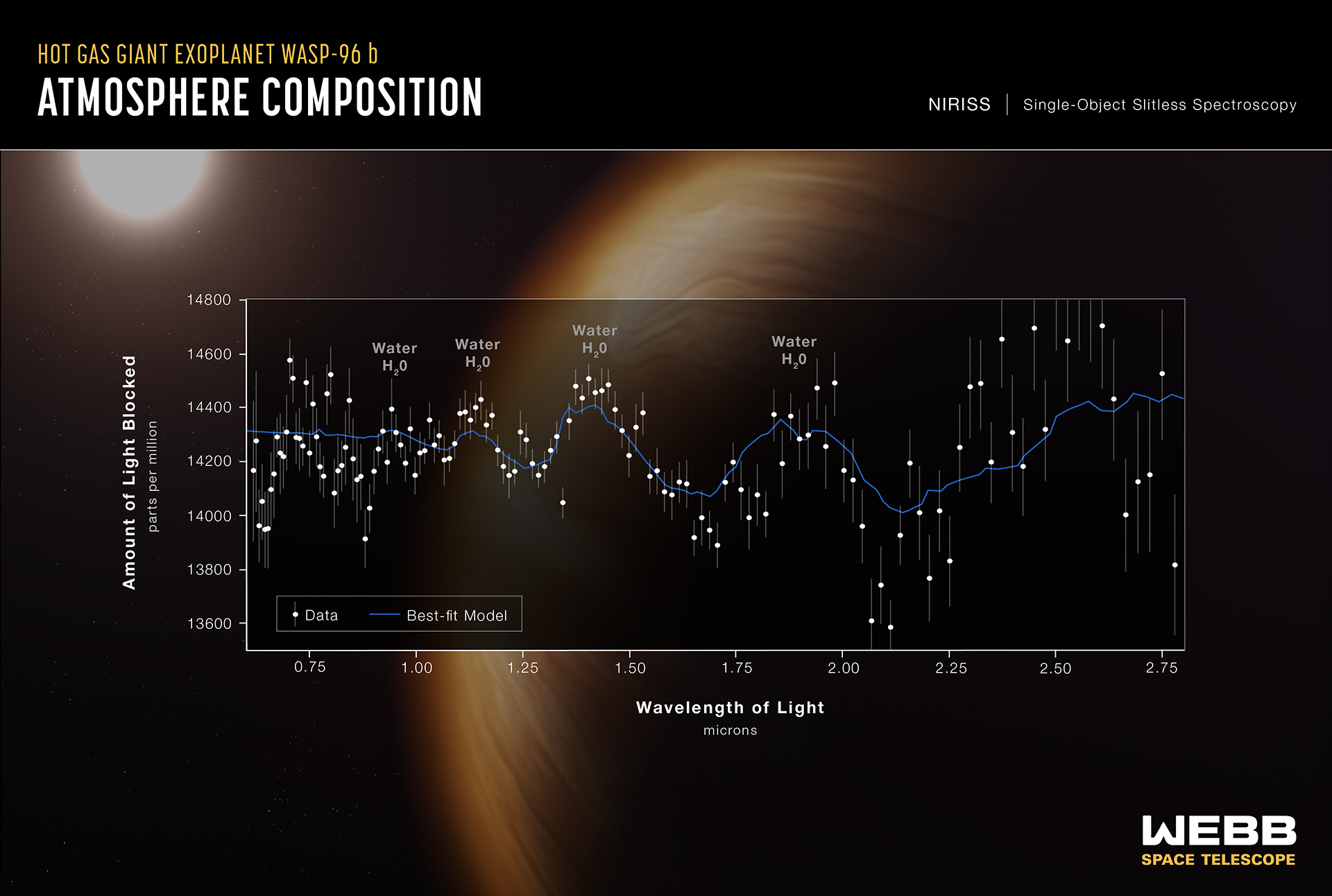

“NASA has just revealed the James Webb Space Telescope’s first set of images, from an awe-inspiring deep field of galaxies to a minute characterization of a distant exoplanet’s atmosphere. But how does a spacecraft a million miles away get dozens of gigabytes of data back to Earth? First let’s talk about the images themselves.

These aren’t just run-of-the-mill JPEGs, and the Webb isn’t just an ordinary camera. Like any scientific instrument, the Webb captures and sends home reams of raw data from its instruments: two high-sensitivity near- and mid-infrared sensors and a host of accessories that can specialize them for spectroscopy, coronagraphy and other tasks as needed.

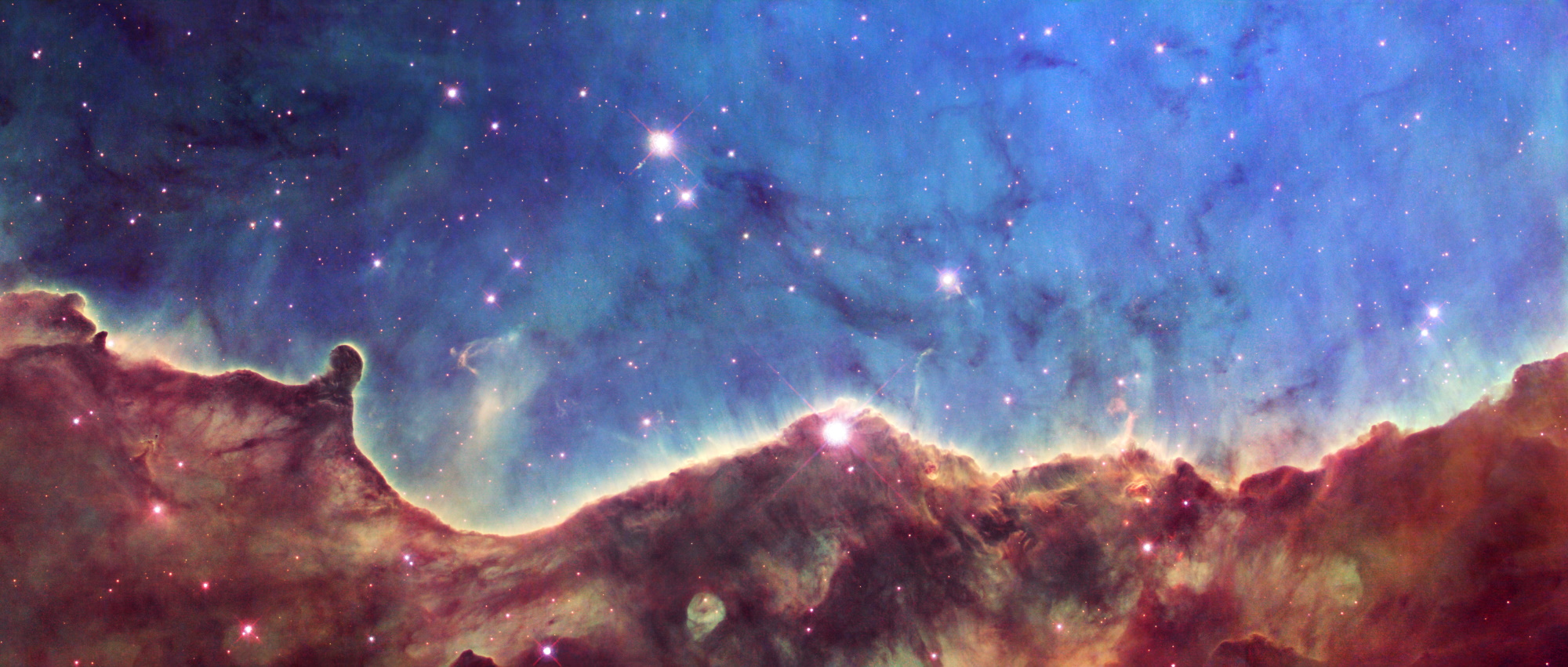

Let’s take one of the recently released first images with a direct comparison as an example. The Hubble took this image of the Carina Nebula back in 2008. Of course this is an incredible image. But the Hubble is more comparable to a traditional visible-light telescope, and more importantly it was launched back in 1990. Technology has changed somewhat since then! Here’s the Webb’s version of that same region:

It’s plain to any viewer that the Webb version has far more detail even looking at these small versions. The gauzy texture of the nebula resolves into intricate cloud formations and wisps, and more stars and presumably galaxies are clear and visible. (Though let us note here that the Hubble image has its own charms.) Let’s just zoom in on one region to emphasize the level of detail being captured, just left and up from center:

Extraordinary, right? But this detail comes at a cost: data! The Hubble image is about 23.5 megapixels, weighing in at 32 megabytes uncompressed. The Webb image (as made available post-handling) is about 123 megapixels, weighing in at approximately 137 megabytes. That’s more than five times the data, but even that doesn’t tell the whole story. The Webb’s specs have it sending data back at 25 times the throughput of the Hubble: not just bigger images, but more of them … from 3,000 times farther away.

“The Lagrange points are equilibrium locations where competing gravitational tugs on an object net out to zero. JWST joins two other craft currently occupying L2.”

The Hubble is in a low-Earth orbit, about 340 miles above the surface. That means communication with it is really quite simple: your phone reliably gets signals from GPS satellites much farther away, and it’s child’s play for scientists at NASA to pass information back and forth to a satellite in such a nearby orbit. JWST, on the other hand, is at the second Lagrange point, or L2, about a million miles from Earth, directly away from the sun. That’s four times as far as the moon ever gets and a much more difficult proposition in some ways. Here’s an animation from NASA showing how that orbit looks:

Fortunately this type of communication is far from unprecedented; we have sent and received large amounts of data from much further away. And we know exactly where the Webb and Earth will be at any given time, so while it isn’t trivial, it’s really just about picking the right tools for the job and doing very careful scheduling. From the beginning, the Webb was designed to transmit over the Ka band of radio waves, in the 25.9 gigahertz range, well into the ranges used for other satellite communications. (Starlink, for instance, also uses Ka, as well as others up around that territory.) That main radio antenna is capable of sending about 28 megabits per second, which is comparable to home broadband speeds — if the signal from your router took about five seconds to travel through a million miles of vacuum to reach your laptop.

“Purely illustrative for a sense of the distances — objects are not to scale, obviously.”

That gives it about 57 gigabytes of downlink capacity per day. There is a second antenna running at the lower S-band — amazingly, the same band used for Bluetooth, Wi-Fi and garage door openers — reserved for low-bandwidth things like software updates, telemetry and health checks. If you’re interested in the specifics, IEEE Spectrum has a great article that goes into more detail on this. This isn’t just a constant stream, though, since of course the Earth spins and other events may intercede. But because they’re dealing with mostly known variables, the Webb team plans out their contact times four or five months in advance, relaying the data through the Deep Space Network.
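The 28 Mbps and 57 GB figures can be cross-checked with a little arithmetic, assuming decimal gigabytes and ignoring protocol and coding overhead. Transmitting nonstop at full rate would move far more than 57 GB a day, while 57 GB takes only about four and a half hours at full rate, which fits the picture of scheduled contact windows rather than a round-the-clock link.

```python
RATE_BPS = 28e6         # Ka-band downlink rate quoted in the text, bits per second
DAILY_VOLUME_GB = 57    # quoted daily downlink capacity, decimal gigabytes
SECONDS_PER_DAY = 86_400

# Bits per day -> bytes -> gigabytes, if the link never stopped.
continuous_gb = RATE_BPS * SECONDS_PER_DAY / 8 / 1e9
# Gigabytes -> bits -> seconds at full rate -> hours.
hours_needed = DAILY_VOLUME_GB * 1e9 * 8 / RATE_BPS / 3600

print(f"Transmitting nonstop for a day: about {continuous_gb:.0f} GB")
print(f"Time at full rate to send {DAILY_VOLUME_GB} GB: about {hours_needed:.1f} hours")
```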

The Webb might be capturing data and sending it the same day, but both the capture and the transmission were planned long, long before. Interestingly, the Webb only has about 68 gigabytes of storage space internally, which you would think would make people nervous if it can send 57, but there are more than enough opportunities to offload that data so it won’t ever get that dreaded “drive full” message. But what you see in the end (even that big uncompressed 123-megapixel TIFF image) isn’t what the satellite sees. In fact, it doesn’t really even perceive color at all as we understand it.

Mad respect to the @NASA folks who took some the most significant images our our time, and made ALT text worthy of the occasion. pic.twitter.com/cMLwiN87Zw

— Kurt Opsahl @kurt@mstdn.social (@kurtopsahl) July 13, 2022

The data that comes into the sensors is in infrared, which is beyond the narrow band of colors that humans can see. We use lots of methods to see outside this band, of course, for example X-rays, which we capture and view in a way we can see by having them strike a film or digital sensor calibrated to detect them. It’s the same for the Webb. “The telescope is not really a point-and-shoot camera. So it’s not like we can just take a picture and there we have it, right? It’s a scientific instrument. So it was designed first and foremost to produce scientific results,” explained Joe DePasquale, of the Space Telescope Science Institute, in a NASA podcast.

http://nasa.gov/gravity-assist-how-we-make-webb-and-hubble-images

What it detects is not really data that humans can parse, let alone directly perceive. For one thing, the dynamic range is off the charts — that means the difference in magnitude between the darkest and lightest points. There’s basically nothing darker than the infinite blankness of space and not a lot brighter than an exploding sun. But if you have an image that includes both, taken over a period of hours, you end up with enormous deltas between dark and light in the data.

But it means that someone has to “translate” those non-visual wavelengths into visible light so we can see the JWST data as “photographs.” The person in charge of this at NASA is named Joe DePasquale. Their work is profiled here: https://t.co/XJvemG09Tj

— Trevor Paglen (@trevorpaglen) July 13, 2022

Now, our eyes and brains have pretty good dynamic range, but this blows them out of the water, and more importantly, there’s no real way to show it. “It basically looks like a black image with some white specks in it, because there’s such a huge dynamic range,” said DePasquale. “We have to do something called stretch the data, and that is to take the pixel values and sort of reposition them, basically, so that you can see all the detail that’s there.” Before you object, know that this is basically how all imagery is created: a selection of the spectrum is cut out and adapted for viewing by our very capable but also limited visual system.
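What “stretching” means can be sketched with an arcsinh curve, a common choice in astronomy for high-dynamic-range data: roughly linear for faint values and roughly logarithmic for bright ones. We don't know which curves the STScI image processors actually use; the pixel values and the soften parameter here are hypothetical.

```python
import numpy as np


def linear_8bit(data):
    """Map the full data range straight onto 0..255."""
    data = np.asarray(data, dtype=float)
    scaled = (data - data.min()) / (data.max() - data.min())
    return np.round(scaled * 255).astype(np.uint8)


def asinh_8bit(data, soften=1e-5):
    """arcsinh stretch onto 0..255: roughly linear below `soften` (in units of the
    full range), roughly logarithmic above it, so faint and bright pixels both show."""
    data = np.asarray(data, dtype=float)
    scaled = (data - data.min()) / (data.max() - data.min())
    stretched = np.arcsinh(scaled / soften) / np.arcsinh(1 / soften)
    return np.round(stretched * 255).astype(np.uint8)


# Hypothetical pixel values spanning six orders of magnitude: empty sky up to a bright star.
pixels = np.array([0.0, 1.0, 10.0, 100.0, 1e6])
print(linear_8bit(pixels))
print(asinh_8bit(pixels))
```

With the linear mapping every pixel except the brightest rounds to black, the “black image with some white specks” DePasquale describes, while the arcsinh version spreads the faint values across visible gray levels.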

Elizabeth Kessler, an art historian at Stanford, points out that the Hubble Pallet is very similar to the visual language of 19th Century paintings of the American West, particularly those by Albert Bierstadt and Thomas Moran. Here book is here: https://t.co/tCtQIqPlK1

— Trevor Paglen (@trevorpaglen) July 13, 2022

Because we can’t see in infrared and there’s no equivalent of red, blue and green up in those frequencies, the image analysts have to do complicated work that combines objective use of the data with subjective understanding of perception and indeed beauty. Colors may correspond to wavelengths in a similar order, or perhaps be divided up to more logically highlight regions that “look” similar but put out wildly different radiation. “We like to in the, you know, imaging community in astrophotography like to refer to this process as ‘representative color,’ instead of what it used to be called, are still many people call ‘false color images.’ I dislike the term ‘false color,’ because it has this connotation that we’re faking it, or it’s, you know, this isn’t really what it looks like; the data is the data. We’re not going in there and applying, like painting color on to the image. We are respecting the data from beginning to end. And we’re allowing the data to show through with color.”
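One simple way to implement the wavelength-ordered color assignment described above is to rank the filters by wavelength, spread hues from blue (shortest) to red (longest), then tint and sum the single-filter images. This is an illustrative sketch: the filter names follow the NIRCam naming style, but the list is hypothetical and is not a claim about which filters any particular Webb image used, and real processing involves far more judgment.

```python
import colorsys

import numpy as np


def chromatic_hues(wavelengths_um):
    """Give each filter an RGB color by wavelength rank: shortest -> blue (240 deg), longest -> red (0 deg)."""
    order = sorted(wavelengths_um, key=wavelengths_um.get)
    n = len(order)
    colors = {}
    for rank, name in enumerate(order):
        hue_deg = 240 * (1 - rank / (n - 1)) if n > 1 else 0.0
        colors[name] = colorsys.hsv_to_rgb(hue_deg / 360, 1.0, 1.0)
    return colors


def composite(layers, colors):
    """Tint each stretched single-filter image (values 0..1) with its filter's color, sum, rescale to 0..1."""
    first = next(iter(layers.values()))
    rgb = np.zeros(first.shape + (3,))
    for name, img in layers.items():
        rgb += np.asarray(img, dtype=float)[..., None] * np.array(colors[name])
    peak = rgb.max()
    return rgb / peak if peak > 0 else rgb


filters_um = {"F090W": 0.90, "F187N": 1.87, "F200W": 2.00,
              "F335M": 3.35, "F444W": 4.44, "F470N": 4.70}
colors = chromatic_hues(filters_um)
for name in sorted(filters_um, key=filters_um.get):
    r, g, b = colors[name]
    print(f"{name}: R={r:.2f} G={g:.2f} B={b:.2f}")
```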

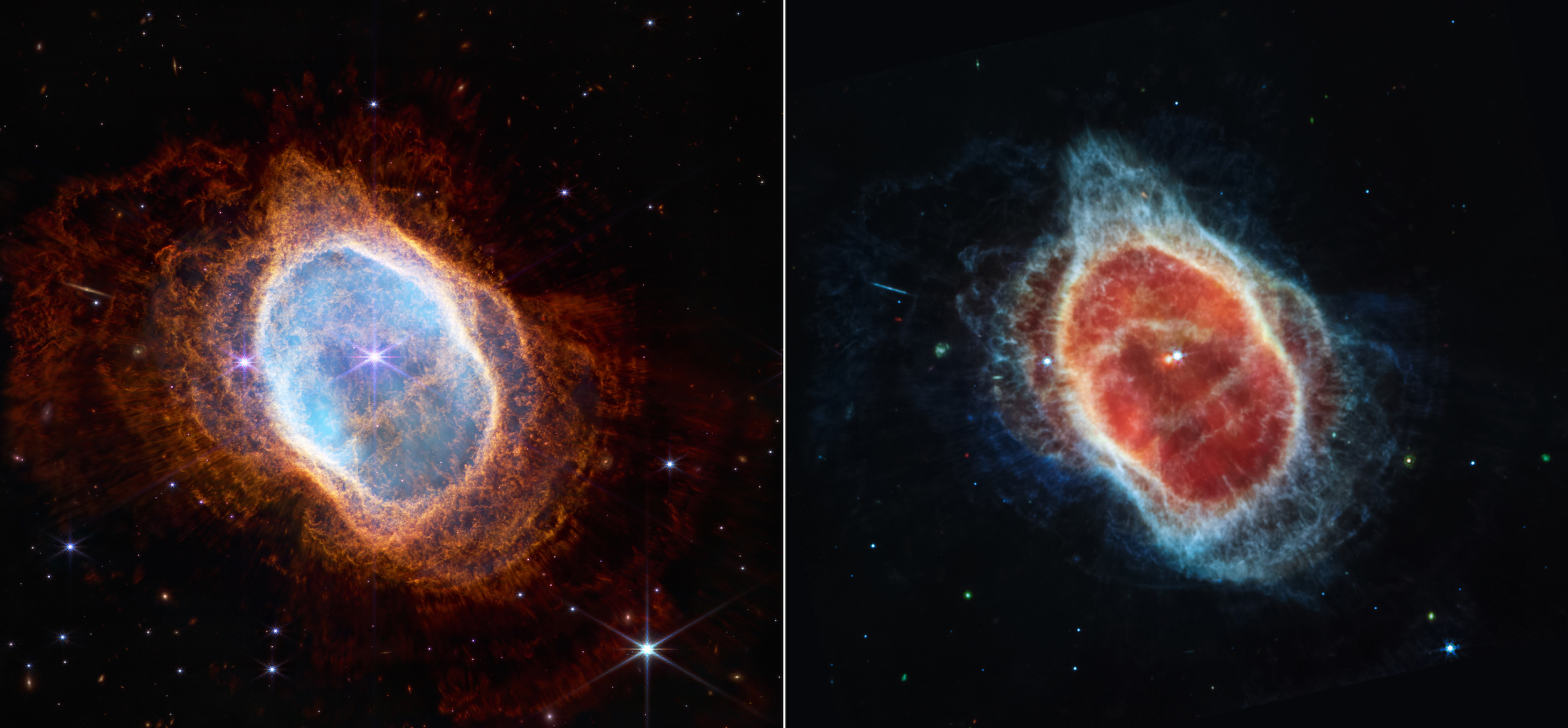

If you look at the two views of the nebula in the image above, consider that they were taken from the same angle, at more or less the same time, but using different instruments that capture different segments of the IR spectrum. Though ultimately both must be shown in RGB, the different objects and features found by inspecting longer wavelengths can be made visible by this kind of creative yet scientifically rigorous color-assignment method. And of course when the data is more useful as data than as a visual representation, there are even more abstract ways of looking at it.

An image of a faraway exoplanet may show nothing but a dot, but a spectrogram shows revealing details of its atmosphere, as you can see in this example immediately above. Collecting, transmitting, receiving, analyzing and presenting the data of something like the Webb is a complex task but one that hundreds of researchers are dedicating themselves to cheerfully and excitedly now that it is up and running. Anticipate even more creative and fascinating ways of displaying this information — with JWST just starting its mission a million miles out, we have lots to look forward to.”
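Behind a spectrum like this is simple geometry: when a planet crosses its star it blocks a fraction (Rp/Rs)^2 of the light, and at wavelengths where its atmosphere absorbs (a water band, say) the planet looks slightly larger, so the dip is slightly deeper. The sketch below uses hypothetical sizes and ignores limb darkening and other real-world effects.

```python
def transit_depth(planet_radius, star_radius):
    """Fraction of starlight blocked while the planet crosses the star: (Rp / Rs)**2."""
    return (planet_radius / star_radius) ** 2


# Hypothetical planet, sizes in units of the stellar radius.
R_STAR = 1.0
R_PLANET = 0.10
ATMOSPHERE_EXTRA = 0.002  # hypothetical extra opaque height inside an absorption band

clear = transit_depth(R_PLANET, R_STAR)
absorbing = transit_depth(R_PLANET + ATMOSPHERE_EXTRA, R_STAR)
print(f"Depth outside the band: {clear:.4%}")
print(f"Depth inside the band:  {absorbing:.4%}")
print(f"Difference: {(absorbing - clear) * 1e6:.0f} parts per million")
```

Measuring that tiny difference across many wavelengths is what turns a “dot” into a spectrum of the atmosphere.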

Here are some highlights from the countless wonders @NASAHubble has shown us since it launched in 1990.https://t.co/7HP1dVSepD

— NOVA | PBS (@novapbs) July 14, 2022

PREVIOUSLY

HARVESTING INFRARED

https://spectrevision.net/2014/03/07/harvesting-infrared/

INTERSTELLAR RADIO

https://spectrevision.net/2013/05/31/interstellar-radio/

LOST GOLF BALLS on the MOON

https://spectrevision.net/2022/05/12/farside-dub/