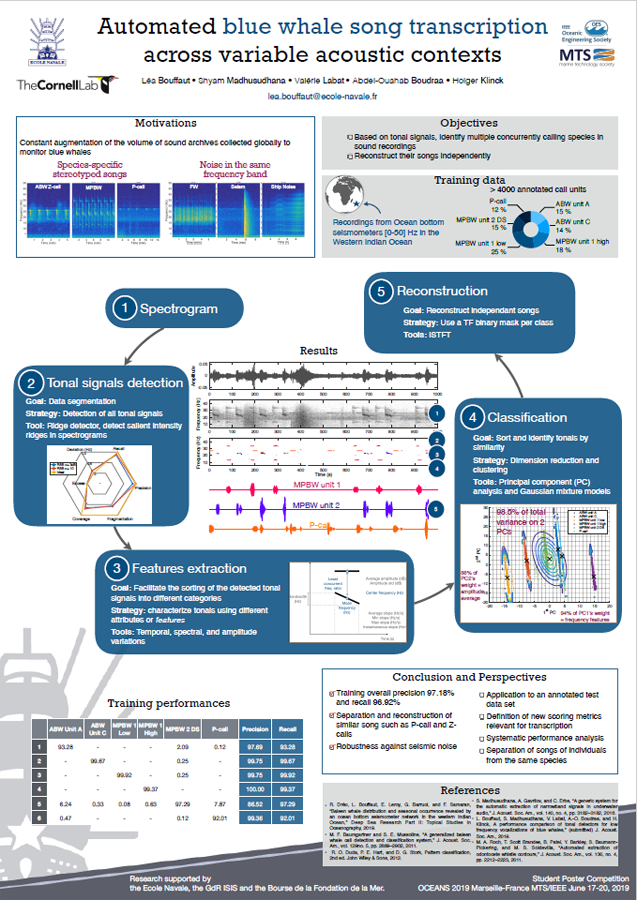

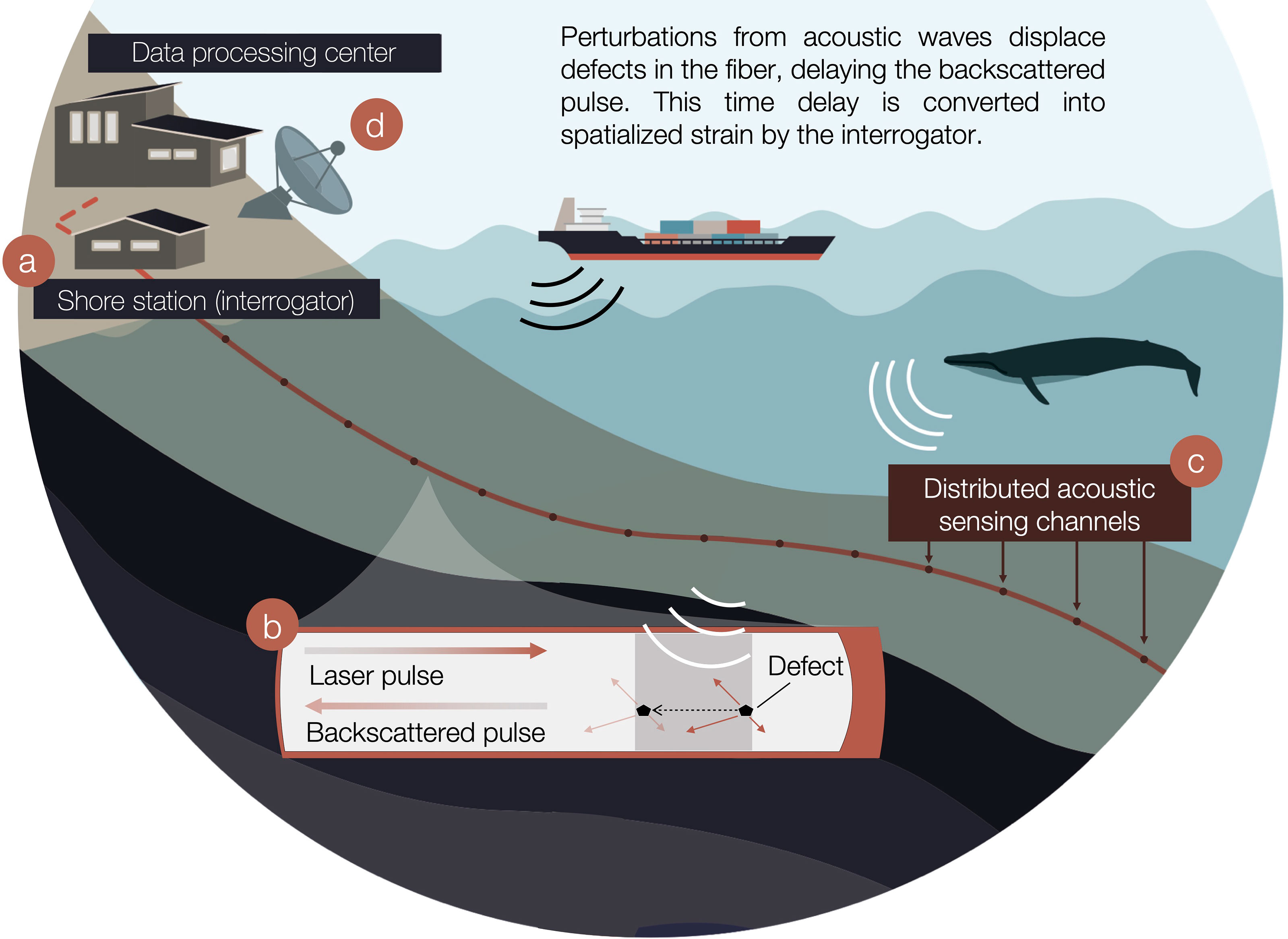

“Figure 1: Distributed acoustic sensing (DAS) for baleen whale monitoring. (A) On shore, an interrogator is connected to one end of an existing fiber optic cable to repurpose it into DAS. (B) It interrogates the fiber by sending laser pulses that are backscattered by anomalies while simultaneously, these defects are shifted under the effect of incoming acoustic waves. (C) The interrogator calculates time delays of the backscattered response at regularly spaced intervals along the fiber, named channels. Time delays are averaged over the gauge length and converted into longitudinal strain waveforms analogous to acoustic pressure. (D) DAS two-dimensional data is streamed in near-real-time to a remote data processing center.”

DISTRIBUTED ACOUSTIC SENSING

https://submarinecablemap.com

https://iris.edu/hq/initiatives/das_rcn

https://leabouffaut.home.blog/publications

https://projectceti.org/scientific-roadmap

https://npr.org/ai-program-detecting-whales

https://nytimes.com/animal-translators-whales

https://nytimes.com/humpback-whale-songs-cultural-evolution

https://frontiersin.org/articles/10.3389/fmars.2022.901348/full

https://phys.org/news/eavesdropping-whales-high-arctic

Eavesdropping on whales in the high Arctic

by Norwegian University of Science and Technology / July 5, 2022

“Whales are huge, but they live in an even larger environment—the world’s oceans. Researchers use a range of tools to study their whereabouts, including satellite tracking, aerial surveys, sightings and deploying individual hydrophones to listen for their calls. But now, for the first time ever, researchers have succeeded in passively listening to whales—essentially, eavesdropping on them—using existing underwater fiber optic cables.

https://twitter.com/WallStreetSilv/status/1554240565578956800

The technique, called distributed acoustic sensing, or DAS, uses an instrument called an interrogator to tap into a fiber optic system, turning unused, extra fibers in the cable into a long virtual array of hydrophones. The research was conducted in the Svalbard archipelago, in an area called Isfjorden, where baleen whales, such as blue whales, are known to forage during the summer. “I think this can change the field of marine bioacoustics,” said Léa Bouffaut, the first author of a paper just published in Frontiers in Marine Science. Bouffaut was a postdoc at NTNU, the Norwegian University of Science and Technology, when she worked on this research and is now at the K. Lisa Yang Center for Conservation Bioacoustics at Cornell University, where she continues expanding this work.

Bouffaut said the beauty of the system is that it could allow researchers to take advantage of an existing, worldwide network. “Deploying hydrophones is extremely expensive. But fiber optic cables are all around the world, and are accessible,” she said. “This could be much like how satellite imagery coverage of the Earth has allowed scientists from many different fields to do many different types of studies of the Earth. To me, this system could become like satellites in the ocean.” Bouffaut said that other types of whale monitoring that rely on sound often only provide single or a few points of location information from a hydrophone. Point locations provide limited coverage of an area, and of course aren’t evenly spread across all oceans, which can make it challenging for researchers to study migratory routes, for example.

In contrast, DAS not only allows researchers to detect whale vocalizations but also lets them use the fiber network to locate the whales in both space and time, with an unprecedented spatial resolution, she said. “With this system, which is what we can basically call a hydrophone array, we have the chance to cover a much bigger area for monitoring. And because we receive the sound at multiple angles, we can even say where the animal was—the position of the animal. And that’s a huge advantage. And if we take it even further, which still requires some extra work, this could happen in real time, which would really be a game changer for acoustic monitoring of whales,” said her colleague Hannah Joy Kriesell, one of the paper’s co-authors.
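The localization idea can be illustrated with a minimal sketch: a call reaches each channel of the cable at a slightly different time, and the pattern of those arrival times constrains where the source was. Everything below is an assumption made for illustration (a straight cable, a constant sound speed of 1,500 m/s, noise-free synthetic arrival times, a brute-force grid search, and the hypothetical function names `arrival_times` and `locate`); it is not the method used in the paper.

```python
import math

SOUND_SPEED = 1500.0  # m/s, assumed constant sound speed in seawater

def arrival_times(channels_m, src_along_m, src_range_m, t0=0.0):
    """Travel time from a source to each channel of a straight cable."""
    return [t0 + math.hypot(x - src_along_m, src_range_m) / SOUND_SPEED
            for x in channels_m]

def locate(channels_m, times_s, along_grid_m, range_grid_m):
    """Grid search for the source position that best explains the
    arrival-time pattern. The emission time is unknown, so the mean is
    removed from both the observed and the modeled times."""
    mean_obs = sum(times_s) / len(times_s)
    obs = [t - mean_obs for t in times_s]
    best = None
    for xs in along_grid_m:
        for r in range_grid_m:
            model = arrival_times(channels_m, xs, r)
            mean_mod = sum(model) / len(model)
            misfit = sum((o - (m - mean_mod)) ** 2 for o, m in zip(obs, model))
            if best is None or misfit < best[0]:
                best = (misfit, xs, r)
    return best[1], best[2]

# Synthetic example: channels every 100 m between 70 and 80 km of cable
channels = [70_000 + 100 * i for i in range(101)]
observed = arrival_times(channels, src_along_m=76_600, src_range_m=2_000, t0=12.3)
along_grid = range(70_000, 80_001, 100)   # candidate positions along the cable (m)
range_grid = range(500, 5_001, 100)       # candidate distances from the cable (m)
estimate = locate(channels, observed, along_grid, range_grid)
print(estimate)  # (position along cable in m, distance from cable in m)
```

With a single straight cable, this kind of fit gives the position along the cable and the distance from it, but cannot tell which side of the cable the source is on.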

“Audio associated to the series of stereotyped North Atlantic blue whale calls and down-sweeps represented on Figure 2D at 76.6 km, sped up 3.5 times.”

“Audio associated to the series of non-stereotyped arched sounds and down-sweeps represented on Figure 2D 25.1 km, sped up 3.5 times.”

“Audio associated to the series of down-sweeps represented on Figure 2D at 49.0 km, sped up 3.5 times.”

“High-quality beamformed audio of the blue whale stereotyped and D-calls represented on Figures 5C, G, K, sped up 3.5 times.”
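Blue whale calls are very low in frequency, so speeding up the playback raises their pitch into a range that is easier to hear. One simple way to get such a speed-up, sketched here as an assumption rather than as the authors' procedure, is to write the same samples to a WAV file while declaring a sample rate 3.5 times higher than the recording rate. The sampling frequency is the one quoted in the caption describing the Svalbard array; the 17 Hz test tone and the function name `write_sped_up_wav` are hypothetical.

```python
import io
import math
import struct
import wave

FS_DAS = 645.16   # Hz, sampling frequency quoted in the Svalbard array caption
SPEED_UP = 3.5    # playback speed factor quoted in the audio captions

def write_sped_up_wav(samples, fileobj, fs=FS_DAS, factor=SPEED_UP):
    """Write float samples in [-1, 1] as 16-bit mono WAV, declaring a sample
    rate `factor` times higher so playback is faster and higher-pitched."""
    playback_rate = round(fs * factor)  # WAV headers store integer rates
    frames = b"".join(
        struct.pack("<h", int(max(-1.0, min(1.0, s)) * 32767)) for s in samples
    )
    with wave.open(fileobj, "wb") as wav:
        wav.setnchannels(1)      # mono
        wav.setsampwidth(2)      # 16-bit samples
        wav.setframerate(playback_rate)
        wav.writeframes(frames)
    return playback_rate

# Synthetic 10 s tone at 17 Hz standing in for a low-frequency call
n = int(10 * FS_DAS)
tone = [0.5 * math.sin(2 * math.pi * 17.0 * i / FS_DAS) for i in range(n)]
buffer = io.BytesIO()
rate = write_sped_up_wav(tone, buffer)
print(rate, 17.0 * SPEED_UP)  # playback rate (Hz), tone frequency on playback (Hz)
```

Changing only the declared sample rate shortens the clip and shifts every frequency by the same factor, without resampling the data.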

The technology also allows researchers to hear other sounds carried through the water, from large tropical storms to earthquakes to ships passing by, said Martin Landrø, an NTNU geophysicist who was a co-author of the paper. Landrø is also head of the Centre for Geophysical Forecasting, a Centre for Research-based Innovation funded by the Research Council of Norway. “If anything is moving close to or making an acoustic noise close to that fiber, which is buried in the seabed, we can measure that,” he said. “So what we saw was a lot of ship traffic, of course, a lot of earthquakes, and we could also detect distant storms.

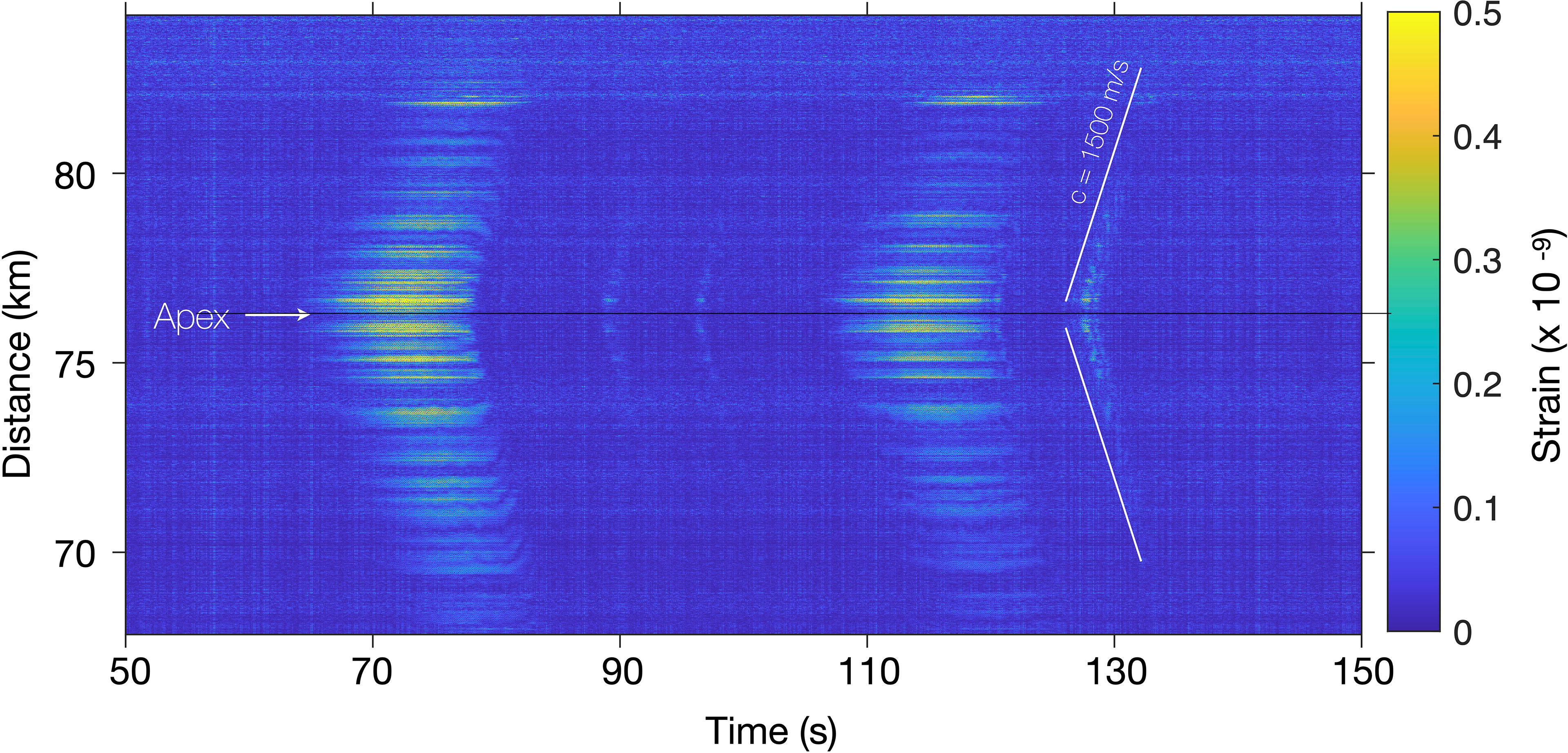

“Figure 3: Spatio-temporal representation of blue whale vocalizations recorded on the Svalbard DAS array. The t-x plot displays the temporal variations of the strain between 68 and 84 km of FO cable when receiving two successive stereotyped blue whale calls (at 70 and 110 s), intertwined with down-sweeps (D-calls; at ≃90, 95 and 127 s) recorded on July 10, 2020 at 03:55:30 UTC. The channel at 76.6 km was used to produce the spectrogram in Figure 2D.”

And last but not least, whales. We detected at least 830 whale vocalizations altogether.” Landrø said that detecting distant storms was possible because of the low frequency seismic signals that are generated by the waves from big storms. This method of detecting large storms from low-frequency waves at great distances was established by the oceanographer Walter Munk in 1963, when he measured waves from Antarctic storms on the Pacific Island of Samoa, Landrø said. “We were able to see storms that occurred in the South Atlantic 13,000 kilometers away on the low frequency part of the data, and we could determine the distance to the storm,” he said. “We detected at least four or five different large storms that occurred and we could go back to the meteorological data and identify them by name.”
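The article does not spell out how the distance was computed. One classic approach, sketched here under the assumption of deep-water swell dispersion, relies on longer-period waves travelling faster than shorter ones: the group velocity is g/(4πf), so the peak frequency of swell arriving from a storm at distance d rises linearly with time, with slope df/dt = g/(4πd). The synthetic observations and the function name `storm_distance_m` are hypothetical.

```python
import math

G = 9.81  # m/s^2, gravitational acceleration

def storm_distance_m(times_s, peak_freqs_hz):
    """Least-squares slope of peak frequency versus time, converted to a
    distance with the deep-water relation d = g / (4 * pi * df/dt)."""
    n = len(times_s)
    mean_t = sum(times_s) / n
    mean_f = sum(peak_freqs_hz) / n
    slope = (
        sum((t - mean_t) * (f - mean_f) for t, f in zip(times_s, peak_freqs_hz))
        / sum((t - mean_t) ** 2 for t in times_s)
    )
    return G / (4 * math.pi * slope)

# Synthetic observations: swell from a storm 13,000 km away, sampled every 6 h
true_distance_m = 13_000e3
times = [6 * 3600 * k for k in range(12)]
freqs = [0.05 + G * t / (4 * math.pi * true_distance_m) for t in times]
estimate_km = storm_distance_m(times, freqs) / 1e3
print(round(estimate_km))  # estimated distance to the storm in km
```

The farther the storm, the more the fast and slow waves separate on the way, so a gentler frequency slope indicates a more distant source.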

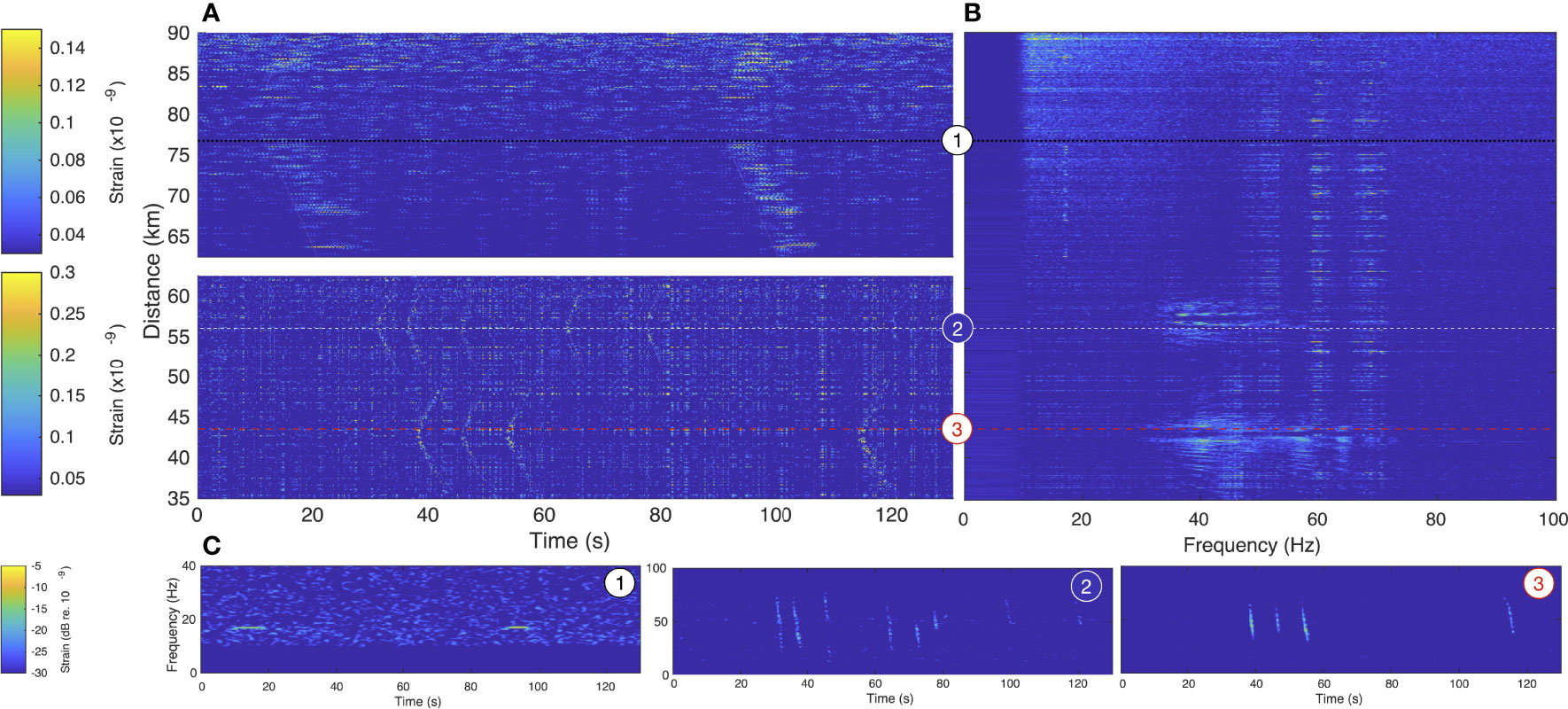

“Figure 4: Vocalizing baleen whales recorded simultaneously at three different locations along the Svalbard DAS array. (A) Spatio-temporal (t-x), (B) Spatio-spectral (f-x) and (C) Spectro-temporal (spectrogram) representations of a 130 s-long portion of recording from June 26, 2020 at 05:24:40 UTC, between 35 and 90 km of fiber optic cable. Each whale position (around 76.5 km, 57.5 km and 44.2 km) is given a number 1-3, to facilitate panel associations.”

The researchers worked with Sikt, the Norwegian Agency for Shared Services in Education and Research, which provided access to 250 km of fiber optic cable in Svalbard, buried on the seabed between the archipelago’s main town, Longyearbyen, and Ny-Ålesund, a research settlement on a peninsula to the northwest. The cable goes from a sheltered fjord, called Isfjorden, and out into the open ocean, where 120 km of the cable was used as a hydrophonic array. The research group also included Alcatel Submarine Networks Norway, which provided the interrogators—the instruments that allowed the group to tap into the Sikt fiber optic cable. Two researchers traveled to Longyearbyen in June 2020, at the beginning of the COVID-19 pandemic, and were able to use the interrogator for 40 days, listening along the length of the 120 km long cable.

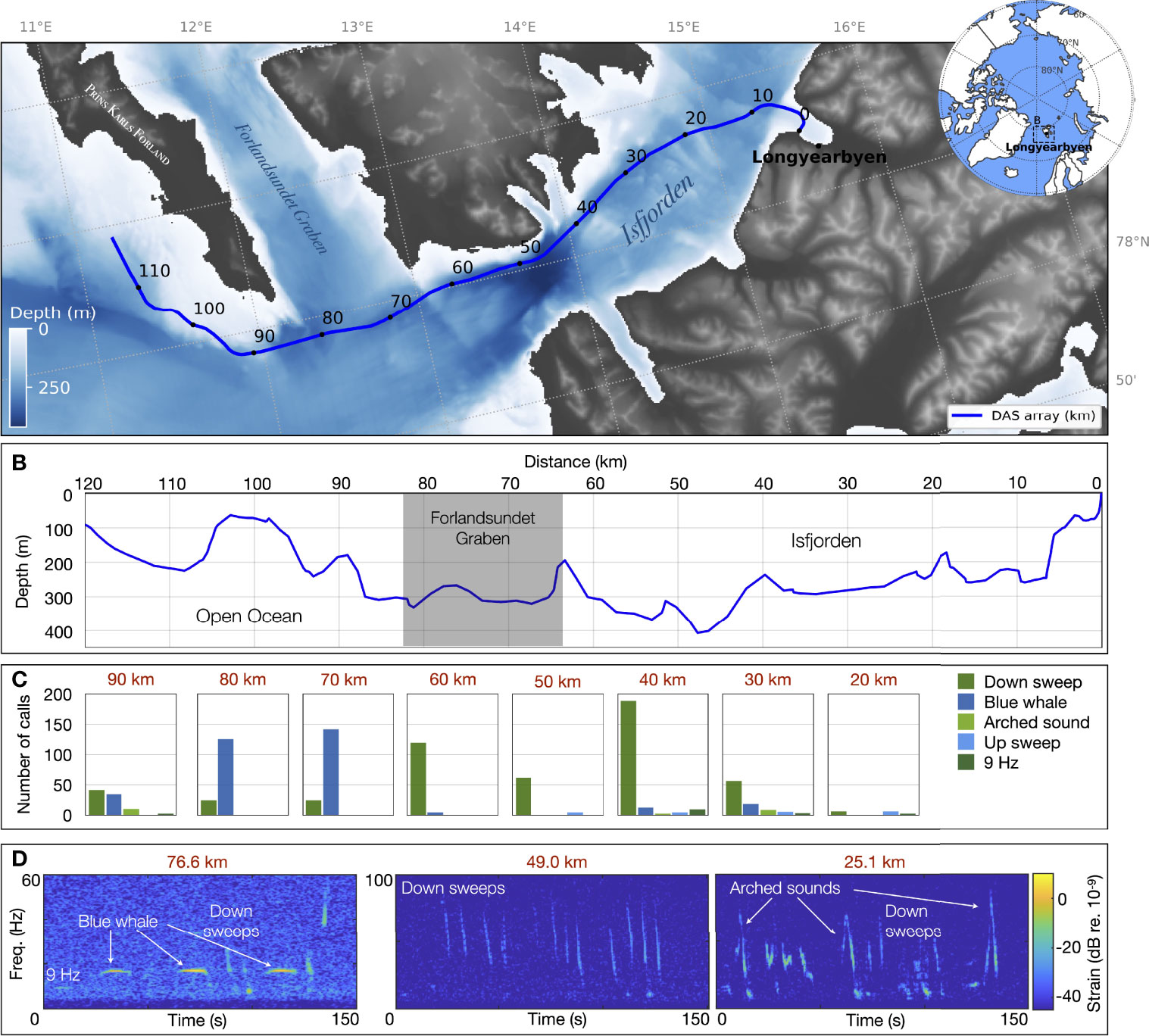

“Figure 2: Baleen whale vocalizations detected over the 120 km of the Svalbard underwater distributed acoustic sensing (DAS) array. (A) The DAS crosses Isfjorden out to the open sea, bypassing the South of Prins Karls Forland in Svalbard, Norway. The interrogator end of the fiber is located on shore in Longyearbyen, recording data with a spatial sampling of 4.08 m at a sampling frequency of fs = 645.16 Hz between June 23 and August 5, 2020. (B) The DAS fiber optic cable is trenched 1-2 m deep in the seafloor and follows bathymetric variations with an average depth of ≃216 m. Number of calls (C) and examples (D) representative of the diversity of baleen whale vocalizations detected along the DAS during the entire recording period. Associated sounds are available in supplementary material Audio 1–3.”

“The fiber cable between Longyearbyen and Ny-Ålesund, which was put in production in 2015 after five years of planning and prework and mainly funded by our ministry, was intended to serve the research community and the geodetic station in Ny-Ålesund with high and resilient communication capacity,” said Olaf Schjelderup, head of Sikt’s national R&E network and another paper co-author. “The DAS sensing and whale observation experiment shows a completely new use of this kind of fiber optic infrastructure, resulting in excellent, unique science.” Schjelderup noted that another advantage of the experiment was the high-capacity data communication provided by the Norwegian R&E network (named Uninett), which allowed near real-time streaming of the raw data from the DAS sensing unit in Longyearbyen all the way to Trondheim. “The researchers could, from Trondheim, almost instantly, start studying the signal recordings from the sea outside Svalbard. This is a very good example of a paradigm shift in distributed data collection,” he said. “What has been done at Svalbard here paves the way for rolling out more of these kinds of fiber optic sensors as permanent infrastructure—collecting and processing data from different geographies and locations in near real time—both for research and different operational purposes.”

“Animation showing the successive 2-s f-x plots used to construct the integrated representation of Figure 4B.”
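A single f-x (spatio-spectral) slice of the kind mentioned in this caption can be sketched as the magnitude spectrum of each channel over a short time window. The example below is only an illustration under stated assumptions: it uses a plain discrete Fourier transform evaluated at a few chosen frequencies, three synthetic channels, and a hypothetical function name `fx_slice`. How the successive slices were combined into the integrated figure is not described here and is not reproduced.

```python
import cmath
import math

FS = 645.16       # Hz, sampling frequency quoted in the Svalbard array caption
WINDOW_S = 2.0    # s, window length mentioned in the animation caption

def fx_slice(window, freqs_hz, fs=FS):
    """For each channel (a list of samples), return the magnitude of its
    discrete Fourier transform at each requested frequency."""
    result = []
    for trace in window:
        n = len(trace)
        row = []
        for f in freqs_hz:
            acc = sum(s * cmath.exp(-2j * math.pi * f * k / fs)
                      for k, s in enumerate(trace))
            row.append(abs(acc) / n)
        result.append(row)
    return result

# Synthetic 2 s window: three channels, only the middle one carries a 17 Hz tone
n_samples = int(WINDOW_S * FS)
silent = [0.0] * n_samples
tone = [math.sin(2 * math.pi * 17.0 * k / FS) for k in range(n_samples)]
freqs = list(range(10, 31))  # 10 to 30 Hz in 1 Hz steps
fx = fx_slice([silent, tone, silent], freqs)

peak_freq = freqs[max(range(len(freqs)), key=lambda i: fx[1][i])]
print(peak_freq)  # frequency (Hz) with the most energy on the middle channel
```

Stacking such rows by channel position gives an image with distance on one axis and frequency on the other, in which a vocalizing animal shows up as energy concentrated around the nearest channels.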

Bouffaut and Kriesell, a researcher at NTNU’s Department of Electronic Systems, worked on analyzing the 40 days of round-the-clock data from the experiment. In total they had to analyze 7 terabytes per day, or roughly 250 terabytes of data for the entire period. They started by looking at signals from locations on the fiber that were 10 km apart, which “made it manageable,” Bouffaut said. The challenge, in addition to the amount of data involved, was that “we’re looking for signals without knowing exactly what to expect. This is new technology and a new type of data that no one has looked at for finding whales,” Bouffaut said. The work was painstaking, but “it was very, super exciting, especially when we started seeing whale signals,” Bouffaut said. “But I get this question a lot, even from bioacousticians: how do you know this is a whale? And I say, ‘how do you know it’s a whale when you’ve recorded from a hydrophone? We recognized the frequency, the pattern, the repetition, and we listened to it.’” Bouffaut added that now that the researchers know the data better, they could train machine learning models to simplify and automate the data analysis process.
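To give a sense of how much that first pass thins out the data, the figures quoted in the article and captions can be combined in a short back-of-the-envelope sketch. The 4.08 m channel spacing and the 120 km array length come from the text; numbering channels from zero at the start of the array and picking the nearest channel every 10 km are assumptions about how such a subset might be chosen.

```python
CHANNEL_SPACING_M = 4.08     # spatial sampling quoted in the figure caption
ARRAY_LENGTH_M = 120_000     # length of cable used as an array
SUBSET_STEP_M = 10_000       # "locations on the fiber that were 10 km apart"

# Number of channels along the array, counting the one at position 0
n_channels = int(ARRAY_LENGTH_M / CHANNEL_SPACING_M) + 1

# Channel stride closest to 10 km, and the resulting channel indices
stride = round(SUBSET_STEP_M / CHANNEL_SPACING_M)
subset = list(range(0, n_channels, stride))

print(n_channels)   # total number of channels along 120 km
print(len(subset))  # channels kept when sampling every ~10 km
print([round(i * CHANNEL_SPACING_M / 1000, 1) for i in subset])  # positions in km
print(f"{len(subset) / n_channels:.4%} of the channels")
```

Keeping roughly a dozen channels out of tens of thousands is what turns terabytes per day into something that can be browsed by eye and ear.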

https://soundsawesomehome.files.wordpress.com/2020/01/bouffaut2019_phd.pdf

Bouffaut and Kriesell identified what are called stereotyped calls for North Atlantic blue whales outside of Isfjorden. These kinds of calls are associated with male vocalizations. They also saw what are called D-calls, which are vocalizations in which the sound sweeps down, and can be made by males, females and calves. They detected these calls inside the sheltered waters of the fjord. Previous researchers have linked D-calls with foraging or social contexts, the researchers said. Bouffaut said the value of this kind of system was especially clear in the Arctic, where warming from climate change is happening two to three times more quickly than average. “The Arctic is changing very fast. And both animal and human use of the area is changing as fast as the ice melts,” Bouffaut said. Whales such as blue whales aren’t year-round users of the region yet, but as the ice melts, this could change, she noted. At the same time, the disappearing ice cover opens the Arctic to increased shipping, fishing and other activities, such as tourism. “So if whales are changing their uses of this area, and maybe using this area for more than for foraging or for activities where they’re very vulnerable, then having this type of technology can help us monitor these changes,” Bouffaut said.

https://soundsawesomehome.files.wordpress.com/2020/01/presentation.pdf

It is possible to see whales when they come to the surface to breathe, but sound is acknowledged as the best way to study whales because they’re otherwise very elusive. “So by studying their sound production, their calls and their vocalizations, we can learn a lot about them. We can learn where they are during different seasons, and how and where they migrate to. So we get a lot of information by eavesdropping on them,” Kriesell said.

And if DAS can be set up so that the information can be analyzed in real time, the information could be relayed to ships traveling in waters where whales are feeding or socializing, and inform stakeholders for direct conservation action, Bouffaut said. “We could potentially reduce the risk of ship strikes with whales. That would be a very big deal,” Kriesell added. “Arctic ice is melting, and ship traffic has drastically increased in the Arctic. And that’s a problem for the animals. So if we have a means to inform ships about the location of whales in real time, we could stop or at least reduce the risk for ship strikes.”

PREVIOUSLY

SUBMARINE CABLE MAPS

https://spectrevision.net/2019/02/21/submarine-cable-maps/

CONVERSATIONAL DOLPHIN for BEGINNERS (cont.)

https://spectrevision.net/2015/12/10/conversational-dolphin-for-beginners-cont/

WHALESONG WAVELETS

https://spectrevision.net/2006/08/17/whalesong-wavelets/