“The Carna botnet was used to create a map of all public facing computers in the world.”

FOLK MODELS of HOME COMPUTER SECURITY

http://www.schneier.com/blog/archives/2011/03/folk_models_in.html

http://www.rickwash.com/papers/rwash-dissertation-final.pdf

http://www.rickwash.com/papers/rwash-homesec-soups10-final.pdf

by Rick Wash / at SOUPS (Symposium on Usable Privacy and Security)

Home computer systems are insecure because they are administered by untrained users. The rise of botnets has amplified this problem; attackers compromise these computers, aggregate them, and use the resulting network to attack third parties. Despite a large security industry that provides software and advice, home computer users remain vulnerable. I identify eight ‘folk models’ of security threats that are used by home computer users to decide what security software to use, and which expert security advice to follow: four conceptualizations of ‘viruses’ and other malware, and four conceptualizations of ‘hackers’ that break into computers. I illustrate how these models are used to justify ignoring expert security advice. Finally, I describe one reason why botnets are so difficult to eliminate: they cleverly take advantage of gaps in these models so that many home computer users do not take steps to protect against them.

1. INTRODUCTION

Home users are installing paid and free home security software at a rapidly increasing rate.{1} These systems include anti-virus software, anti-spyware software, personal firewall software, personal intrusion detection / prevention systems, computer login / password / fingerprint systems, and intrusion recovery software. Nonetheless, security intrusions and the costs they impose on other network users are also increasing. One possibility is that home users are starting to become well-informed about security risks, and that soon enough of them will protect their systems that the problem will resolve itself. However, given the “arms race” history in most other areas of networked security (with intruders becoming increasingly sophisticated and numerous over time), it is likely that the lack of user sophistication and non-compliance with recommended security system usage policies will continue to limit home computer security effectiveness. To design better security technologies, it helps to understand how users make security decisions, and to characterize the security problems that result from these decisions. To this end, I have conducted a qualitative study to understand users’ mental models [18, 11] of attackers and security technologies. Mental models describe how a user thinks about a problem; it is the model in the person’s mind of how things work. People use these models to make decisions about the effects of various actions [17]. In particular, I investigate the existence of folk models for home computer users. Folk models are mental models that are not necessarily accurate in the real world, thus leading to erroneous decision making, but are shared among similar members of a culture [11]. It is well-known that in technological contexts users often operate with incorrect folk models [1]. To understand the rationale for home users’ behavior, it is important to understand the decision model that people use.
If technology is designed on the assumption that users have correct mental models of security threats and security systems, it will not induce the desired behavior when they are in fact making choices according to a different model. As an example, Kempton [19] studied folk models of thermostat technology in an attempt to understand the wasted energy that stems from poor choices in home heating. He found that his respondents possessed one of two mental models for how a thermostat works. Both models can lead to poor decisions, and both models can lead to correct decisions that the other model gets wrong. Kempton concludes that “Technical experts will evaluate folk theory from this perspective [correctness] – not by asking whether it fulfills the needs of the folk. But it is the latter criterion […] on which sound public policy must be based.” The same argument holds for technology design: whether the folk models are correct or not, technology should be designed to work well with the folk models actually employed by users.{2} For home computer security, I study two related research questions: 1) Potential threats: How do home computer users conceptualize the information security threats that they face? 2) Security responses: How do home computer users apply their mental models of security threats to make security-relevant decisions? Despite my focus on “home computer users,” many of these problems extend beyond the home; most of my analysis and understanding in this paper is likely to generalize to a whole class of users who are unsophisticated in their security decisions. This includes many university computers, computers in small businesses that lack IT support, and personal computers used for business purposes.

{1} Despite a worldwide recession, the computer security industry grew 18.6% in 2008, totaling over $13 billion according to a recent Gartner report [9]

{2} It may be that users can be re-educated to use more correct mental models, but generally it is more difficult to re-educate

http://www.csoonline.com/documents/flash/ammap/ammap.swf

1.1 Understanding Security

Managing the security of a computer system is very difficult. Ross Anderson’s [2] study of Automated Teller Machine (ATM) fraud found that the majority of the fraud committed using these machines was not due to technical flaws, but to errors in deployment and management failures. These problems illustrate the difficulty that even professionals face in producing effective security. The vast majority of home computers are administered by people who have little security knowledge or training. Existing research has investigated how non-expert users deal with security and network administration in a home environment. Dourish et al. [12] conducted a related study, inquiring not into mental models but how corporate knowledge workers handled security issues. Gross and Rosson [15] also studied what security knowledge end users possess in the context of large organizations. And Grinter et al. [14] interviewed home network users about their network administration practices. Combining the results from these papers, it appears that many users exert much effort to avoid security decisions. All three papers report that users often find ways to delegate the responsibility for security to some external entity; this entity could be technological (like a firewall), social (another person or IT staff), or institutional (like a bank). Users do this because they feel like they don’t have the skills to maintain proper security. However, despite this delegation of responsibility, many users still make numerous security-related decisions on a regular basis. These papers do not explain how those decisions get made; rather, they focus mostly on the anxiety these decisions create. I add structure to these observations by describing how folk models enable home computer users to make security decisions they cannot delegate. I also focus on differences between people, and characterize different methods of dealing with security issues rather than trying to find general patterns.
The folk models I describe may explain differences observed between users in these studies. Camp [6] proposed using mental models as a framework for communicating complex security risks to the general populace. She did not study how people currently think about security, but proposed five possible models that may be useful. These models take the form of analogies or metaphors with other similar situations: physical security, medical risks, crime, warfare, and markets. Asgharpour et al. [3] built on this by conducting a card sorting experiment that matches these analogies with the mental models of users. They found that experts and non-experts show sharp differences in which analogy their mental model is closest to. Camp et al. began by assuming a small set of analogies that they believe function as mental models. Rather than pre-defining the range of possible models, I treat these mental models as a legitimate area for inductive investigation, and endeavor to uncover users’ mental models in whatever form they take. This prior work confirms that the concept of mental models may be useful for home computer security, but made assumptions which may or may not be appropriate. I fill in the gap by inductively developing an understanding of just what mental models people actually possess. Also, given the vulnerability of home computers and this finding that experts and non-experts differ sharply [3], I focus solely on non-expert home computer users. Herley [16] argues that non-expert users reject security advice because it is rational to do so. He believes that security experts provide advice that ignores the costs of the users’ time and effort, and therefore overestimates the net value of security. I agree, though I dig deeper into understanding how users actually make these security / effort tradeoffs.

1.2 Botnets and Home Computer Security

In the past, computers were targeted by hackers approximately in proportion to the amount of value stored on them or accessible from them. Computers that stored valuable information, such as bank computers, were a common target, while home computers were fairly innocuous. Recently, attackers have used a technique known as a ‘botnet,’ where they hack into a number of computers and install special ‘control’ software on those computers. The hacker can give a master control computer a single command, and it will be carried out by all of the compromised computers (called zombies) it is connected to [4, 5]. This technology enables crimes that require large numbers of computers, such as spam, click fraud, and distributed denial of service [26]. Observed botnets range in size from a couple hundred zombies to 50,000 or more zombies. As John Markoff of the New York Times observes, botnets are not technologically novel; rather, “what is new is the vastly escalating scale of the problem” [21]. Since any computer with an Internet connection will be an effective zombie, hackers have logically turned to attacking the most vulnerable population: home computers. Home computer users are usually untrained and have few technical skills. While some software has improved the average level of security of this class of computers, home computers still represent the largest population of vulnerable computers. When compromised, these computers are often used to commit crimes against third parties. The vulnerability of home computers is a security problem for many companies and individuals who are the victims of these crimes, even if their own computers are secure [7].

1.3 Methods

I conducted a qualitative inquiry into how home computer users understand and think about potential threats. To develop depth in my exploration of the folk models of security, I used an iterative methodology as is common in qualitative research [24]. I conducted multiple rounds of interviews punctuated with periods of analysis and tentative conclusions. The first round of 23 semi-structured interviews was conducted in Summer 2007. Preliminary analysis proceeded throughout the academic year, and a second round of 10 interviews was conducted in Summer 2008, for a total of 33 respondents. This second round was more focused, and specifically searched for negative cases of earlier results [24]. Interviews averaged 45 minutes each; they were audio recorded and transcribed for analysis. Respondents were chosen from a snowball sample [20] of home computer users evenly divided between three mid-western U.S. cities. I began with a few home computer users that I knew in these cities. I asked them to refer me to others in the area who might be information-rich informants. I screened these potential respondents to exclude people who had expertise or training in computers or computer security. From those not excluded, I purposefully selected respondents for maximum variation [20]: I chose respondents from a wide variety of backgrounds, ages, and socio-economic classes. Ages ranged from undergraduate (19 years old) up through retired (over 70). Socio-economic status was not explicitly measured, but ranged from a recently graduated artist living in a small efficiency up to a successful executive who owns a large house overlooking the main river through town. Selecting for maximal variation allows me to document diverse variations in folk models and identify important common patterns [20]. After interviewing the chosen respondents, I grew my potential interview pool by asking them to refer me to more people with home computers who might provide useful information.
This snowballing through recommendations ensured that the contacted respondents would be information-rich [20] and cooperative. These new potential respondents were also screened, selected, and interviewed. The method does not generate a sample that is representative of the population of home computer users. However, I don’t believe that the sample is a particularly special or unusual group; it is likely that there are other people like them in the larger population.

I developed an (IRB approved) face-to-face semi-structured interview protocol that pushes subjects to describe and use their mental models, based on formal methods presented by D’Andrade [11]. I specifically probed for past instances where the respondents would have had to use their mental model to make decisions, such as past instances of security problems, or efforts undertaken to protect their computers. By asking about instances where the model was applied to make decisions, I enabled the respondents to uncover beliefs that they might not have been consciously aware of. This also ensures that the respondents believe their model enough to base choices on it. The majority of each interview was spent on follow-up questions, probing deeper into the responses of the subject. This method allows me to describe specific, detailed mental models that my participants use to make security decisions, and to be confident that these are models that the participants actually believe. My focus in the first round was broad and exploratory. I asked about any security-related problems the respondent had faced or was worried about; I also specifically asked about viruses, hackers, data loss, and data exposure (identity theft). I probed to discover what countermeasures the respondents used to mitigate these risks. Since this was a semi-structured interview, I followed up on many responses by probing for more information. After preliminary analysis of this data, I drew some tentative conclusions and listed points that needed clarification. To better elucidate these models and to look for negative cases, I conducted 10 second-round interviews using a new (IRB approved) interview protocol. In this round, I focused more on three specific threats that subjects face: viruses, hackers, and identity theft. For this second round, I also used an additional interviewing technique: hypothetical scenarios.
This technique was developed to help focus the respondents and elicit additional information not present in the first round of interviews. I presented the respondents with three hypothetical scenarios and asked the subjects for their reaction. The three scenarios correspond to each of the three main themes for the second round: finding out you have a virus, finding out a hacker has compromised your computer, and being informed that you are a victim of identity theft. For each scenario, after the initial description and respondent reaction, I added an additional piece of information that contradicted the mental models I discovered after the first round. For example, one preliminary finding from the first round was that people rarely talked about the creation of computer viruses; it was unclear how they would react to a computer virus that was created by people for a purpose. In the virus scenario, I informed the respondents that the virus in question was written by the Russian mafia. This fact was taken out of recent news linking the Russian mafia to widespread viruses such as Netsky, Bagle, and Storm.{3} Once I had all of the data collected and transcribed, I conducted both inductive and deductive coding of the data to look both for predetermined and emergent themes [23]. I began with a short list of major themes I expected to see from my pilot interviews, such as information about viruses, hackers, identity theft, countermeasures, and sources of information. I identified and labeled (coded) instances when the respondents discussed these themes. I then expanded the list of codes as I noticed interesting themes and patterns emerging. Once all of the data was coded, I summarized the data on each topic by building a data matrix [23].{4} This data matrix helped me to identify basic patterns in the data across subjects, to check for representativeness, and to look for negative cases [24].

After building the initial summary matrices, I identified patterns in the way respondents talked about each topic, paying specific attention to word choices, metaphors employed, and explicit content of statements. Specifically, I looked for themes in which users differ in their opinions (negative case analysis). These themes became the building blocks for the mental models. I built a second matrix that matched subjects with these features of mental models.{5} This second matrix allowed me to identify and characterize the various mental models that I encountered. Table 7 in the Appendix shows which participants from Round 2 had each of the 8 models. A similar table was developed for the Round 1 participants. I then took the description of the model back to the data, verified when the model description accurately represented the respondents’ descriptions, and looked for contradictory evidence and negative cases [24]. This allowed me to update the models with new information or insights garnered by following up on surprises and incorporating outliers. This was an iterative process; I continued updating model descriptions, looking for negative cases, and checking for representativeness until I felt that the model descriptions accurately represented the data. In this process, I developed further matrices as data visualizations, some of which appear in my descriptions below.
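
To make the idea of a respondent-by-theme data matrix concrete, here is a minimal sketch in Python. It is an illustration only, not the procedure or tooling used in the study: the respondent labels, the coded segments, and the helper names `build_matrix` and `respondents_without` are all hypothetical, and treating "never mentioned a theme" as a negative-case candidate is a simplifying assumption.

```python
from collections import defaultdict

# Hypothetical coded segments: (respondent label, code label).
# These pairs are invented for illustration; they are not data from the study.
coded_segments = [
    ("R1", "viruses"),
    ("R1", "countermeasures"),
    ("R2", "hackers"),
    ("R2", "viruses"),
    ("R3", "identity theft"),
    ("R1", "viruses"),
]


def build_matrix(segments):
    """Count how often each respondent's transcript was coded with each theme."""
    matrix = defaultdict(lambda: defaultdict(int))
    for respondent, code in segments:
        matrix[respondent][code] += 1
    # Convert to plain dicts so that later lookups cannot silently add keys.
    return {respondent: dict(codes) for respondent, codes in matrix.items()}


def respondents_without(matrix, code):
    """List respondents never coded with a theme (candidates for follow-up)."""
    return sorted(r for r, codes in matrix.items() if code not in codes)


matrix = build_matrix(coded_segments)
```

Reading across a row of such a matrix shows which themes a single respondent raised; reading down a column shows how widely a theme is shared, which is one rough way to check representativeness before returning to the transcripts.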

{3} http://www.linuxinsider.com/story/33127.html?wlc=1244817301

{4} A fragment of this matrix can be seen in Table 5 in the Appendix.

{5} A fragment of this matrix is Table 6 in the Appendix.

2. FOLK MODELS of SECURITY THREATS

I identified a number of different folk models in the data. Every folk model was shared by multiple respondents in this study. The purpose of qualitative research is not to generalize to a population; rather, it is to explore a phenomenon in depth. To avoid misleading readers, I do not report how many users possessed each folk model. Instead, I describe the full range of folk models I observed. I divide the folk models into two broad categories based on a distinction that most subjects possessed: 1) models about viruses, spyware, adware, and other forms of malware which everyone referred to under the umbrella term ‘virus’; and 2) models about the attackers, referred to as ‘hackers,’ and the threat of ‘breaking in to’ a computer. Each respondent had at least one model from each of the two categories. For example, Nicole {6} believed that viruses were mischievous, and hackers are criminals who target big fish. These models are not necessarily mutually exclusive. For example, a few respondents talked about different types of hackers and would describe more than one folk model of hackers. Note that by listing and describing these folk models, in no way do I intend to imply that these models are incorrect or bad in any way. They are all certainly incomplete, and do not exactly correspond to the way malicious software or malicious computer users behave. But, as Kempton [19] learned in his study of home thermostats, what is important is not how accurate the model is but how well it serves the needs of the home computer user in making security decisions. Additionally, there is no “correct” model that can serve as a comparison. Even security experts will disagree as to the correct way to think about viruses or hackers. To show an extreme example, Medin et al. [22] conducted a study of expert fishermen in the Northwoods of Wisconsin. They looked at the mental models of both Native American fishermen and of majority-culture fishermen.
Despite both groups being experts, the two groups showed dramatic differences in the way fish were categorized and classified. Majority-culture fishermen grouped fish into standard taxonomic and goal-oriented groupings, while Native American fishermen grouped fish mostly by ecological niche. This illustrates how even experts can have dramatically different mental models of the same phenomenon, and any single expert’s model is not necessarily correct. However, experts and novices do tend to have very different models; Asgharpour et al. [3] found strong differences between expert and novice computer users in their mental models of security.

Common Elements of Folk Models

Most respondents made a distinction between ‘viruses’ and ‘hackers.’ To them, these are two separate threats that can both cause problems. Some people believed that viruses are created by hackers, but they still usually saw them as distinct threats. A few respondents realized this and tried to describe the difference; for example at one point in the interview Irving tries to explain the distinction by saying “The hacker is an individual hacking, while the virus is a program infecting.” After some thought, he clarifies his idea of the difference a bit: “So it’s a difference between something automatic and more personal.” This description is characteristic of how many respondents think about the difference: viruses are usually more programmatic and automatic, whereas hacking is more like manual labor, requiring the hacker to be sitting in front of a computer entering commands. This distinction between hackers and viruses is not something that most of the respondents had thought about; it existed in their mental model but not at a conscious level. Upon prompting, Dana decides that “I guess if they hack into your system and get a virus on there, it’s gonna be the same thing.” She had never realized that they were distinct in her mind, but it makes sense to her that they might be related. She then goes on to ask the interviewer if she gets hacked, can she forward it on to other people? This also illustrates another common feature of these interviews. When exposed to new information, most of the respondents would extrapolate and try to apply that information to slightly different settings. When Dana was prompted to think about the relationship between viruses and hackers, she decided that they were more similar than she had previously realized. Then she began to apply ideas from one model (viruses spreading) to the other model (can hackers spread also?) by extrapolating from her current models.
This is a common technique in human learning and sensemaking [25]. I suspect that many details of the mental models were formed in this way. Extrapolation is also useful for analysis; how respondents extrapolate from new information reveals details about mental models that are not consciously salient during interviews [8, 11]. During the interviews I used a number of prompts that were intended to challenge mental models and force users to extrapolate in order to help surface more elements of their mental models.

2.1 Models of Viruses and other Malware

All of the respondents had heard of computer viruses and possessed some mental model of their effects and transmission. The respondents focused their discussion primarily on the effects of viruses and the possible methods of transmission. In the second round of interviews, I prompted respondents to discuss how and why viruses are created by asking them to react to a number of hypothetical scenarios. These scenarios help me understand how the respondents apply these models to make security-relevant decisions. All of the respondents used the term ‘virus’ as a catch-all term for malicious software. Everyone seemed to recognize that viruses are computer programs. Almost all of the respondents classify many different types of malicious software under this term: computer viruses, worms, trojans, adware, spyware, and keyloggers were all mentioned as ‘viruses.’ The respondents don’t make the distinctions that most experts do; they just call any malicious computer program a ‘virus.’ Thanks to the term ‘virus,’ all of the respondents used some sort of medical terminology to describe the actions of malware. Getting malware on your computer means you have ‘caught’ the virus, and your computer is ‘infected.’ Everyone who had a Mac seemed to believe that Macs are ‘immune’ to virus and hacking problems (but were worried anyway).

Overall, I found four distinct folk models of ‘viruses.’ These models differed in a number of ways. One of the major differences is how well-specified and detailed the model was, and therefore how useful the model was for making security-related decisions. One model was very under-specified, labeling viruses as simply ‘bad.’ Respondents with this model had trouble using it to make any kind of security-related decisions because the model didn’t contain enough information to provide guidance. Two other models (the Mischief and Crime models) were fairly well-described, including how viruses are created and why, and what the major effects of viruses are. Respondents with these models could extrapolate from them to many different situations and use them to make many security-related decisions on their computer. Table 1 summarizes the major differences between the four models.

{6} All respondents have been given pseudonyms for anonymity.

2.1.1 Viruses are Generically ‘Bad’

A few subjects had a very under-developed model of viruses. These subjects knew that viruses cause problems, but these subjects couldn’t really describe the problems that viruses cause. They just knew that they were generically ‘bad’ to get and should be avoided. Respondents with this model knew of a number of different ways that viruses are transmitted. These transmission methods seemed to be things that the subjects had heard about somewhere, but the respondents did not attempt to understand these or organize them into a more coherent mental model. Zoe believed that viruses can come from strange emails, or from “searching random things” on the Internet. She says she had heard that blocking popups helps with viruses too, and seemed to believe that without questioning. Peggy had heard that viruses can come from “blinky ads like you’ve won a million bucks.” Respondents with this model are uniformly unconcerned with getting viruses: “I guess just my lack of really doing much on the Internet makes me feel like I’m safer.” (Zoe). A couple of people with this model use Macintosh computers, which they believe to be “immune” to computer viruses. Since they are immune, it seems that they have not bothered to form a more complete model of viruses. Since these users are not concerned with viruses, they do not take any precautions against being infected. These users believe that their current behavior doesn’t really make them vulnerable, so they don’t need to go to any extra effort. Only one respondent with this model uses an anti-virus program, but that is because it came installed on the computer. These respondents seem to recognize that anti-virus software might help, but are not concerned enough to purchase or install it.

2.1.2 Viruses are Buggy Software

One group of respondents saw computer viruses as an exceptionally bug-ridden form of regular computer software. In many ways, these respondents believe that viruses behave much like most of the other software that home users experience. But to be a virus, it has to be ‘bad’ in some additional way. Primarily, viruses are ‘bad’ in that they are poorly written software. They lead to a multitude of bugs and other errors in the computer. They bring out bugs in other pieces of software. They tend to have more bugs, and worse bugs, than most other pieces of software. But all of the effects they cause are the same types of effects you get from buggy software: viruses can cause computers to crash, or to “boot me out” (Erica) of applications that are running; viruses can accidentally delete or “wipe out” information (Christine and Erica); they can erase important system files. In general, the computer just “doesn’t function properly” (Erica) when it has a virus. Just like normal software, viruses must be intentionally placed on the computer and executed. Viruses do not just appear on a computer. Rather than ‘catching’ a virus, computers are actively infected, though often this infection is accidental. Some viruses come in the form of email attachments. But they are not a threat unless you actually “click” on the attachment to run it. If you are careful about what you click on, then you won’t get the virus. Another example is that viruses can be downloaded from websites, much like many other applications. Erica believes that sometimes downloading games can end up causing you to download a virus. But still, intentional downloading and execution is necessary to be infected with a virus, much the same way that intentional downloading and execution is necessary to run programs from the Internet. Respondents with this model did not feel that they needed to exert a lot of effort to protect themselves from viruses.
Mostly, these users tried to not download and execute programs that they didn’t trust. Sarah intentionally “limits herself” by not downloading any programs from the Internet so she doesn’t get a virus. Since viruses must be actively executed, anti-virus programs are not important. As long as no one downloads and runs programs from the Internet, no virus can get onto the computer. Therefore, anti-virus programs that detect and fix viruses aren’t needed. However, two respondents with this model run anti-virus software just in case a virus is accidentally put on the computer. Overall, this is a somewhat underdeveloped mental model of viruses. Respondents who possessed this model had never really thought about how viruses are created, or why. When asked, they talk about how they haven’t thought about it, and then make guesses about how ‘bad people’ might be the ones who create them. These respondents haven’t put too much thought into their mental model of viruses; all of the effects they discuss are either effects they have seen or more extreme versions of bugs they have seen in other software. Christine says “I guess I would know [if I had a virus], wouldn’t I?” presuming that any effects the virus has would be evident in the behavior of the computer. No connection is made between hackers and viruses; they are distinct and separate entities in the respondents’ minds.

2.1.3 Viruses Cause Mischief

A good number of respondents believed that viruses are pieces of software that are intentionally annoying. Someone created the virus for the purpose of annoying computer users and causing mischief. Viruses sometimes have effects that are much like extreme versions of annoying bugs: crashing your computer, deleting important files so your computer won’t boot, etc. Often the effects of viruses are intentionally annoying, such as displaying a skull and crossbones upon boot (Bob), displaying advertising popups (Floyd), or downloading lots of pornography (Dana). While these respondents believe that viruses are created to be annoying, they rarely have a well-developed idea of who created them. They don’t naturally mention a creator for the viruses, just a reason why they are created. When pushed, these respondents will talk about how they are probably created by “hackers” who fit the Graffiti hacker model below. But the identity of the creator doesn’t play much of a role in making security decisions with this model. Respondents with this model always believe that viruses can be “caught” by actively clicking on them and executing them. However, most respondents with this model also believe that viruses can be “caught” by simply visiting the wrong webpages. Infection here is very passive and can come just from visiting the webpage. These webpages are often considered to be part of the ‘bad’ part of the Internet. Much like graffiti appears in the ‘bad’ parts of cities, mischievous viruses are most prevalent on the bad parts of the Internet. While most everyone believes that care in clicking on attachments or downloads is important, these respondents also try to be careful about where they go on the Internet. One respondent (Floyd) tries to explain why: cookies are automatically put on your computer by websites, and therefore, viruses being automatically put on your computer could be related to this.
These ‘bad’ parts of the Internet where you can easily contract viruses are frequently described as morally ambiguous webpages. Pornography is always considered shady, but some respondents also included entertainment websites where you can play games, and websites that have been on the news like “MySpaceBook” (Gina). Some respondents believed that a “secured” website would not lead to a virus, but Gail acknowledged that at some sites “maybe the protection wasn’t working at those sites and they went bad.” (Note the passive voice; again, she has not thought about how sites go bad or who causes them to go bad. She is just concerned with the outcome.)

2.1.4 Viruses Support Crime

Finally, some respondents believe that viruses are created to support criminal activities. Almost uniformly, these respondents believe that identity theft is the end goal of the criminals who create these viruses, and the viruses assist them by stealing personal and financial information from individual computers. For example, respondents with this model worry that viruses are looking for credit card numbers, bank account information, or other financial information stored on their computer.

Since the main purpose of these viruses is to collect information, the respondents who have this model believe that viruses often remain undetected on computers. These viruses do not explicitly cause harm to the computer, and they do not cause bugs, crashes, or other problems. All they do is send information to criminals. Therefore, it is important to run an anti-virus program on a regular basis because it is possible to have a virus on your computer without knowing it. Since viruses don’t harm your computer, backups are not necessary.

People with this model believed that there are many different ways for these viruses to spread. Some viruses spread through downloads and attachments. Other viruses can spread “automatically,” without requiring any actions by the user of the computer. Also, some people believe that hackers will install this type of virus onto the computer when they break in. Given this wide variety of transmission methods and the serious nature of identity theft, respondents with this model took many steps to try to stop these viruses. These users would work to keep their anti-virus up to date, purchasing new versions on a regular basis. Often, they would notice when the anti-virus conducted a scan of their computer and check the results. Valerie would even turn her computer off when it is not in use to avoid potential problems with viruses.

2.1.5 Multiple Types of Viruses

A couple of respondents discussed multiple types of viruses on the Internet. These respondents believed that some viruses are mischievous and cause annoying problems, while other viruses support crime and are difficult to detect. All users who talked about more than one type of virus talked about both of the previous two virus folk models: the mischievous viruses and the criminal viruses. One respondent, Jack, also talked about a third type of virus that was created by anti-virus companies, but he seemed to regard this as a conspiracy theory, and consequently didn’t take that suggestion very seriously.

Respondents with multiple models generally would take all of the precautions that either model would predict. For example, they would make regular backups in case they caught a mischievous virus that damaged their computer, but they also would regularly run their anti-virus program to detect the criminal viruses that don’t have noticeable effects. This fact suggests that information sharing between users may be beneficial; when users believe in multiple types of viruses, they take appropriate steps to protect against all types.

2.2 Models of Hackers and Break-ins

The second major category of folk models describes the attackers, or the people who cause Internet security problems. These attackers are always given the name “hackers,” and all of the respondents seemed to have some concept of who these people were and what they did. The term “hacker” was applied to describe anyone who does bad things on the Internet, no matter who they are or how they work.

All of the respondents describe the main threat that hackers pose as “breaking in” to their computer. They disagreed about why a hacker would want to “break in” to a computer, and about which computers hackers would target for their break-ins, but everyone agreed on the terminology for this basic action. To the respondents, breaking in to a computer meant that the hacker could then use the computer as if they were sitting in front of it, and could cause a number of different things to happen to the computer. Many respondents stated that they did not understand how this worked, but they still believed it was possible.

My respondents described four distinct folk models of hackers. These models differed mainly in who they believed these hackers were, what they believed motivated these people, and how they chose which computers to break in to. Table 2 summarizes the four folk models of hackers.

2.2.1 Hackers are Digital Graffiti Artists

One group of respondents believe that hackers are technically skilled people causing mischief. There is a collection of individuals, usually called “hackers,” that use computers to cause a technological version of mischief. Often these users are envisioned as “college-age computer types” (Kenneth). They see hacking computers as a sort of digital graffiti; hackers break in to computers and intentionally cause problems so they can show off to their friends. Victim computers are a canvas for their art.

When respondents with this model talked about hackers, they usually focused on two features: strong technical skills and the lack of proper moral restraint. Strong technical skills provide the motivation; hackers do it “for sheer sport” (Lorna) or to demonstrate technical prowess (Hayley). Some respondents envision a competition between hackers, where more sophisticated viruses or hacks “prove you’re a better hacker” (Kenneth); others see creating viruses and hacking as part of “learning about the Internet” (Jack). Lack of moral restraint is what makes them different from others with technical skills; hackers are sometimes described as maladjusted individuals who “want to hurt others for no reason” (Dana). Respondents will describe hackers as “miserable” people. They feel that hackers do what they do for no good reason, or at least no reason they can understand. Hackers are believed to be lone individuals; while they may have hacker friends, they are not part of any organization.

Users with this model often focus on the identity of the hacker. This identity (a young computer geek with poor morals) is much more developed in their mind than the resulting behavior of the hacker. As such, people with this model can usually talk clearly and give examples of who hackers are, but seem less confident in information about the resulting break-ins that happen.

These hackers like to break stuff on the computer to create havoc. They will intentionally upload viruses to computers to cause mayhem. Many subjects believe that hackers intentionally cause computers harm; for example, Dana believes that hackers will “fry your hard drive.” Hackers might install software to let them control your computer; Jack talked about how a hacker would use his instant messenger to send strange messages to his friends.

These mischievous hackers were seen as not targeting specific individuals, but rather choosing random strangers to target. This is much like graffiti; the hackers need a canvas and choose whatever computer they happen to come upon. Because of this, the respondents felt like they might become a victim of this type of hacking at any time. Often, respondents felt that there wasn’t much they could do to protect themselves from this type of hacking. This was because they didn’t understand how hackers were able to break into computers, so they didn’t know what could be done to stop it. This would lead to a feeling of futility: “if they are going to get in, they’re going to get in” (Hayley). This feeling of futility echoes similar statements discussed by Dourish et al. [12].

2.2.2 Hackers are Burglars Who Break Into Computers for Criminal Purposes

Another set of respondents believe that hackers are criminals that happen to use computers to commit their crimes. Other than the use of the computer, they share a lot in common with other professional criminals: they are motivated by financial gain, and they can do what they do because they lack common morals. They would “break into” computers to look for information much like a burglar will break into houses to look for valuables. The most salient part of this folk model is the behavior of the hacker; the respondents could talk in detail about what the hackers were looking for but spoke very little about the identity of the hacker.

Almost exclusively, this criminal activity is some form of identity theft. For example, respondents believe that if a hacker obtains their credit card number, then that hacker can make fraudulent charges with it. But the respondents weren’t always sure what kind of information the hacker was specifically looking for; they just described it as information the hacker could use to make money. Ivan talked about how hackers would look around the computer much like a thief might rummage around in an attic, looking for something useful. Erica used a different metaphor, saying that hackers would “take a digital photo of everything on my computer” and look in it for useful identity information. Usually, the respondents envision the hacker himself using this financial information (as opposed to selling the information to others).

Since hackers target information, the respondents believe that computers are not harmed by the break-ins. Hackers look for information, but do not harm the computer. They simply rummage around, “take a digital photo,” possibly install monitoring software, and leave. The computer continues to work as it did before. The main concern of the respondents is how the hacker might use the information that they steal.

These hackers choose victims opportunistically; much like a mugger chooses his victims, these hackers will break into any computers they run across to look for valuable information. Or, more accurately, the respondents don’t have a good model of how hackers choose, and believe that there is a decent chance that they will be a victim someday. Gail talks about how hackers are opportunistic, saying “next time I go to their site they’ll nab me.” Hayley believes that they just choose computers to attack without knowing much about who owns them.

Respondents with this belief are willing to take steps to protect themselves from hackers to avoid becoming a victim. Gail tries to avoid going to websites she’s not familiar with to prevent hackers from discovering her. Jack is careful to always sign out of accounts and websites when he is finished. Hayley shuts off her computer when she isn’t using it so hackers cannot break into it.

2.2.3 Hackers are Criminals who Target Big Fish

Another group of respondents had a conceptually similar model. This group also believes that hackers are Internet criminals who are looking for information to conduct identity theft. However, this group has thought more about how these hackers can best accomplish this goal, and has come to some different conclusions. These respondents believe in “massive hacker groups” (Hayley) and other forms of organization and coordination among criminal hackers.

Most tellingly, this group believes that hackers only target the “big fish.” Hackers primarily break into computers of important and rich people in order to maximize their gains. Every respondent who holds this model believes that he or she is not likely to be a victim because he or she is not a big enough fish. They believe that hackers are unlikely to ever target them, and therefore they are safe from hacking. Irving believes that “I’m small potatoes and no one is going to bother me.” They often talk about how other people are more likely targets: “Maybe if I had a lot of money” (Floyd) or “like if I were a bank executive” (Erica).

For these respondents, protecting against hackers isn’t a high priority. Mostly they find reasons to trust existing security precautions rather than taking extra steps to protect themselves. For example, Irving talked about how he trusts his pre-installed firewall program to protect him. Both Irving and Floyd trust their passwords to protect them. Basically, their actions indicate that they believe in the speed bump theory: if standard security technologies make things slightly harder for hackers, hackers will decide it isn’t worthwhile to target them.

2.2.4 Hackers are Contractors Who Support Criminals

Finally, there is a sort of hybrid model of hackers. In this view, hackers, as people, are very similar to the mischievous graffiti-hackers from above: they are college-age, technically skilled individuals. However, their motivations are more intentional and criminal. These hackers are out to steal personal and financial information from people.

Users with this model show evidence of more effort in thinking through their mental model and integrating the various sources of information they have. This model can be seen as a hybrid of the mischievous graffiti-hacker model and the criminal hacker model, integrated into a coherent form by combining the most salient part of the mischievous model (the identity of the hacker) and the most salient part of the criminal model (the criminal activities). Also, everyone who had this model expressed a concern about how hacking works. Kenneth stated that he doesn’t understand how someone can break into a computer without sitting in front of it. Lorna wondered how you can start a program running; she feels you have to be in front of the computer to do that. This indicates that these respondents are actively trying to integrate the information they have about hackers into a coherent model of hacker behavior.

Since these hackers are first and foremost young technical people, the respondents believe that these hackers are not likely to be identity thieves. They believe that the hackers are more likely to sell this identity information for others to use. Since the hackers just want to sell information, the respondents reason, they are more likely to target large databases of identity information, such as those held by banks or retailers like Amazon.com.

Respondents with this model believed that hackers weren’t really their problem. Since these hackers tended to target larger institutions like banks or e-commerce websites, their own personal computers weren’t in danger. Therefore, no effort was needed to secure their personal computers. However, all respondents with this model expressed a strong concern about who they do business with online. These respondents would only make purchases from, or provide personal information to, institutions they trusted to get the security right and figure out how to be protected against hackers. These users were highly sensitive to third parties possessing their data.

2.2.5 Multiple Types of Hackers

Some respondents believed that there were multiple types of hackers. Most of the time, these respondents would believe that some hackers are the mischievous graffiti-hackers and that other hackers are criminal hackers (using either the burglar or big fish model, but not both). These respondents would then try to make the effort to protect themselves from both types of hacker threats as necessary.

It seems that there is some amount of cognitive dissonance that occurs when respondents hear about both mischievous hackers and criminal hackers. There are two ways that respondents resolve this. The simplest is to believe that some hackers are mischievous and other hackers are criminals, and consequently keep the models separate. A more complicated way is to try to integrate the two models into one coherent belief about hackers. This latter option involves a lot of effort in making sense of a new folk model that is not as clear or as commonly shared as the mischievous and criminal models. The ‘contractor’ model of hackers is the result of this integration of the two types of hackers.

3. FOLLOWING SECURITY ADVICE

Computer security experts have been providing security advice to home computer users for many years now. There are many websites devoted to doling out security advice, and numerous technical support forums where home computer users can ask security-related questions. There has been much effort to simplify security advice so regular computer users can easily understand and follow it. However, many home computer users still do not follow this advice. This is evident from the large number of security problems that plague home computers.

There is disagreement among security experts as to why this advice isn’t followed. Some experts seem to believe that home users do not understand the security advice, and therefore more education is needed. Others seem to believe that home users are simply incapable of consistently making good security decisions [10]. However, neither of these explanations accounts for which advice does get followed and which does not. The folk models described above begin to provide an explanation of which expert advice home computer users choose to follow, and which advice they choose to ignore. By better understanding why people choose to ignore certain pieces of advice, we can better craft that advice and technologies to have a greater effect.

In Table 3, I list 12 common pieces of security advice for home computer users. This advice was collected from the Microsoft Security at Home website {7}, the CERT Home Computer Security website {8}, and the US-CERT Cyber-Security Tips website {9}, and much of this advice is duplicated across websites. This advice represents the distilled wisdom of many computer security experts. The table then summarizes, for each folk model, whether that advice is important to follow, helpful but not essential, or not necessary to follow.

To me, the most interesting entries indicate when users believe that a piece of security advice is not necessary to follow (labeled ‘xx’ in the table). These entries show how home computer users apply their folk models to determine for themselves whether a given piece of advice is important. Also interesting are the entries labeled ‘??’; these entries indicate places where users believe that the advice will help with security, but do not see the advice as so important that it must always be followed. Often users will decide that following advice labeled with ‘??’ is too costly in terms of effort or money, and decide to ignore it. Advice labeled ‘!!’ is extremely important, and the respondents feel that it should never be ignored, even if following it is inconvenient, costly, or difficult.

{7} http://www.microsoft.com/protect/default.mspx, retrieved July 5, 2009

{8} http://www.cert.org/homeusers/HomeComputerSecurity/, retrieved July 5, 2009

{9} http://www.us-cert.gov/cas/tips/, retrieved July 5, 2009

3.1 Anti-Virus Use

Advice 1–3 has to do with anti-virus technology: Advice #1 states that anti-virus software should be used; #2 states that the virus signatures need to be constantly updated to be able to detect current viruses; and #3 states that the anti-virus software should regularly scan a computer to detect viruses. All of these are best practices for using anti-virus software.

Respondents mostly use their folk models of viruses to make decisions about anti-virus use, for obvious reasons. Respondents who believe that viruses are just buggy software also believe it is not necessary to run anti-virus. They think they can keep viruses off of their computer by controlling what gets installed on their computer; they believe viruses need to be executed manually to infect a computer, and if they never execute one then they don’t need anti-virus.

Respondents with the under-developed folk model of viruses, who refer to viruses as generically ‘bad,’ also do not use anti-virus software. These people understand that viruses are harmful and that anti-virus software can stop them. However, they have never really thought about specific harms a virus might cause to them. Lacking an understanding of the threats and potential harm, they generally find it unnecessary to exert the effort to follow the best practices around anti-virus software.

Finally, one group of respondents believe that anti-virus software can help stop hackers. Users with the burglar model of hackers believe that regular anti-virus scans can be important because these burglar-hackers will sometimes install viruses to collect personal information. Regular anti-virus use can help detect these hackers.

3.2 Other Security Software

Advice #4 concerns other types of security software; home computer users should run a firewall or more comprehensive Internet security suite. I think that most of the respondents didn’t understand what this security software did, other than a general notion of providing “security.” As such, no one included security software as an important component of their mental model. Respondents who held the graffiti-hacker or burglar-hacker models believed that this software must help with hackers somehow, even though they don’t know how, and would suggest installing it. But since they do not understand how it works, they do not consider it of vital importance. This highlights an opportunity for home user education; if these respondents better understood how security software helps protect against hackers, they might be more interested in using it and maintaining it.

One interesting belief about this software comes from the respondents who believe hackers only go after big fish. For these respondents, security software can serve as a speed bump that discourages hackers from casually breaking into their computer. These people don’t care exactly how it works as long as it does something.

3.3 Email Security

Advice #5 is the only piece of advice about email on my list. It states that you shouldn’t open attachments from people you don’t recognize. Everyone in my sample was familiar with this advice and had taken it to heart. Everyone believed that viruses can be transmitted through email attachments, and therefore not clicking on unknown attachments can help prevent viruses.

3.4 Web Browsing

Advice 6–9 all deal with security behaviors while browsing the web. Advice #6 states that users need to ensure that they only download and run programs from trustworthy sources. Many types of malware are spread through downloads. #7 states that users should only browse webpages from trustworthy sources. There are many types of malicious websites such as phishing websites, and some websites can spread malware simply by visiting the site and executing the JavaScript on the website. #8 states that users should disable scripting like Java and JavaScript in their web browsers. Often there are vulnerabilities in these scripts, and some malware uses these vulnerabilities to spread. And #9 suggests using good passwords so attackers cannot guess their way into your accounts.

Overall, many respondents would agree with most of this advice. However, no one seemed to understand the advice about web scripts; indeed, no one seemed to even understand what a web script was. Advice #8 was largely ignored because it wasn’t understood.

Everyone understood the need for care in choosing what to download. Downloads were strongly associated with viruses in most respondents’ minds. However, only users with well-developed models of viruses (the Mischief and Support Crime models) believed that viruses can be “caught” simply by browsing web pages. People who believed that viruses were buggy software didn’t see browsing as dangerous because they weren’t actively clicking on anything to run it.

While all of the respondents expressed some knowledge of the importance of passwords, few exerted extra effort to make good passwords. Everyone understood that, in general, passwords are important, but they couldn’t explain why. Respondents with the graffiti hacker model would sometimes put extra effort into their passwords so that mischievous hackers couldn’t mess up their accounts. And respondents who believed that hackers only target big fish thought that passwords could be an effective speed bump to prevent hackers from casually targeting them.

Respondents who believed in hackers as contractors to criminals uniformly believed that they were not targets of hackers and were therefore safe. However, they were careful in choosing which websites to do business with. Since these hackers target web businesses with lots of personal or financial information, it is important to only do business with websites that are trusted to be secure.

3.5 Computer Maintenance

Finally, Advice 10–12 concerns computer maintenance. Advice #10 suggests that users make regular backups in case some of their data is lost or corrupted. This is good advice for both security and non-security reasons. #11 states that it is important to keep the system patched with the latest updates to protect against known vulnerabilities that hackers and viruses can exploit. And #12 echoes the old maxim that the most secure machine is one that is turned off.

Different models had dramatically different suggestions as to which types of maintenance are important. For example, mischievous viruses and graffiti hackers can cause data loss, so users with those models feel that backups are very important. But users who believe in more criminal viruses and hackers don’t feel that backups are necessary; hackers and viruses steal information but don’t delete it.

Patching is an important piece of advice, since hackers and viruses need vulnerabilities to exploit. Most respondents only experience patches through the automatic updates feature in their operating system or applications. Respondents mostly associated the patching advice with hackers; respondents who felt that they would be a target of hackers also felt that patching was an important tool to stop hackers. Respondents who believed that viruses are buggy software feel that viruses also bring out more bugs in other software on the computer; patching the other software makes it more difficult for viruses to cause problems.
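The way a folk model filters advice can be made concrete as a small lookup keyed by model and advice number, using the ‘!!’, ‘??’, and ‘xx’ labels described above. The sketch below is illustrative only. It encodes just a handful of entries that the prose states outright, the label assignments are a reading of that prose rather than a copy of Table 3, and the names `Importance`, `RATINGS`, and `advice_ignored_by` are hypothetical.

```python
from enum import Enum


class Importance(Enum):
    """Labels used in the text for how a folk model rates a piece of advice."""
    IMPORTANT = "!!"      # should never be ignored
    HELPFUL = "??"        # helps, but may be skipped if too costly
    NOT_NECESSARY = "xx"  # the model says this advice can be ignored


# Paraphrased advice, keyed by the advice numbers used in the text.
ADVICE = {
    1: "Use anti-virus software",
    4: "Run a firewall or Internet security suite",
    10: "Make regular backups",
}

# Partial and illustrative: only entries the prose states directly.
# The full table has 12 pieces of advice and eight folk models.
RATINGS = {
    ("Buggy Software", 1): Importance.NOT_NECESSARY,   # never runs untrusted programs
    ("Graffiti", 4): Importance.HELPFUL,               # "must help somehow"
    ("Burglar", 4): Importance.HELPFUL,
    ("Mischief", 10): Importance.IMPORTANT,            # viruses can delete files
    ("Graffiti", 10): Importance.IMPORTANT,            # hackers can cause data loss
    ("Supports Crime", 10): Importance.NOT_NECESSARY,  # viruses steal, not destroy
}


def advice_ignored_by(model):
    """Return the advice numbers that the given folk model rates as unnecessary."""
    return sorted(
        number
        for (name, number), rating in RATINGS.items()
        if name == model and rating is Importance.NOT_NECESSARY
    )


for model in ("Buggy Software", "Supports Crime", "Graffiti"):
    skipped = [ADVICE[n] for n in advice_ignored_by(model)]
    print(model, "->", skipped)
```

Keeping the ratings in one flat mapping makes it easy to ask the question the section cares about: for a given model, which advice does the user feel free to skip?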

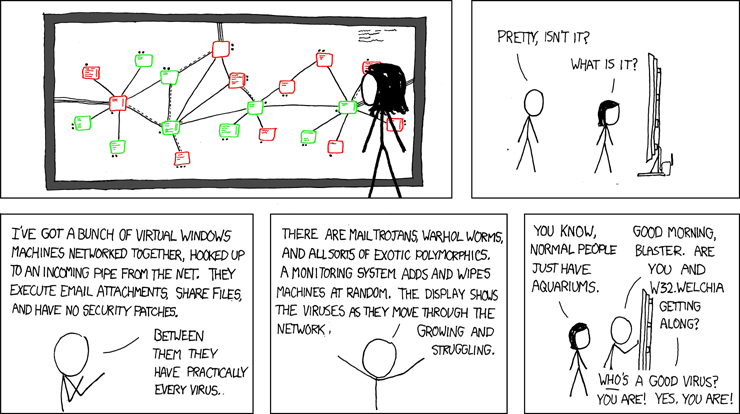

4. BOTNETS and the FOLK MODELS

This study was inspired by the recent rise of botnets as a strategy for malicious attackers. Understanding the folk models that home computer users employ in making security decisions sheds light on why botnets are so successful. Modern botnet software seems designed to take advantage of gaps and security weaknesses in multiple folk models. I begin by listing a number of stylized facts about botnets. These facts are not true about all botnets and botnet software, but they are true about many of the recent and large botnets.

1. Botnets attack third parties. When botnet viruses compromise a machine, that machine only serves as a worker. That machine is not the end goal of the attacker. The owner of the botnet intends to use that machine (and many others) to cause problems for third parties.

2. Botnets only want the Internet connection. The only thing the botnet wants on the victim computer is the Internet connection. Botnet software rarely takes up much space on the hard drive, rarely looks at existing data on the hard drive, rarely occupies much memory, and usually doesn’t use much CPU. Nothing that makes the computer unique is important.

3. Botnets don’t directly harm the host computer. Most botnet software, once installed, does not directly cause harm to the machine it is running on. It consumes resources, but often botnet software is configured to only use the resources at times they are otherwise unused (like running in the middle of the night). Some botnets even install patches and software updates so that other botnets cannot also use the computer.

4. Botnets spread automatically through vulnerabilities. Botnets often spread through automated compromises. They automatically scan the Internet, compromise any vulnerable computers, and install copies of the botnet software on the compromised computers. No human intervention is required; neither the attacker nor the zombie owner nor the vulnerable computer owner needs to be sitting at their computer at the time.

These stylized facts about botnets are not true for all botnets, but hold for many of the current, large, well-known, and well-studied botnets. I believe that botnet software effectively takes advantage of the limited and incomplete nature of the folk models of home computer users. Table 4 illustrates how each model does or does not incorporate the possibility of each of the stylized facts about botnets.

Botnets attack third parties.

None of the hacker models would predict that compromises would be used to attack third parties. Respondents who held either the Big Fish or the Contractor mental model believe that, since hackers don’t want anything on the computer, they would target other computers and leave the unwanted computer alone. Respondents with the Burglar model believe that they might be a target, but only because the hacker wants something that might be on their computer. They would believe that once the hacker either finds what they were looking for, or cannot find anything interesting, then the hacker would leave. Respondents with the Graffiti model believe that hacking and vandalizing the computer is the end goal; it would never cross their mind that the computer might then be used to attack third parties.

None of the respondents used their virus models to discuss potential third parties either. A couple of respondents with the Viruses are Bad model mentioned that once they got a virus, it might try to “spread.” However, they had no idea how this spreading might happen. Spreading is a form of harm to third parties; however, it is not the coordinated and intentional harm that botnets cause. Respondents who employed the other three virus models never mentioned the possibility of spreading beyond their computers. They were mostly focused on what the virus would do to them, and not on how it might affect others. Also, the ideas they did have about how viruses spread only involved webpages and email. They don’t run a webpage on their computer, and no one acknowledged that a virus could use their email to send copies out.

Botnets only want the Internet connection.

No one in this study could conceive of a hacker or virus that only wanted the Internet connection of their computer. The three crime-based hacker models (Burglar, Big Fish, and Contractor) all hold that hackers are actively looking for something stored on the computer. All the respondents with these three models believed that their computer had (or might have) some specific and unique information that hackers wanted. Respondents with the Graffiti model believed that computers are a sort of canvas for digital mischief. I would guess that they might believe that botnet owners would only want the Internet connection; they believe there is nothing unique about their computer that makes hackers want to do digital graffiti on it.

None of the virus models would have anything to say about this fact. Respondents with the Viruses are Bad and Buggy Software models didn’t attribute any intentionality to viruses. Respondents with the Mischief and Support Crime models believed viruses were created for a reason, but didn’t seem to think about the computer being used to spread the virus.

Botnets don’t harm the host computer.

This is the one stylized fact on this list that any respondents explicitly mentioned. Respondents with the Support Crime model believe that viruses might try to hide on the computer and not display any outward signs of their presence. Respondents who employ one of the other three virus models would find this strange; to them, viruses always create visible effects. To users with the Mischief model, these visible effects are the main point of the virus! Additionally, the three folk models of hackers that relate to crime all include the idea that a ‘break in’ by hackers might not harm the computer. To these respondents, since hackers are just looking for information, they don’t necessarily want to harm the computer. Respondents who use the Graffiti model would find compromises that don’t harm the computer to be strange, as the main purpose of ‘breaking into’ computers is to vandalize them.

Botnets spread automatically.

The idea that botnets spread without human intervention would be strange to most of the respondents. Almost all of the respondents believed that hackers had to be sitting in front of some computer somewhere when they were “breaking into” computers. Indeed, two of the respondents even asked the interviewer how it was possible to use a computer without being in front of it. Most respondents believed that viruses generally also required some form of human intervention in order to spread. Viruses could be ‘caught’ by visiting webpages, by downloading software, or by clicking on emails. But all of those required someone to actively use the computer. Only one subject explicitly mentioned that viruses can “just happen” (Jack). Respondents with the Viruses are Bad model understood that viruses could spread, but didn’t know how. These respondents might not be surprised to learn that viruses can spread without human intervention, but probably haven’t thought about it enough for that fact to be salient.

Summary

Botnets are extremely cleverly designed. They take advantage of home computer users by operating in a very different manner from the one conceived of by the respondents in this study. The only stylized fact listed above that a decent number of my respondents would recognize as a property of attacks is that botnets don’t cause harm to the host computer. And not everyone in the study would believe this; some respondents had a mental model where not harming the computer wouldn’t make sense. This analysis illustrates why eliminating botnets is so difficult. Many home computer users probably have similar folk models to the ones possessed by the respondents in this study. If so, botnets look very different from the threats envisioned by many home computer users. Since home computer users do not see this as a potential threat, they do not take appropriate steps to protect themselves.

5. LIMITATIONS and MOVING FORWARD

Home computer users conceptualize security threats in multiple ways; consequently, users make different decisions based on their conceptualization. In my interviews, I found four distinct ways of thinking about malicious software as a security threat: the ‘viruses are bad,’ ‘buggy software,’ ‘viruses cause mischief,’ and ‘viruses support crime’ models. I also found four more distinct ways of thinking about malicious computer users as a threat: thinking of malicious others as ‘graffiti artists,’ ‘burglars,’ ‘internet criminals who target big fish,’ and ‘contractors to organized crime.’ I did not use a generalizable sampling method. I am able to describe a number of different folk models, but I cannot estimate how prevalent each model is in the population. Such estimates would be useful in understanding nationwide vulnerability, but I leave these estimates to future work. I also cannot say if my list of folk models is exhaustive (there may be more models than I describe), but it does represent the opinions of a variety of home computer users. Indeed, the snowball sampling method increases the chances that I will interview users with similar folk models despite the demographic heterogeneity of my sample. Previous literature [12, 15] was able to describe some basic security beliefs held by non-technical users; I provide structure to these theories by understanding how home computer users group these into semi-coherent mental models in their minds. My primary contribution with this study is an understanding of why users strictly follow some security advice from computer security experts and ignore other advice. This illustrates one major problem with security education efforts: they do not adequately explain the threats that home computer users face; rather they focus on practical, actionable advice.
But without an understanding of threats, home computer users intentionally choose to ignore advice that they don’t believe will help them. Security education efforts should focus not only on recommending what actions to take, but also emphasize why those actions are necessary. Following the advice of Kempton [19], security experts should not evaluate these folk models on the basis of correctness, but rather on how well they meet the needs of the folk that possess them. Likewise, when designing new security technologies, we should not attempt to force users into a more ‘correct’ mental model; rather, we should design technologies that encourage users with limited folk models to be more secure. Effective security technologies need to protect the user from attacks, but also expose potential threats to the user in a way the user understands so that he or she is motivated to use the technology appropriately.

6. ACKNOWLEDGMENTS

I appreciate the many comments and help during the whole project from Jeff MacKie-Mason, Judy Olson, Mark Ackerman, and Brian Noble. Tiffany Vienot was also extremely helpful in helping me explain my methodology clearly. This material is based upon work supported by the National Science Foundation under Grant No. CNS 0716196.

7. REFERENCES

[1] A. Adams and M. A. Sasse. Users are not the enemy. Communications of the ACM, 42(12):40–46, December 1999.

[2] R. Anderson. Why cryptosystems fail. In CCS ’93: Proceedings of the 1st ACM conference on Computer and communications security, pages 215–227. ACM Press, 1993.

[3] F. Asgharpour, D. Liu, and L. J. Camp. Mental models of computer security risks. In Workshop on the Economics of Information Security (WEIS), 2007.

[4] P. Bacher, T. Holz, M. Kotter, and G. Wicherski. Know your enemy: Tracking botnets. The Honeynet Project, March 2005.

[5] P. Barford and V. Yegneswaran. An inside look at botnets. In Special Workshop on Malware Detection, Advances in Information Security. Springer-Verlag, 2006.

[6] J. L. Camp. Mental models of privacy and security. Available at http://papers.ssrn.com/sol3/papers.cfm?abstract_id=922735, August 2006.

[7] L. J. Camp and C. Wolfram. Pricing security. In Proceedings of the Information Survivability Workshop, 2000.

[8] A. Collins and D. Gentner. How people construct mental models. In D. Holland and N. Quinn, editors, Cultural Models in Language and Thought. Cambridge University Press, 1987.

[9] R. Contu and M. Cheung. Market share: Security market, worldwide 2008. Gartner Report: http://www.gartner.com/it/page.jsp?id=1031712, June 2009.

[10] L. F. Cranor. A framework for reasoning about the human in the loop. In Usability, Psychology, and Security Workshop. USENIX, 2008.

[11] R. D’Andrade. The Development of Cognitive Anthropology. Cambridge University Press, 2005.

[12] P. Dourish, R. Grinter, J. D. de la Flor, and M. Joseph. Security in the wild: User strategies for managing security as an everyday, practical problem. Personal and Ubiquitous Computing, 8(6):391–401, November 2004.

[13] D. M. Downs, I. Ademaj, and A. M. Schuck. Internet security: Who is leaving the ‘virtual door’ open and why? First Monday, 14(1-5), January 2009.

[14] R. E. Grinter, W. K. Edwards, M. W. Newman, and N. Ducheneaut. The work to make a home network work. In Proceedings of the 9th European Conference on Computer Supported Cooperative Work (ECSCW ’05), pages 469–488, September 2005.

[15] J. Gross and M. B. Rosson. Looking for trouble: Understanding end user security management. In Symposium on Computer Human Interaction for the Management of Information Technology (CHIMIT), 2007.

[16] C. Herley. So long, and no thanks for all the externalities: The rational rejection of security advice by users. In Proceedings of the New Security Paradigms Workshop (NSPW), September 2009.

[17] P. Johnson-Laird, V. Girotto, and P. Legrenzi. Mental models: a gentle guide for outsiders. Available at http://www.si.umich.edu/ICOS/gentleintro.html, 1998.

[18] P. N. Johnson-Laird. Mental models in cognitive science. Cognitive Science: A Multidisciplinary Journal, 4(1):71–115, 1980.

[19] W. Kempton. Two theories of home heat control. Cognitive Science: A Multidisciplinary Journal, 10(1):75–90, 1986.

[20] A. J. Kuzel. Sampling in qualitative inquiry. In B. Crabtree and W. L. Miller, editors, Doing Qualitative Research, chapter 2, pages 31–44. Sage Publications, Inc., 1992.

[21] J. Markoff. Attack of the zombie computers is a growing threat, experts say. New York Times, January 7 2007.

[22] D. Medin, N. Ross, S. Atran, D. Cox, J. Coley, J. Proffitt, and S. Blok. Folkbiology of freshwater fish. Cognition, 99(3):237–273, April 2006.

[23] M. B. Miles and M. Huberman. Qualitative Data Analysis: An Expanded Sourcebook. Sage Publications, Inc., 2nd edition, 1994.

[24] A. J. Onwuegbuzie and N. L. Leech. Validity and qualitative research: An oxymoron? Quality and Quantity, 41:233–249, 2007.

[25] D. Russell, S. Card, P. Pirolli, and M. Stefik. The cost structure of sensemaking. In Proceedings of the INTERACT ’93 and CHI ’93 conference on Human factors in computing system, 1993.

[26] Trend Micro. Taxonomy of botnet threats. Whitepaper, November 2006.

APPENDIX

This appendix contains samples of data matrix displays that were developed during the data analysis phase of this project.

CONTACT

Rick Wash

http://www.rickwash.com

email : wash [at] msu [dot] edu

SEE ALSO: SCRIPTS

http://noscript.net/faq

Q: Why can I sometimes see about:blank and/or wyciwyg: entries? What scripts are causing this?

A: about:blank is the common URL designating empty (newly created) web documents. A script can “live” there only if it has been injected (with document.write() or DOM manipulation, for instance) by another script which must have its own permissions to run. It usually happens when a master page creates (or statically contains) an empty sub-frame (automatically addressed as about:blank) and then populates it using scripting. Hence, if the master page is not allowed, no script can be placed inside the about:blank empty page and its “allowed” privileges will be void. Given the above, risks in keeping about:blank allowed should be very low, if any. Moreover, some Firefox extensions need it to be allowed for scripting in order to work. Sometimes, especially on partially allowed sites, you may see also a wyciwyg: entry. It stands for “What You Cache Is What You Get”, and identifies pages whose content is generated by JavaScript code through functions like document.write(). If you can see such an entry, you already allowed the script generating it, hence the above about:blank trust discussion applies to this situation as well.
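The reasoning in this answer can be sketched as a toy model. This is not NoScript's actual implementation: the `Page` class, the `inject` and `runnable_scripts` helpers, the example URLs, and the idea of looking permissions up by exact URL string are all assumptions made for illustration. It only shows why allowing about:blank grants nothing by itself: a script gets into the empty frame only if the master page was allowed to run the script that writes it there.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class Page:
    """A document in the toy model: a URL plus the scripts placed in it."""
    url: str
    scripts: List[str] = field(default_factory=list)


def is_allowed(page: Page, allowed: Set[str]) -> bool:
    # Assumption: permissions are looked up by exact URL string.
    return page.url in allowed


def inject(master: Page, frame: Page, script: str, allowed: Set[str]) -> bool:
    """The master page tries to write a script into an empty sub-frame.

    The write is performed by a script on the master page, so it can
    only happen when the master page itself is allowed to run scripts.
    """
    if not is_allowed(master, allowed):
        return False
    frame.scripts.append(script)
    return True


def runnable_scripts(page: Page, allowed: Set[str]) -> List[str]:
    """Scripts that would run in this page under the given allowlist."""
    return list(page.scripts) if is_allowed(page, allowed) else []


if __name__ == "__main__":
    allowed = {"about:blank"}  # about:blank is trusted, the master page is not
    master = Page("http://untrusted.example/")  # hypothetical site
    frame = Page("about:blank")
    inject(master, frame, "populateFrame()", allowed)
    print(runnable_scripts(frame, allowed))  # empty: nothing was injected
```

Adding the master page's URL to `allowed` makes `inject` succeed, and the frame's script then runs. In this model the about:blank permission only ever takes effect alongside a permission granted to the master page.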

Q: Why should I allow JavaScript, Java, Flash and plugin execution only for trusted sites?

A: JavaScript, Java and Flash, although they are very different technologies, have one thing in common: they execute code coming from a remote site on your computer. All three implement some kind of sandbox model, limiting the activities remote code can perform: e.g., sandboxed code shouldn’t read/write your local hard disk nor interact with the underlying operating system or external applications. Even if the sandboxes were bulletproof (not the case, read below) and even if you or your operating system wrap the whole browser with another sandbox (e.g. IE7+ on Vista or Sandboxie), the mere ability of running sandboxed code inside the browser can be exploited for malicious purposes, e.g. to steal important information you store or enter on the web (credit card numbers, email credentials and so on) or to “impersonate” you, e.g. in fake financial transactions, launching “cloud” attacks like Cross Site Scripting (XSS) or CSRF, with no need for escaping your browser or gaining privileges higher than a normal web page. This alone is enough reason to allow scripting on trusted sites only. Moreover, many security exploits aim to achieve a “privilege escalation”, i.e. exploiting an implementation error of the sandbox to acquire greater privileges and perform nasty tasks like installing trojans, rootkits and keyloggers.

This kind of attack can target JavaScript, Java, Flash and other plugins as well: